About This Website

Meta page describing Gwern.net site ideals of stable long-term essays which improve over time; idea sources and writing methodology; metadata definitions; site statistics; copyright license.

This page is about Gwern.net content; for the details of its implementation & design like the popup paradigm, see Design; and for information about me, see Links.

The Content

Of all the books I have delivered to the presses, none, I think, is as personal as the straggling collection mustered for this hodgepodge, precisely because it abounds in reflections and interpolations. Few things have happened to me, and I have read a great many. Or rather, few things have happened to me more worth remembering than Schopenhauer’s thought or the music of England’s words.

A man sets himself the task of portraying the world. Through the years he peoples a space with images of provinces, kingdoms, mountains, bays, ships, islands, fishes, rooms, instruments, stars, horses, and people. Shortly before his death, he discovers that that patient labyrinth of lines traces the image of his face.

The content here varies from statistics to psychology to self-experiments/Quantified Self to philosophy to poetry to programming to anime to investigations of online drug markets or leaked movie scripts (or two topics at once: anime & statistics or anime & criticism or heck anime & statistics & criticism!).

I believe that someone who has been well-educated will think of something worth writing at least once a week; to a surprising extent, this has been true. (I added ~130 documents to this repository over the first 3 years.)

Target Audience

Special knowledge can be a terrible disadvantage if it leads you too far along a path you cannot explain anymore.

I don’t write simply to find things out, although curiosity is my primary motivator, as I find I want to read something which hasn’t been written—“…I realised that I wanted to read about them what I myself knew. More than this—what only I knew. Deprived of this possibility, I decided to write about them. Hence this book.”1 There are many benefits to keeping notes as they allow one to accumulate confirming and especially contradictory evidence2, and even drafts can be useful so you Don’t Repeat Yourself or simply decently respect the opinions of mankind.

The goal of these pages is not to be a model of concision, maximizing entertainment value per word, or to preach to a choir by elegantly repeating a conclusion. Rather, I am attempting to explain things to my future self, who is intelligent and interested, but has forgotten. What I am doing is explaining to myself why I decided what I did, and noting down everything I found interesting about it for future reference. I hope my other readers, whoever they may be, might find the topic as interesting as I found it, and the essay useful or at least entertaining, but the intended audience is my future self.

Development

I hate the water that thinks that it boiled itself on its own. I hate the seasons that think they cycle naturally. I hate the sun that thinks it rose on its own.

Sodachi Oikura, Owarimonogatari (Sodachi Riddle, Part One)

This site is everything I felt worth writing that didn’t fit somewhere like Wikipedia and hadn’t already been written. I never expected to write so much; but I discovered that once I had a hammer, nails were everywhere, and that supply creates its own demand3.

Long Site

The Internet is self destructing paper. A place where anything written is soon destroyed by rapacious competition and the only preservation is to forever copy writing from sheet to sheet faster than they can burn. If it’s worth writing, it’s worth keeping. If it can be kept, it might be worth writing…If you store your writing on a third party site like Blogger, Livejournal or even on your own site, but in the complex format used by blog/wiki software du jour you will lose it forever as soon as hypersonic wings of Internet labor flows direct people’s energies elsewhere. For most information published on the Internet, perhaps that is not a moment too soon, but how can the muse of originality soar when immolating transience brushes every feather?

Julian Assange (“Self destructing paper”, 2006-12-05)

One of my personal interests is applying the idea of the Long Now. What and how do you write a personal site with the long-term in mind? We live most of our lives in the future, and the actuarial tables give me until the 2070–2080s, excluding any benefits from caloric restriction/intermittent fasting or projects like SENS. It is a commonplace in science fiction4 that longevity would cause widespread risk aversion. But on the other hand, it could do the opposite: the longer you live, the more long-shots you can afford to invest in. Someone with a timespan of 70 years has reason to protect against black swans—but also time to look for them.5 It’s worth noting that old people make many short-term choices, as reflected in increased suicide rates and reduced investment in education or new hobbies, and this is not due solely to the ravages of age but to the proximity of death—the HIV-infected (but otherwise in perfect health) act similarly short-term.6

What sort of writing could you create if you worked on it (be it ever so rarely) for the next 60 years? What could you do if you started now?7

Keeping the site running that long is a challenge, and leads to the recommendations for Resilient Haskell Software: 100% FLOSS software8, open standards for data, textual human-readability, avoiding external dependencies910, and staticness11.

Preserving the content is another challenge. Keeping the content in a DVCS like git protects against file corruption and makes it easier to mirror the content; regular backups12 help. I have taken additional measures: WebCitation has archived most pages and almost all external links; the Internet Archive is also archiving pages & external links13. (For details, read Archiving URLs.)

One could continue in this vein, devising ever more powerful & robust storage methods (perhaps combine the DVCS with forward error correction through PAR2, à la bup), but what is one to fill the storage with?
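PAR2 uses Reed–Solomon codes, which can repair many damaged blocks at once; the much simpler idea underneath can be illustrated with a single XOR parity block (the RAID-5-style scheme, swapped in here for illustration; it is not PAR2's format). In this minimal Python sketch, which assumes the data is split into equal-sized blocks and whose function names are hypothetical, XORing all surviving blocks with the parity block rebuilds any one lost block:

```python
from functools import reduce
from typing import List, Optional


def split_blocks(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal-sized blocks, zero-padding the end."""
    if k < 1:
        raise ValueError("need at least one block")
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\0")
    return [padded[i * size:(i + 1) * size] for i in range(k)]


def xor_blocks(blocks: List[bytes]) -> bytes:
    """XOR equal-length byte strings together, byte by byte."""
    if len({len(b) for b in blocks}) > 1:
        raise ValueError("blocks must all be the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_parity(blocks: List[bytes]) -> bytes:
    """The parity block is the XOR of every data block."""
    return xor_blocks(blocks)


def recover(blocks: List[Optional[bytes]], parity: bytes) -> List[bytes]:
    """Rebuild the single missing block (marked None) from the others plus parity."""
    missing = [i for i, b in enumerate(blocks) if b is None]
    if len(missing) != 1:
        raise ValueError("single XOR parity can rebuild exactly one lost block")
    survivors = [b for b in blocks if b is not None]
    rebuilt = xor_blocks(survivors + [parity])
    return [rebuilt if b is None else b for b in blocks]
```

A real setup would simply run the par2 tool over the repository; the point of the sketch is only that a little redundancy, computed in advance, turns some kinds of data loss into a recoverable error.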

Long Content

What has been done, thought, written, or spoken is not culture; culture is only that fraction which is remembered.

Gary Taylor (The Clock of the Long Now; emphasis added)14

‘Blog posts’ might be the answer. But I have read blogs for many years and most blog posts are the triumph of the hare over the tortoise. They are meant to be read by a few people on a weekday in 2004 and never again, and are quickly abandoned—and perhaps as Assange says, not a moment too soon. (But isn’t that sad? Isn’t it a terrible ROI for one’s time?) On the other hand, the best blogs always seem to be building something: they are rough drafts—works in progress15. So I did not wish to write a blog. Then what? More than just “evergreen content”, what would constitute Long Content as opposed to the existing culture of Short Content? How does one live in a Long Now sort of way?16

It’s shocking to find how many people do not believe they can learn, and how many more believe learning to be difficult. Muad’Dib knew that every experience carries its lesson.17

My answer is that one uses such a framework to work on projects that are too big to work on normally or too tedious. (Conscientiousness is often lacking online or in volunteer communities18 and many useful things go undone.) Knowing your site will survive for decades to come gives you the mental wherewithal to tackle long-term tasks like gathering information for years, and such persistence can be useful19—if one holds onto every glimmer of genius for years, then even the dullest person may look a bit like a genius himself20. (Even experienced professionals can only write at their peak for a few hours a day—usually first thing in the morning, it seems.) Half the challenge of fighting procrastination is the pain of starting—I find when I actually get into the swing of working on even dull tasks, it’s not so bad. So this suggests a solution: never start. Merely have perpetual drafts, which one tweaks from time to time. And the rest takes care of itself. I have a few examples of this:

Dual N-Back FAQ:

When I read in Wired in 2008 that the obscure working memory exercise called dual n-back (DNB) had been found to increase IQ substantially, I was shocked. IQ is one of the most stubborn properties of one’s mind, one of the most fragile21, the hardest to affect positively, but also one of the most valuable traits one could have22; if the technique panned out, it would be huge. Unfortunately, DNB requires a major time investment (as in, half an hour daily), which would be a bargain—if it delivers. So, to do DNB or not?

Questions of great import like this are worth studying carefully. The wheels of academia grind exceeding slow, and only a fool expects unanimous answers from fields like psychology. Any attempt to answer the question ‘is DNB worthwhile?’ will require years and cover a breadth of material. This FAQ on DNB is my attempt to cover that breadth over those years.

Neon Genesis Evangelion notes:

I have been discussing NGE since 2004. The task of interpreting Eva is very difficult; the source works themselves are a major time-sink23, and there are thousands of primary, secondary, and tertiary works to consider—personal essays, interviews, reviews, etc. The net effect is that many Eva fans ‘know’ certain things about Eva, such as End of Evangelion not being a grand ‘screw you’ statement by Hideaki Anno or that the TV series was censored, but they no longer have proof. Because each fan remembers a different subset, they have irreconcilable interpretations. (Half the value of the page for me is having a place to store things I’ve said in countless fora which I can eventually turn into something more systematic.)

To compile claims from all those works, to dig up forgotten references, to scroll through microfilms, buy issues of defunct magazines—all this is enough work to shatter the heart of the stoutest salaryman. Which is why I began years ago and expect not to finish for years to come. (Finishing by 2020 seems like a good prediction.)

Cloud Nine: Years ago I was reading the papers of the economist Robin Hanson. I recommend his work highly; even if his ideas are wrong, they are imaginative and some of the finest speculative fiction I have read. (Except they were non-fiction.) One night I had a dream in which I saw in a flash a medieval city run in part on Hansonian grounds: a steampunk version of his futarchy. A city must have another city as a rival, and soon I had remembered the strange ’90s idea of assassination markets, which was easily tweaked to work in a medieval setting. Finally, between them, was one of my favorite proposals, Buckminster Fuller’s cloud nine megastructure.

I wrote several drafts but always lost them. Sad24 and discouraged, I abandoned it for years. This fear of losing my work leads straight into the next example.

A Book reading list:

Once, I didn’t have to keep reading lists. I simply went to the school library shelf where I left off and grabbed the next book. But then I began reading harder books, and they would cite other books, and sometimes would even have horrifying lists of hundreds of other books I ought to read (‘bibliographies’). I tried remembering the most important ones but quickly forgot. So I began keeping a book list on paper. I thought I would throw it away in a few months when I read them all, but somehow it kept growing and growing. I didn’t trust computers to store it before25, but now I do, and it lives on in digital form (currently on Goodreads—because they have export functionality). With it, I can track how my interests evolved over time26, and what I was reading at the time. I sometimes wonder if I will read them all even by 2070.

What is next? So far the pages will persist through time, and they will gradually improve over time. But a truly Long Now approach would be to make them be improved by time—make them more valuable the more time passes. (Stewart Brand remarks in The Clock of the Long Now that a group of monks carved thousands of scriptures into stone, hoping to preserve them for posterity—but posterity would value far more a carefully preserved collection of monk feces, which would tell us countless valuable things about important phenomena like global warming.)

One idea I am exploring is adding long-term predictions like the ones I make on PredictionBook.com. Many27 pages explicitly or implicitly make predictions about the future. As time passes, predictions would be validated or falsified, providing feedback on the ideas.28

For example, the Evangelion essay’s paradigm implies many things about the future movies in Rebuild of Evangelion29; The Melancholy of Kyon is an extended prediction30 of future plot developments in The Melancholy of Haruhi Suzumiya series; Haskell Summer of Code has suggestions about what makes good projects, which could be turned into predictions by applying them to predict success or failure when the next Summer of Code choices are announced. And so on.
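Once such predictions start resolving, they need some score. One common, generic choice (not necessarily what PredictionBook or any particular site uses) is the Brier score: the mean squared difference between the stated probability and the outcome, counted as 1 if the prediction came true and 0 if not. Lower is better. A minimal Python sketch, with hypothetical names:

```python
from typing import Optional, Sequence, Tuple

# (stated probability that the prediction comes true, outcome; None = not yet resolved)
Prediction = Tuple[float, Optional[bool]]


def brier_score(predictions: Sequence[Prediction]) -> float:
    """Mean squared error between stated probabilities and resolved 0/1 outcomes."""
    resolved = []
    for p, outcome in predictions:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
        if outcome is not None:
            resolved.append((p, 1.0 if outcome else 0.0))
    if not resolved:
        raise ValueError("no predictions have resolved yet")
    return sum((p - o) ** 2 for p, o in resolved) / len(resolved)
```

Because unresolved predictions are skipped, the score can be recomputed each time a page is revisited and more predictions mature; a forecaster who always says 50% scores 0.25.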

I don’t think “Long Content” is simply for working on things which are equivalent to a “monograph” (a work which attempts to be an exhaustive exposition of all that is known—and what has been recently discovered—on a single topic), although monographs clearly would benefit from such an approach. If I wrote a short essay cynically remarking on, say, Al Gore, predicting he’d sell out, registered some predictions, and came back 20 years later to see how it worked out, I would consider this “Long Content” (it gets more interesting with time, as the predictions reach maturation); but one couldn’t consider this a “monograph” in any ordinary sense of the word.

One of the ironies of this approach is that as a transhumanist, I assign non-trivial probability to the world undergoing massive change during the 21st century due to any of a number of technologies such as artificial intelligence (such as mind uploading31) or nanotechnology; yet here I am, planning as if I and the world were immortal.

I personally believe that one should “think Less Wrong and act Long Now”, if you follow me. I diligently do my daily spaced-repetition review and n-backing; I carefully design my website and writings to last decades, actively think about how to write material that improves with time, and work on writings that will not be finished for years (if ever). It’s a bit schizophrenic since both are totalized worldviews with drastically conflicting recommendations about where to invest my time. It’s a case of high discount rates versus low discount rates; and one could fairly accuse me of committing the sunk cost fallacy, but then, I’m not sure that the sunk cost fallacy is a fallacy (certainly, I have more to show for my wasted time than most people).

The Long Now views its proposals like the Clock and the Long Library and seedbanks as insurance—in case the future turns out to be surprisingly unsurprising. I view these writings similarly. If Ray Kurzweil’s most ambitious predictions turn out right and the Singularity happens by 2050 or so, then much of my writing will be moot, but I will have all the benefits of said Singularity; if the Singularity never happens or ultimately pays off in a very disappointing way, then my writings will be valuable to me. By working on them, I hedge my bets.

Finding My Ideas

To the extent I personally have any method for ‘getting started’ on writing something, it’s to pay attention whenever I find myself thinking, “how irritating that there’s no good webpage/Wikipedia article on X” or “I wonder if Y” or “has anyone done Z” or “huh, I just realized that A!” or “this is the third time I’ve had to explain this, jeez.”

The DNB FAQ started because I was irritated people were repeating themselves on the dual n-back mailing list; the modafinil article started because it was a pain to figure out where one could order modafinil; the trio of Death Note articles (Anonymity, Ending, Script) all started because I had an amusing thought about information theory; the Silk Road 1 page was commissioned after I groused about how deeply sensationalist & shallow & ill-informed all the mainstream media articles on the Silk Road drug marketplace were (similarly for Bitcoin is Worse is Better); my Google survival analysis was based on thinking it was a pity that Arthur’s Guardian analysis was trivially & fatally flawed; and so on and so forth.

None of these seems special to me. Anyone could’ve compiled the DNB FAQ; anyone could’ve kept a list of online pharmacies where one could buy modafinil; someone tried something similar to my Google shutdown analysis before me (and the fancier statistics were all standard tools). If I have done anything meritorious with them, it was perhaps simply putting more work into them than someone else would have; to quote Teller:

“I think you’ll see what I mean if I teach you a few principles magicians employ when they want to alter your perceptions…Make the secret a lot more trouble than the trick seems worth. You will be fooled by a trick if it involves more time, money and practice than you (or any other sane onlooker) would be willing to invest.”

“My partner, Penn, and I once produced 500 live cockroaches from a top hat on the desk of talk-show host David Letterman. To prepare this took weeks. We hired an entomologist who provided slow-moving, camera-friendly cockroaches (the kind from under your stove don’t hang around for close-ups) and taught us to pick the bugs up without screaming like preadolescent girls. Then we built a secret compartment out of foam-core (one of the few materials cockroaches can’t cling to) and worked out a devious routine for sneaking the compartment into the hat. More trouble than the trick was worth? To you, probably. But not to magicians.”

Besides that, I think after a while writing/research can be a virtuous circle or autocatalytic. If one were to look at my repo statistics, one would see that I haven’t always been writing as much. What seems to happen is that as I write more:

I learn more tools

eg. I learned basic meta-analysis in R to answer what all the positive & negative n-back studies summed to, but then I was able to use it for iodine; I learned linear models for analyzing MoR reviews but now I can use them anywhere I want to, like in my Touhou draft material.

The “Feynman method” has been facetiously described as “find a problem; think very hard; write down the answer”, but Gian-Carlo Rota gives the real one:

Richard Feynman was fond of giving the following advice on how to be a genius. You have to keep a dozen of your favorite problems constantly present in your mind, although by and large they will lay in a dormant state. Every time you hear or read a new trick or a new result, test it against each of your twelve problems to see whether it helps. Every once in a while there will be a hit, and people will say: “How did he do it? He must be a genius!”

I internalize a habit of noticing interesting questions that flit across my brain

eg. in March 2013 while meditating: “I wonder if more doujin music gets released when unemployment goes up and people may have more spare time or fail to find jobs? Hey! That giant Touhou music torrent I downloaded, with its 45000 songs all tagged with release year, could probably answer that!” (One could argue that these questions probably should be ignored and not investigated in depth—Teller again—nevertheless, this is how things work for me.)

if you aren’t writing, you’ll ignore useful links or quotes; but if you stick them in small asides or footnotes as you notice them, eventually you’ll have something bigger.

I grab things I see on Google Alerts & Scholar, PubMed, Reddit, Hacker News, my RSS feeds, books I read, and note them somewhere until they amount to something. (An example would be my slowly accreting citations on IQ and economics.)

people leave comments, ping me on IRC, send me emails, or leave anonymous messages, all of which help

Some examples of this come from my most popular page, on Silk Road 1:

an anonymous message led me to investigate a vendor in depth and ponder the accusation leveled against them; I wrote it up and gave my opinions and thus I got another short essay to add to my SR page which I would not have had otherwise (and I think there’s a <20% chance that in a few years this will pay off and become a very interesting essay).

CMU’s Nicolas Christin, who wrote a paper by scraping SR for many months and giving all sorts of overall statistics, emailed me to point out I was citing inaccurate figures from the first version of his paper. I thanked him for the correction and while I was replying, mentioned I had a hard time believing his paper’s claims about the extreme rarity of scams on SR as estimated through buyer feedback. After some back and forth, and my suggesting specific mechanisms by which the estimates could be positively biased, he was able to check his database and confirmed that there was at least one very large omission of scams in the scraped data and there was probably a general undersampling; so now I have a more accurate feedback estimate for my SR page (important for estimating risk of ordering) and he said he’ll acknowledge me in the/a paper, which is nice.
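The “sum” of positive and negative n-back studies mentioned in the first item is what a meta-analysis computes. The text does not say which model was used in R; as a hedged illustration, here is the simplest one in Python, a fixed-effect inverse-variance pooled estimate, where each study’s effect size is weighted by 1/SE² so that precise studies count for more. Heterogeneous literatures are usually better served by a random-effects model (eg. via R’s metafor package), which adds a between-study variance term; the function name is hypothetical.

```python
import math
from typing import Sequence, Tuple


def fixed_effect_meta(effects: Sequence[float],
                      std_errors: Sequence[float]) -> Tuple[float, float, Tuple[float, float]]:
    """Inverse-variance fixed-effect pooling: returns (estimate, SE, 95% CI)."""
    if not effects or len(effects) != len(std_errors):
        raise ValueError("need one standard error per effect size")
    if any(se <= 0 for se in std_errors):
        raise ValueError("standard errors must be positive")
    weights = [1.0 / se ** 2 for se in std_errors]  # precision of each study
    total = sum(weights)
    pooled = sum(w * d for w, d in zip(weights, effects)) / total
    pooled_se = math.sqrt(1.0 / total)
    z = 1.96  # normal quantile for a two-sided 95% interval
    return pooled, pooled_se, (pooled - z * pooled_se, pooled + z * pooled_se)
```

For example, with effects of 1.0 and 0.0 and standard errors of 1.0 and 0.5, the weights are 1 and 4, so the pooled estimate is 0.2: the more precise null result dominates.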

Information Organizing

Occasionally people ask how I manage information and read things.

For quotes or facts which are very important, I employ spaced repetition by adding them to Mnemosyne.

I keep web clippings in Evernote; I also excerpt from research papers & books, and miscellaneous sources. This is useful for targeted searches when I remember a fact but not where I learned it, and for storing things which I don’t want to memorize but which have no logical home in my website or LW or elsewhere. It is also helpful for writing my book reviews and the monthly newsletter, as I can read through my book excerpts to remind myself of the highlights, and at the end of the month review clippings from papers/webpages to find good things to reshare which I was too busy to share at the time, or whose importance I was unsure of. I don’t make any use of more complex Evernote features.

I periodically back up my Evernote using the Linux client Nixnote’s export feature. (I made sure there was a working export method before I began using Evernote, and use it only as long as Nixnote continues to work.)

My workflow for dealing with PDFs, as of late 2014, is:

if necessary, jailbreak the paper using Libgen or a university proxy, then upload a copy to Dropbox, named year-author.pdf

read the paper, making excerpts as I go

store the metadata & excerpts in Evernote

if useful, integrate into Gwern.net with its title/year/author metadata, adding a local fulltext copy if the paper had to be jailbroken, otherwise rely on my custom archiving setup to preserve the remote URL

hence, any future searches for the filename / title / key contents should result in hits either in my Evernote or Gwern.net
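The year-author.pdf naming step is simple enough to automate. A minimal Python sketch, assuming the first author’s surname is available as a string; the function names, the lowercasing and accent-stripping, and the “-2”, “-3” suffix for a second paper by the same author in the same year are all illustrative choices, not taken from the workflow above:

```python
import re
import unicodedata
from typing import Set


def paper_filename(year: int, first_author_surname: str) -> str:
    """Build a 'year-author.pdf' name, eg. 2013 + 'Christin' -> '2013-christin.pdf'."""
    # Decompose accented letters and drop the accents, so 'Müller' -> 'Muller'.
    ascii_name = (unicodedata.normalize("NFKD", first_author_surname)
                  .encode("ascii", "ignore").decode("ascii"))
    slug = re.sub(r"[^a-z0-9]+", "", ascii_name.lower())
    if not slug:
        raise ValueError(f"cannot make a filename from {first_author_surname!r}")
    return f"{year}-{slug}.pdf"


def unique_filename(name: str, existing: Set[str]) -> str:
    """Append -2, -3, ... if the name is already taken (hypothetical convention)."""
    stem, ext = name.rsplit(".", 1)
    candidate, n = name, 2
    while candidate in existing:
        candidate = f"{stem}-{n}.{ext}"
        n += 1
    return candidate
```

A predictable name is what makes the last step work: searching for the filename later finds the same paper in Evernote, Dropbox, and the site.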

Web pages are archived & backed up by my custom archiving setup. This is intended mostly for fixing dead links (eg. to recover the fulltext of the original URL of an Evernote clipping).

I don’t have any special book reading techniques. For really good books I excerpt from each chapter and stick the quotes into Evernote.

I store insights and thoughts in various pages as parenthetical comments, footnotes, and appendices. If they don’t fit anywhere, I dump them in Notes.

Larger masses of citations and quotes typically get turned into pages.

I make heavy use of RSS subscriptions for news. For that, I am currently using Liferea. (Not that I’m hugely thrilled about it. Google Reader was much better.)

For projects and followups, I use reminders in Google Calendar.

For recording personal data, I automate as much as possible (eg. Zeo and arbtt) and I make a habit of the rest—getting up in the morning is a great time to build a habit of recording data because it’s a time of habits like eating breakfast and getting dressed.

Hence, to refind information, I use a combination of Google, Evernote, grep (on the Gwern.net files), occasionally Mnemosyne, and a good visual memory.

As far as writing goes, I do not use note-taking software or things like FreeMind or org-mode—not that I think they are useless but I am worried about whether they would ever repay the large upfront investments of learning/tweaking or interfere with other things. Instead, I occasionally compile outlines of articles from comments on LW/Reddit/IRC, keep editing them with stuff as I remember them, search for relevant parts, allow little thoughts to bubble up while meditating, and pay attention to when I am irritated at people being wrong or annoyed that a particular topic hasn’t been written down yet.

My Experience of Writing

That’s not writing; that’s just typewriting.

Truman Capote on the Beat Generation (1959)

What is it like to write, for me? Maybe a bit different than for you.

Why don’t you write? Since I find it easy to write, I’ve been puzzled by the many people I know who have worthwhile things they could write, and are fully capable of ‘writing’ them in the sense of explaining them to me (often in text-based chat!) in sufficient detail that it could be turned into a serviceable blog post—but who won’t.

Typical mind. Asking people about their experience of writing, and what bars them from taking that critical step even when they agree the topic is worthwhile & they would like to have the writeup, I’ve come to realize my experience of writing is different from theirs.

Blank-page tyranny. For them, the problem with longform writing is not a lack of material (my default assumption), but the writing being a school-like exercise in pain & tedium, as they struggle to fill up the blank page and assemble their atomic details into a coherent output: they struggle to take their pile of individual playing cards, and build a house of cards (which could topple at the first mistake).

Text earworms. For me, this is almost never a problem because my experience of writing is radically different.

So, I would divide my writing into two types: ‘incremental’/‘routine’/occasional, and ‘big bang’: Incremental writing is the ordinary kind of writing where I might add a quote or reference, or copyedit something, or write a small forgettable response to someone. Most people do not find incremental writing to be hard, and may do quite a lot of it, whether in email or social media or work. ‘Big bang’ writing, on the other hand, is the more valuable sort where I sit down and bang out a long comment or even an entire essay like “Why Not To Write A Book” in a single sitting; this is the sort of writing that people are impressed by and find themselves unable to do, but which I do fairly regularly. How?

I rarely struggle with assembling my fragments into a whole, because the whole instead inflicts itself on me. Much of my writing is like a musical earworm or intrusive thought: I experience the rumination as a mental voice reciting a paragraph, looping indefinitely, until I suppress it or get distracted (but then it may return, of its own volition). The paragraph might be a dialogue32, a comment in reply to someone specific, or on a general topic, a tweet, an email, or it might be the key paragraph for an essay I’ve been musing for a while—anything, really. The paragraph usually starts as a tangled rat’s-nest of fragments, allusions, citations, and parenthetical digressions, and gradually cleans itself up into something more readable. (One can always tell when I wrote something in a rush, without the benefit of revision to flatten it out, because of the nested parentheticals and tangents.) No one else seems to operate the same way.

Transcription. The voice is clearly myself, and does not feel like any kind of muse or external force, any more than a musical earworm or phrase feels “alien” to you; but it is effortless and involuntary33, and hard to make it go away, which can be annoying. I am apparently so disagreeable that my brain can’t stop arguing with itself. So I put down my writings into my website in order to forget the writings in my head. Thus, ‘big bang’ writing is easy—I am simply an amanuensis for the voice in my head. The key text has repeated itself so often that I can write it in a sitting. Indeed, these paragraphs have repeated themselves so many times that I am barely even thinking about this as I write it. (Instead I am thinking about a different topic: the value of writing a book vs blog posts.) I don’t experience the ‘tyranny of the blank page’, so much as the ‘tyranny of transcription’.

90% done. The real pain comes in the editing process afterwards, where I must laboriously stitch together the fragments and copyedit and add references and markup. (The voice is no help there, having gone silent once the loop has been written down.) The ‘incremental’ phase of writing is frustrating enough that I generally avoid writing as long as I can, in the hopes that the voice will give up and go away.34 If I cannot outwait the recitation, if it keeps returning over enough periods, or some specific reason comes up (like an interested reader), then I may bother to write it down (rather than do something more fun, like read new research papers).

No free lunch. But that is only my experience of writing. Even if it does not feel like effortful thinking, and often like simply writing down something ‘obvious’, the voice comes from somewhere, and is not divine inspiration. The underlying reality must be the usual one: writing is like gardening. One patiently tends one’s garden, seeding and watering and pruning, and green shoots come up, and one day, one may behold a sudden blossoming, which one may cut and put in a vase to be seen by all. Or not, and let it wither and fall.

Writing Checklist

It turns out that writing essays (technical or philosophical) is a lot like writing code—there are so many ways to err that you need a process with as much automation as possible. My current checklist for finishing an essay:

syntax:

do a Pandoc/Firefox preview for visible major formatting problems

linkchecker for dead links

inserting into archive queue

references:

for academic hyperlinks, include a tooltip with the title & author metadata

for any papers cited: either link full text, provide a local full text, or submit a request on /r/Scholar or LessWrong; for books, insert an Amazon link if a copy can’t be hosted

language:

check readability level (eg. Flesch-Kincaid)

check for use of the word “significant”/“significance” and insert “[statistically]” as appropriate (to disambiguate between effect sizes and statistical-significance; this common confusion is one reason for “statistical-significance considered harmful”)

convert English units to metric

proselint checks

content:

mention any use of PredictionBook in my essay on forecasting & prediction markets

mention any use of Fermi estimates in Fermi calculations

arrange for notifications of future results (when deciding to do long-term followups, eg. dual n-back research):

if using statistics:

after publication, publicize; currently:

Hacker News

Reddit

LessWrong (and further sites as appropriate)

Twitter
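The readability check in the language section can be approximated in a few lines. This minimal Python sketch computes the Flesch–Kincaid grade level, 0.39 × (words/sentence) + 11.8 × (syllables/word) − 15.59, using a crude vowel-group syllable counter. That counter is an assumption: dedicated tools use dictionaries or better heuristics, so their scores will differ somewhat. The function names are hypothetical.

```python
import re


def count_syllables(word: str) -> int:
    """Crude estimate: count vowel groups, discounting a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1  # 'make' has one syllable, but 'table' keeps two
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """0.39 * words/sentence + 11.8 * syllables/word - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("need at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59
```

The result is meant to approximate a US school grade level; very short, monosyllabic text can even score below zero.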

Markdown Checker

I’ve found that many errors in my writing can be caught by some simple scripts, which I’ve compiled into a shell script, markdown-lint.sh.
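markdown-lint.sh itself is not reproduced here. The following is a hypothetical Python sketch of the same general idea, using a few of the literal patterns and sites listed below: scan the Markdown source and the rendered HTML for strings that should almost never appear, flag links to blacklisted domains, and flag bare uses of “significant”/“significance”. The function name, the pattern subsets, and the hostnames assumed for Google+, ResearchGate, NBER, and PNAS are illustrative assumptions.

```python
import re
from typing import List, Tuple

# Literal strings that usually indicate a Markdown slip in the source.
SOURCE_PATTERNS = ["(www", ")www"]
# A subset of strings that should rarely survive into the rendered HTML.
HTML_PATTERNS = ["\\frac", "\\times", "(http", ")http", "[http", "]http",
                 "[^", "^]", "<!--", "-->", "$title$", "$description$"]
# Assumed hostnames for the unreliable sites named in the text.
BLACKLISTED_DOMAINS = ["plus.google.com", "researchgate.net", "nber.org", "pnas.org"]

URL_HOST = re.compile(r"https?://([^/\s)\]>]+)")
# 'significant'/'significance' not already qualified as statistical.
BARE_SIGNIFICANT = re.compile(
    r"(?<!statistically[- ])(?<!\[statistically\] )\bsignifican(?:t|ce)\b",
    re.IGNORECASE)


def lint(markdown: str, html: str) -> List[Tuple[str, str]]:
    """Return (kind, offending text) pairs; an empty list means no warnings."""
    problems: List[Tuple[str, str]] = []
    problems += [("source", p) for p in SOURCE_PATTERNS if p in markdown]
    problems += [("html", p) for p in HTML_PATTERNS if p in html]
    for host in URL_HOST.findall(markdown):
        host = host.lower()
        if any(host == d or host.endswith("." + d) for d in BLACKLISTED_DOMAINS):
            problems.append(("domain", host))
    problems += [("language", m.group(0)) for m in BARE_SIGNIFICANT.finditer(markdown)]
    return problems
```

A shell version of the same idea is essentially a series of grep -F calls over the source and output files; either way, most of the value lies in the pattern lists, which can grow each time a new kind of mistake turns up.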

My linter does:

checks for corrupted non-text binary files

checks a blacklist of domains which are either dead (eg. Google+) or have a history of being unreliable (eg. ResearchGate, NBER, PNAS); such links need36 to either be fixed, pre-emptively mirrored, or removed entirely.

a special case is PDFs hosted on IA; the IA is reliable, but I try to rehost such PDFs so they’ll show up in Google/Google Scholar for everyone else.

Broken syntax: I’ve noticed that when I make Markdown syntax errors, they tend to be predictable and show up either in the original Markdown source, or in the rendered HTML. Two common source errors:

"(www" ")www"

And the following should rarely show up in the final rendered HTML:

"\frac" "\times" "(http" ")http" "[http" "]http" " _ " "[^" "^]" "<!--" "-->" "<-- " "<-" "->" "$title$" "$description$" "$author$" "$tags$" "$category$"

Similarly, I sometimes slip up in writing image/document links, so any link starting with https://gwern.net, ~/wiki/, or /home/gwern/ is probably wrong. There are a few Pandoc-specific issues that should be checked for too, like duplicate footnote names, images without separating newlines, or unescaped dollar signs (which can accidentally lead to sentences being rendered as TeX).

A final pass with htmltidy finds many errors which slip through, like incorrectly-escaped URLs.

Flag dangerous language: Imperial units are deprecated, but so too is the misleading language of NHST statistics (if one must talk of “significance”, I try to flag it as “statistically-significant” to warn the reader). I also avoid some other dangerous words like “obvious” (if it really is, why do I need to say it?).

Bad habits:

proselint (with some checks disabled because they play badly with Markdown documents)

Another static warning is checking for too-long lines (most common in code blocks, although sometimes broken indentation will cause this), which will cause browsers to use scrollbars; for this I’ve written a Pandoc script, and another for a bad habit of mine: too-long footnotes.

duplicate and hidden-PDF URLs: a URL being linked multiple times is sometimes an error (too much copy-paste or insufficiently edited sections); PDF URLs should receive a visual annotation warning the reader it’s a PDF, but the CSS rules, which catch cases like .pdf$, don’t cover cases where the host quietly serves a PDF anyway, so all URLs are checked. (A URL which is a PDF can be made to trigger the PDF rule by appending #pdf.)

broken links are detected with linkchecker. The best time to fix broken links is when you’re already editing a page.

While this throws many false positives, those are easy to ignore, and the script fights bad habits of mine while giving me much greater confidence that a page doesn’t have any merely technical issues that screw it up (without requiring me to constantly reread pages every time I modify them, lest an accidental typo while making an edit breaks everything).
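Most of these checks are plain substring or regex searches. Here is a minimal Python sketch, not the actual markdown-lint.sh. The substring lists and link prefixes are the ones quoted above. The function names, the Markdown-link regex, the crude “significant” test, and the assumption that the PDF CSS rule matches hrefs ending in `.pdf` or `#pdf` are all illustrative. The content type passed to `needs_pdf_marker` would come from an HTTP request, which is omitted here.

```python
import re
from collections import Counter

# Substrings quoted above as common mistakes in the Markdown source.
SOURCE_ERRORS = ["(www", ")www"]

# Substrings which should rarely survive into the rendered HTML.
HTML_ERRORS = ["\\frac", "\\times", "(http", ")http", "[http", "]http", " _ ",
               "[^", "^]", "<!--", "-->", "<-- ", "<-", "->",
               "$title$", "$description$", "$author$", "$tags$", "$category$"]

# Link prefixes which are probably wrong in image/document links.
BAD_LINK_PREFIXES = ("https://gwern.net", "~/wiki/", "/home/gwern/")

MARKDOWN_LINK = re.compile(r"\]\(([^)\s]+)\)")
SIGNIFICANCE = re.compile(r"\bsignifican(?:t|ce)\b", re.IGNORECASE)


def find_substrings(text, needles):
    """(line number, needle) for every needle occurring on a line."""
    return [(lineno, needle)
            for lineno, line in enumerate(text.splitlines(), start=1)
            for needle in needles if needle in line]


def find_bad_links(markdown):
    """Markdown link targets which start with a suspicious prefix."""
    return [t for t in MARKDOWN_LINK.findall(markdown) if t.startswith(BAD_LINK_PREFIXES)]


def find_unqualified_significance(text):
    """Crude: lines saying 'significant'/'significance' with no 'statistical' anywhere."""
    return [lineno for lineno, line in enumerate(text.splitlines(), start=1)
            if SIGNIFICANCE.search(line) and "statistical" not in line.lower()]


def duplicate_urls(markdown):
    """URLs linked more than once (possible copy-paste or under-edited sections)."""
    counts = Counter(MARKDOWN_LINK.findall(markdown))
    return sorted(url for url, n in counts.items() if n > 1)


def needs_pdf_marker(url, content_type):
    """True if the server reports a PDF but an ends-in-.pdf/#pdf rule would miss it."""
    is_pdf = content_type.split(";")[0].strip().lower() == "application/pdf"
    return is_pdf and not url.endswith((".pdf", "#pdf"))


if __name__ == "__main__":
    md = ("See [a](https://gwern.net/doc/x.pdf) and (www.example.com).\n"
          "The effect was significant; see [b](https://x.org/p) and [c](https://x.org/p).")
    print(find_substrings(md, SOURCE_ERRORS))
    print(find_bad_links(md))
    print(find_unqualified_significance(md))
    print(duplicate_urls(md))
    print(needs_pdf_marker("https://x.org/download?id=3", "application/pdf"))
```

`HTML_ERRORS` only makes sense against rendered HTML, since every Markdown link in the source contains `(http`.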

Anonymous Feedback

Back in November 2011, lukeprog posted “Tell me what you think of me” where he described his use of a Google Docs form for anonymous receipt of textual feedback or comments. Typically, most forms of communication are non-anonymous, or if they are anonymous, they’re public. One can set up pseudonyms and use those for private contact, but it’s not always that easy, and is definitely a series of trivial inconveniences (if anonymous feedback is not solicited, one has to feel it’s important enough to do and violate implicit norms against anonymous messages; one has to set up an identity; one has to compose and send off the message, etc.).

I thought it was a good idea to try out, and on 2011-11-08, I set up my own anonymous feedback form and stuck it in the footer of all pages on Gwern.net where it remains to this day. I did wonder if anyone would use the form, especially since I am easy to contact via email, use multiple sites like Reddit or LessWrong, and even my Disqus comments allowed anonymous comments—so who, if anyone, would be using this form? I scheduled a followup in 2 years on 2013-11-30 to review how the form fared.

754 days, 2.884m page views, and 1.350m unique visitors later, I have received 116 pieces of feedback (mean of 24.8k visits per feedback). I categorize them as follows in descending order of frequency:

Corrections, problems (technical or otherwise), suggested edits: 34

Praise: 31

Question/request (personal, tech support, etc.): 22

Misc (eg. gibberish, socializing, Japanese): 13

Criticism: 9

News/suggestions: 5

Feature request: 4

Request for cybering: 1

Extortion: 1 (see my blackmail page dealing with the September 2013 incident)

Some submissions cover multiple angles (they can be quite long), sometimes people double-submitted or left it blank, etc., so the numbers won’t sum to 116.

In general, a lot of the corrections were usable and fixed issues of varying importance, from typos to the entire site’s CSS being broken due to being uploaded with the wrong MIME type. One of the news/suggestion feedbacks was very valuable, as it led to writing the Silk Road mini-essay “A Mole?” A lot of the questions were a waste of my time; I’d say half related to Tor/Bitcoin/Silk-Road. (I also got an irritating number of emails from people asking me to, say, buy LSD or heroin off SR for them.) The feature requests were usually for a better RSS feed, which I tried to oblige by starting the Changelog page. The cybering and extortion were amusing, if nothing else. The praise was good for me mentally, as I don’t interact much with people.

I consider the anonymous feedback form to have been a success, I’m glad lukeprog brought it up on LW, and I plan to keep the feedback form indefinitely.

Feedback Causes

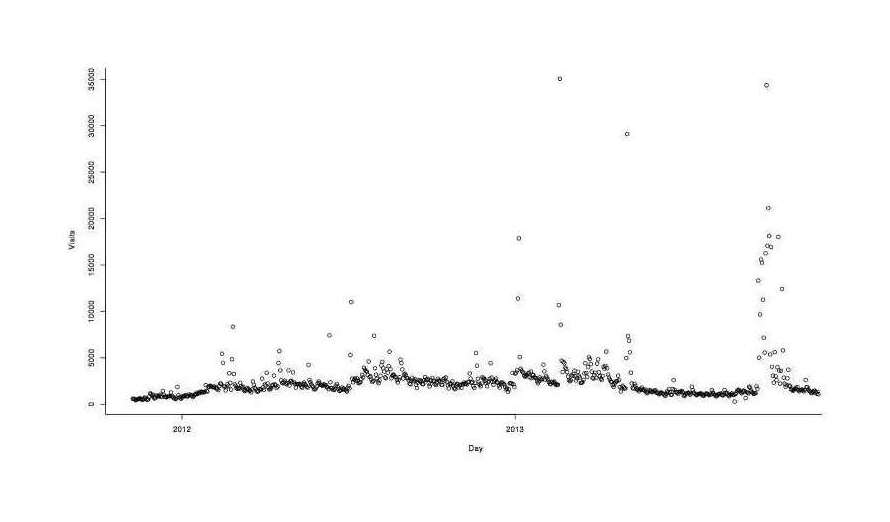

One thing I wondered is whether feedback was purely a function of traffic (the more visits, the more people who could see the link in the footer and decide to leave a comment), or more related to time (perhaps people returning regularly and eventually being emboldened or noticing something to comment on). So I compiled daily hits, combined with the feedback dates, and looked at a graph of hits:

Hits over time for Gwern.net

The hits are heavily skewed by Hacker News & Reddit traffic spikes, and probably should be log transformed. Then I did a logistic regression on hits, log hits, and a simple time index:

```r
# Daily traffic & feedback; columns assumed to be Day (Date), Feedback (logical), Visits (integer):
feedback <- read.csv("https://gwern.net/doc/traffic/2013-gwern-gwernnet-anonymousfeedback.csv",
                     colClasses=c("Date","logical","integer"))
plot(Visits ~ Day, data=feedback)
# Simple linear time index, to separate a trend over time from the effect of traffic:
feedback$Time <- 1:nrow(feedback)
# Logistic regression on whether feedback arrived that day; step() drops terms which don't improve AIC:
summary(step(glm(Feedback ~ log(Visits) + Visits + Time, family=binomial, data=feedback)))
# ...
# Coefficients:
#              Estimate Std. Error z value Pr(>|z|)
# (Intercept) -7.363507   1.311703   -5.61  2.0e-08
# log(Visits)  0.749730   0.173846    4.31  1.6e-05
# Time        -0.000881   0.000569   -1.55     0.12
#
# (Dispersion parameter for binomial family taken to be 1)
#
#     Null deviance: 578.78  on 753  degrees of freedom
# Residual deviance: 559.94  on 751  degrees of freedom
# AIC: 565.9
```

The logged hits work out better than regular hits, and survive into the simplified model. And the traffic influence seems much larger than the time variable (which is, curiously, negative).
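Because the model works on the log-odds scale, the log(Visits) coefficient is easiest to read as an odds ratio. Doubling a day’s visits adds 0.75 × log(2) to the log-odds, which multiplies the odds of feedback that day by 2^0.75 ≈ 1.68, holding Time fixed. The Python sketch below only plugs in the coefficients printed above and does not refit anything. The example of 4,000 visits on day 377 is a hypothetical input.

```python
import math

# Coefficients copied from the R output above.
INTERCEPT = -7.363507
B_LOG_VISITS = 0.749730
B_TIME = -0.000881


def feedback_probability(visits: float, day: int) -> float:
    """Predicted P(feedback that day) = logistic(intercept + b1*log(visits) + b2*day)."""
    logit = INTERCEPT + B_LOG_VISITS * math.log(visits) + B_TIME * day
    return 1 / (1 + math.exp(-logit))


# Doubling visits adds b1*log(2) to the log-odds, i.e. multiplies the odds by 2**b1.
odds_ratio_doubling = 2 ** B_LOG_VISITS

if __name__ == "__main__":
    print(f"odds ratio for doubled traffic: {odds_ratio_doubling:.2f}")
    print(f"P(feedback | 4,000 visits, day 377): {feedback_probability(4000, 377):.3f}")
```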

Technical Aspects

Popularity

On a semi-annual basis, since 2011, I review Gwern.net website traffic using Google Analytics; although what most readers value is not what I value, I find it motivating to see total traffic statistics reminding me of readers (writing can be a lonely and abstract endeavour), and useful to see what are major referrers.

Gwern.net typically enjoys steady traffic in the 50–100k range per month, with occasional spikes from social media, particularly Hacker News; over the first decade (2010–2020), there were 7.98m pageviews by 3.8m unique users.

Colophon

Hosting

Gwern.net is served by Amazon S3 through the CloudFlare CDN. (Amazon charges less for bandwidth and disk space than NearlyFreeSpeech.net, an old hosting company I originally used, although one loses all the capabilities offered by Apache’s .htaccess, and Brotli compression is difficult so must be handled by CloudFlare; total costs may turn out to be a wash and I will consider the switch to Amazon S3 a success if it can bring my monthly bill to <$10 or <$120 a year.)

From October 2010 to June 2012, the site was hosted on NFSN; its specific niche is controversial material and activist-friendly pricing. Its libertarian owners cast a jaundiced eye on takedown requests, and pricing is pay-as-you-go. I like the former aspect, but the latter sold me on NFSN. Before I stumbled on NFSN (someone mentioned it offhandedly while chatting), I was getting ready to pay $10–15 a month ($120–180 yearly) to Linode. Linode’s offerings are overkill since I do not run dynamic websites or something like Haskell.org (with wikis and mailing lists and darcs repositories), but I didn’t know a good alternative. NFSN’s pricing meant that I paid for usage rather than large flat fees. I put in $32 to cover registering Gwern.net until 2014, and then another $10 to cover bandwidth & storage price. DNS aside, I was billed $8.27 for October-December 2010; DNS included, January-April 2011 cost $10.09. $10 covered months of Gwern.net for what I would have paid Linode in 1 month! In total, my 2010 costs were $39.44 (bill archive); my 2011 costs were $118.32 ($9.86 a month; archive); and my 2012 costs through June were $112.54 ($21 a month; archive); sum total: $270.3.

The switch to Amazon S3 hosting is complicated by my simultaneous addition of CloudFlare as a CDN; my total June 2012 Amazon bill is $1.62, with $0.19 for storage. CloudFlare claims it covered 17.5GB of 24.9GB total bandwidth, so the $1.41 represents 30% of my total bandwidth; multiplying 1.41 by 3 gives 4.23, and my hypothetical non-CloudFlare S3 bill is ~$4.5. Even at $10, this was well below the $21 monthly cost at NFSN. (The traffic graph indicates that June 2012 was a relatively quiet period, but I don’t think this eliminates the factor of 5.) From June 2012 to June 2013, my Amazon bills totaled $60, which is reasonable except for the steady increase ($1.62/$3.27/$2.43/$2.45/$2.88/$3.43/$4.12/$5.36/$5.65/$5.49/$4.88/$8.48/$9.26), being primarily driven by out-bound bandwidth (in June 2013, the $9.26 was largely due to the 75GB transferred—and that was after CloudFlare dealt with 82GB); $9.26 is much higher than I would prefer since that would be >$110 annually. This was probably due to all the graphics I included in the “Google shutdowns” analysis, since it returned to a more reasonable $5.14 on 42GB of traffic in August. September, October, November and December 2013 saw high levels maintained at $7.63/$12.11/$5.49/$8.75, so it’s probably a new normal. 2014 entailed new costs related to EC2 instances & S3 bandwidth spikes due to hosting a multi-gigabyte scientific dataset, so bills ran $8.51/$7.40/$7.32/$9.15/$26.63/$14.75/$7.79/$7.98/$8.98/$7.71/$7/$5.94. 2015 & 2016 were similar: $5.94/$7.30/$8.21/$9.00/$8.00/$8.30/$10.00/$9.68/$14.74/$7.10/$7.39/$8.03/$8.20/$8.31/$8.25/$9.04/$7.60/$7.93/$7.96/$9.98/$9.22/$11.80/$9.01/$8.87.
2017 saw costs increase due to one of my side-projects, aggressively increasing fulltexting of Gwern.net by providing more papers & scanning cited books, only partially offset by changes like lossy optimization of images & converting GIFs to WebMs: $12.49/$10.68/$11.02/$12.53/$11.05/$10.63/$9.04/$11.03/$14.67/$15.52/$13.12/$12.23 (total: $144.01). In 2018, I continued fulltexting: $13.08/$14.85/$14.14/$18.73/$18.88/$15.92/$15.64/$15.27/$16.66/$22.56/$23.59/$25.91 (total: $215.23).
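Since the bills are quoted month by month, the totals and the June 2012 extrapolation are easy to re-derive. This small Python sketch uses only numbers from the text; the dictionary keys and function names are illustrative. Summing the listed bills gives $59.32 for June 2012 through June 2013, $144.01 for 2017, and $215.23 for 2018. Scaling $1.41 by the exact uncached share, (24.9 − 17.5)/24.9 ≈ 29.7%, rather than by a round factor of 3, gives ~$4.74 of bandwidth before storage.

```python
# Monthly Amazon bills in USD, copied from the text above.
BILLS = {
    "2012-06 to 2013-06": [1.62, 3.27, 2.43, 2.45, 2.88, 3.43, 4.12,
                           5.36, 5.65, 5.49, 4.88, 8.48, 9.26],
    "2017": [12.49, 10.68, 11.02, 12.53, 11.05, 10.63,
             9.04, 11.03, 14.67, 15.52, 13.12, 12.23],
    "2018": [13.08, 14.85, 14.14, 18.73, 18.88, 15.92,
             15.64, 15.27, 16.66, 22.56, 23.59, 25.91],
}


def total(bills: list[float]) -> float:
    """Sum a list of monthly bills, rounded to cents."""
    return round(sum(bills), 2)


def without_cdn(bandwidth_cost: float, cdn_gb: float, total_gb: float) -> float:
    """Scale the S3 bandwidth charge for the uncached share up to all traffic."""
    uncached_share = (total_gb - cdn_gb) / total_gb
    return bandwidth_cost / uncached_share


if __name__ == "__main__":
    for period, bills in BILLS.items():
        print(f"{period}: ${total(bills):.2f}")
    print(f"June 2012 bandwidth without CloudFlare: ${without_cdn(1.41, 17.5, 24.9):.2f}")
```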

For 2019, I made a determined effort to host more things, including whole websites like the OKCupid archives or rotten.com, and to include more images/videos (the StyleGAN anime faces tutorial alone must be easily 20MB+ just for images) and it shows in how my bandwidth costs exploded: $26.49/$37.56/$37.56/$37.56/$25/$25/$25/$25/$77.91/$124.45/$74.32/$79.19. I began considering a move of Gwern.net to my Hetzner dedicated server which has cheap bandwidth + ~6TB space, combined with upgrading my Cloudflare CDN to keep site latency in check (even at $20/month, it’s still far cheaper than AWS S3 bandwidth).

In 2020, I did so, merging the hosting of Gwern.net, ThisWaifuDoesNotExist, Danbooru20xx, miscellaneous ML datasets & models, all onto a single Hetzner dedicated server, for ~$50/month. With uncapped bandwidth, I could be much more aggressive about hosting files and automatically archiving webpage snapshots. This was highly satisfactory for the next 2 years, but the growth of Danbooru20xx eventually exceeded the drive space and I relocated to another server with >20TB space, costing ~$60. (I didn’t need the full 20TB immediately but that left a large safety margin and I was thinking of creating some additional datasets like Danbooru20xx, using Derpibooru & e621—the goal being to eventually create a single model handling all kinds of illustration-based fandoms with much higher quality than the default of everyone creating their own small underpowered model on just their personal interest.)

Source

The revision history is kept in git; individual Markdown page sources can be read by appending .md to their URL (eg. for this page). The site infrastructure is available on Github.

Size

As of 2022-11-08, the source of Gwern.net is composed of >443 text files with >4.38m words or >31MB; this includes my writings & documents I have transcribed into Markdown, but excludes images, PDFs, HTML mirrors, source code, archives, infrastructure (such as tag-directories), popup and the revision history. With those included and everything compiled to the static37 HTML, the site is >72GB. The source repository contains >16,629 patches (this is an under-count, as the repository’s creation on 2008-09-26 included already-written material); the infrastructure repository, >5,807.

Design

License

This site is licensed under the Creative Commons public domain (CC-0) license.

I believe the public domain license reduces FUD and dead-weight loss38, encourages copying (LOCKSS), gives back (however little) to Free Software/Free Content, and costs me nothing39.

Appendix

Benford’s Law

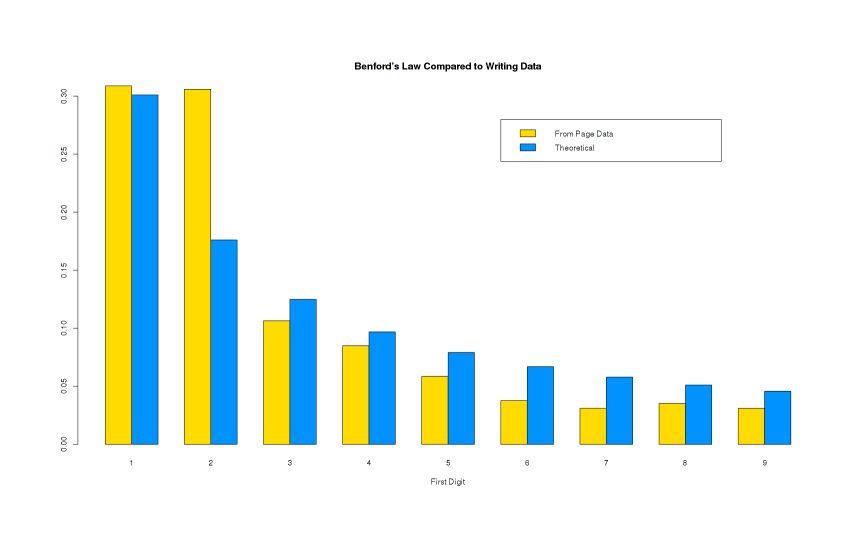

Does Gwern.net follow the famous Benford’s law?

A quick analysis suggests that it sort of does, except for the digit 2, probably due to the many citations to research from the past 2 decades (>2000 AD).

In March 2013 I wondered, upon seeing a mention of Benford’s law: “if I extracted all the numbers from everything I’ve written on Gwern.net, would it satisfy Benford’s law?” It seems the answer is… almost. I generate the list of numbers by running a Haskell program to parse digits, commas, and periods; and then I process it with shell utilities.40 This can then be read in R to run a chi-squared test confirming lack of fit (p ≈ 0) and generate this comparison of the data & Benford’s law41:

Histogram/barplot of parsed numbers vs predicted

There’s a clear resemblance for everything but the digit ‘2’, which then blows the fit to heck. I have no idea why 2 is overrepresented—it may be due to all the citations to recent academic papers which would involve numbers starting with ‘2’ (2002, 2010, 2013…) and cause a double-count in both the citation and filename, since if I look in the docs/ fulltext folder, I see 160 files starting with ‘1’ but 326 starting with ‘2’. But this can’t be the entire explanation since ‘2’ has 20.3k entries while to fit Benford, it needs to be just 11.5k—leaving a gap of ~9k numbers unexplained. A mystery.
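Benford’s law predicts that a number’s leading significant digit is d with probability log10(1 + 1/d), so ‘1’ should lead ~30.1% of numbers and ‘2’ only ~17.6%. The sketch below is a hypothetical Python stand-in for the Haskell-and-shell pipeline and R test described above, not a reproduction of them. The number regex (digits with embedded commas and periods) and the use of the first non-zero digit are assumptions.

```python
import math
import re
from collections import Counter
from typing import Optional

# Assumed number syntax: a digit followed by digits, commas, or periods ("1,350", "0.75").
NUMBER = re.compile(r"\d[\d,.]*")


def benford_expected(d: int) -> float:
    """Benford probability that the leading significant digit is d (1-9)."""
    return math.log10(1 + 1 / d)


def leading_digit(token: str) -> Optional[int]:
    """First non-zero digit of a numeric token, or None for all-zero tokens like '0.00'."""
    for ch in token:
        if ch in "123456789":
            return int(ch)
    return None


def leading_digit_counts(text: str) -> Counter:
    """Count the leading significant digits of every number found in the text."""
    counts = Counter()
    for token in NUMBER.findall(text):
        d = leading_digit(token)
        if d is not None:
            counts[d] += 1
    return counts


def chi_squared(counts: Counter) -> float:
    """Pearson chi-squared statistic against Benford's law (8 degrees of freedom)."""
    n = sum(counts.values())
    if n == 0:
        raise ValueError("no numbers to test")
    return sum((counts[d] - n * benford_expected(d)) ** 2 / (n * benford_expected(d))
               for d in range(1, 10))


if __name__ == "__main__":
    text = "In 2013, 1,350 visitors left 116 comments on 0.75 of the pages; 9."
    counts = leading_digit_counts(text)
    for d in range(1, 10):
        print(d, counts[d], round(benford_expected(d), 3))
    print("chi-squared:", round(chi_squared(counts), 2))
```

With tens of thousands of numbers, even a single over-represented digit like the ‘2’ above will tend to drive the p-value toward 0.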

Gennadi Sosonko, pg19 of Russian Silhouettes, on why he wrote his book of biographical sketches of great Soviet chess players. (As Richardson asks (Vectors 1.0, 2001): “25. Why would we write if we’d already heard what we wanted to hear?”)↩︎

One danger of such an approach is that you will simply engage in confirmation bias, and build up an impressive-looking wall of citations that is completely wrong but effective in brainwashing yourself. The only solution is to be diligent to include criticism—so even if you do not escape brainwashing, at least your readers have a chance. The Autobiography of Charles Darwin, Darwin 1902:

I had, also, during many years followed a golden rule, namely, that whenever a published fact, a new observation or thought came across me, which was opposed to my general results, to make a memorandum of it without fail and at once; for I had found by experience that such facts and thoughts were far more apt to escape from the memory than favourable ones. Owing to this habit, very few objections were raised against my views which I had not at least noticed and attempted to answer.↩︎

“It is only the attempt to write down your ideas that enables them to develop.” –Wittgenstein (pg109, Recollections of Wittgenstein); “I thought a little [while in the isolation tank], and then I stopped thinking altogether…incredible how idleness of body leads to idleness of mind. After 2 days, I’d turned into an idiot. That’s the reason why, during a flight, astronauts are always kept busy.” –Oriana Fallaci, quoted in Rocket Men: The Epic Story of the First Men on the Moon by Craig Nelson. See also Beatriz Flamini.↩︎

Such as Larry Niven’s Known Space universe; consider the introduction to the chronologically last story in that setting, “Safe at Any Speed” (Tales of Known Space).↩︎

“If the individual lived five hundred or one thousand years, this clash (between his interests and those of society) might not exist or at least might be considerably reduced. He then might live and harvest with joy what he sowed in sorrow; the suffering of one historical period which will bear fruit in the next one could bear fruit for him too.”↩︎

From Richard Posner’s Aging and Old Age:

One way to distinguish empirically between aging effects and proximity-to-death effects would be to compare, with respect to choice of occupation, investment, education, leisure activities, and other activities, elderly people on the one hand with young or middle-aged people who have truncated life expectancies but are in apparent good health, on the other. For example, a person newly infected with the AIDS virus (HIV) has roughly the same life expectancy as a 65-year-old and is unlikely to have, as yet, [major] symptoms. The conventional human-capital model implies that, after correction for differences in income and for other differences between such persons and elderly persons who have the same life expectancy (a big difference is that the former will not have pension entitlements to fall back upon), the behavior of the two groups will be similar. It does appear to be similar, so far as investing in human capital is concerned; the truncation of the payback period causes disinvestment. And there is a high suicide rate among HIV-infected persons (even before they have reached the point in the progression of the disease at which they are classified as persons with AIDS), just as there is, as we shall see in chapter 6, among elderly persons.↩︎

John F. Kennedy, 1962:

I am reminded of the story of the great French Marshal Lyautey, who once asked his gardener to plant a tree. The gardener objected that the tree was slow-growing and would not reach maturity for a hundred years. The Marshal replied, “In that case, there is no time to lose, plant it this afternoon.”↩︎

In the long run, the utility of all non-Free software approaches zero. All non-Free software is a dead end.

These dependencies can be subtle. Computer archivist Jason Scott writes of URL shortening services that:

URL shorteners may be one of the worst ideas, one of the most backward ideas, to come out of the last five years. In very recent times, per-site shorteners, where a website registers a smaller version of its hostname and provides a single small link for a more complicated piece of content within it… those are fine. But these general-purpose URL shorteners, with their shady or fragile setups and utter dependence upon them, well. If we lose TinyURL or bit.ly, millions of weblogs, essays, and non-archived tweets lose their meaning. Instantly. To someone in the future, it’ll be like everyone from a certain era of history, say ten years of the 18th century, started speaking in a one-time pad of cryptographic pass phrases. We’re doing our best to stop it. Some of the shorteners have been helpful, others have been hostile. A number have died. We’re going to release torrents on a regular basis of these spreadsheets, these code breaking spreadsheets, and we hope others do too.

Joshua Schachter remarks (and the comments provide even more examples) further on URL shorteners:

But the biggest burden falls on the clicker, the person who follows the links. The extra layer of indirection slows down browsing with additional DNS lookups and server hits. A new and potentially unreliable middleman now sits between the link and its destination. And the long-term archivability of the hyperlink now depends on the health of a third party. The shortener may decide a link is a Terms Of Service violation and delete it. If the shortener accidentally erases a database, forgets to renew its domain, or just disappears, the link will break. If a top-level domain changes its policy on commercial use, the link will break. If the shortener gets hacked, every link becomes a potential phishing attack.

A static text-source site has so many advantages for Long Content that I consider its use almost a no-brainer.

By nature, they compile most content down to flat standalone textual files, which allow recovery of content even if the original site software has bit-rotted or the source files have been lost or the compiled versions cannot be directly used in new site software: one can parse them with XML tools or with quick hacks or by eye.

Site compilers generally require dependencies to be declared up front, and the approach makes explicitness and content easy, but dynamic interdependent components difficult, all of which discourages creeping complexity and hidden state.

A static site can be archived into a tarball of files which will be readable as long as web browsers exist (or afterwards if the HTML is reasonably clean), but it could be difficult to archive a CMS like WordPress or Blogspot (the latter doesn’t even provide the content in HTML—it only provides a rat’s-nest of inscrutable JavaScript files which then download the content from somewhere and display it somehow; indeed, I’m not sure how I would automate archiving of such a site if I had to; I would need some sort of headless browser to run the JS and serialize the final resulting DOM, possibly with some scripting of mouse/keyboard actions).

With a CMS, by contrast, the content is often not available locally, or is stored in opaque binary formats rather than text (if one is lucky, it will at least be a database), both of which make it difficult to port content to other website software; you won’t have the necessary pieces, or they will be in wildly incompatible formats.

Static sites are usually written in a reasonably standardized markup language such as Markdown or LaTeX, in distinction to blogs which force one through WYSIWYG editors or invent their own markup conventions, which is yet another barrier: parsing a possibly ill-defined language.

The lowered sysadmin efforts (who wants to be constantly cleaning up spam or hacks on their WordPress blog?) are a final advantage: lower running costs make it more likely that a site will stay up rather than cease to be worth the hassle.

Static sites are not appropriate for many kinds of websites, but they are appropriate for websites which are content-oriented, do not need interactivity, expect to migrate website software several times over coming decades, want to enable archiving by oneself or third parties (“lots of copies keeps stuff safe”), and to gracefully degrade after loss or bitrot.↩︎

Such as burning the occasional copy onto read-only media like DVDs.↩︎

One can’t be sure; the IA is fed by Alexa, and Alexa doesn’t guarantee pages will be spidered & preserved if one goes through their request form.↩︎

I am diligent in backing up my files, in periodically copying my content from the cloud, and in preserving viewed Internet content; why do I do all this? Because I want to believe that my memories are precious, that the things I saw and said are valuable; “I want to meet them again, because I believe my feelings at that time were real.” My past is not trash to me, used up & discarded.↩︎

Examples of such blogs:

Eliezer Yudkowsky’s contributions to LessWrong were the rough draft of a philosophy book (or two)

John Robb’s Global Guerrillas led to his Brave New War: The Next Stage of Terrorism and the End of Globalization

Kevin Kelly’s Technium was turned into What Technology Wants.

An example of how not to do it would be Robin Hanson’s Overcoming Bias blog; it is stuffed with fascinating citations & sketches of ideas, but they never go anywhere with the exception of his mind emulation economy posts which were eventually published in 2016 as The Age of Em. Just his posts on medicine would make a fascinating essay or just list—but he has never made one. (“Showing That You Care: The Evolution of Health Altruism” would be a natural home for many of his posts’ contents, but will never be updated.) Kevin Simler stepped up to help write The Elephant in the Brain: Hidden Motives in Everyday Life, and that seems to be the closest we’ll ever get.↩︎

“Kevin Kelly Answers Your Questions”, 2011-09-06:

[Question:] “One purpose of the Long Now Clock is to encourage long-term thinking. Aside from the Clock, though, what do you think people can do in their everyday lives to adopt or promote long-term thinking?”

KK: “The 10,000-year Clock we are building in the hills of west Texas is meant to remind us to think long-term, but learning how to do that as an individual is difficult. Part of the difficulty is that as individuals we [are] constrained to short lives, and are inherently not long-term. So part of the skill in thinking long-term is to place our values and energies in ways that transcend the individual—either in generational projects, or in social enterprises.”

“As a start I recommend engaging in a project that will not be complete in your lifetime. Another way is to require that your current projects exhibit some payoff that is not immediate; perhaps some small portion of it pays off in the future. A third way is to create things that get better, or run up in time, rather than one that decays and runs down in time. For instance a seedling grows into a tree, which has seedlings of its own. A program like Heifer Project which gives breeding pairs of animals to poor farmers, who in turn must give one breeding pair away themselves, is an exotropic scheme, growing up over time.”↩︎

‘Princess Irulan’, Frank Herbert, Dune↩︎

GiveWell reports in “A good volunteer is hard to find” that of volunteers motivated enough to email them asking to help, something like <20% will complete the GiveWell test assignment and render meaningful help. It is difficult to make any good use of volunteers (an observation quietly echoed by others in the nonprofit world). Such persons would have been well-advised to have simply donated some money. I have long noted that many of the most popular pages on Gwern.net could have been written by anyone and drew on no unique talents of mine; I have on several occasions received offers to help with the DNB FAQ—none of which have resulted in actual help.↩︎

An old sentiment; consider “A drop hollows out the stone” (Ovid, Epistles) or Thomas Carlyle’s “The weakest living creature, by concentrating his powers on a single object, can accomplish something. The strongest, by dispensing his over many, may fail to accomplish anything. The drop, by continually falling, bores its passage through the hardest rock. The hasty torrent rushes over it with hideous uproar, and leaves no trace behind.” (The life of Friedrich Schiller, 1825)↩︎

“Ten Lessons I wish I had been Taught”, Gian-Carlo Rota:

Richard Feynman was fond of giving the following advice on how to be a genius. You have to keep a dozen of your favorite problems constantly present in your mind, although by and large they will lay in a dormant state. Every time you hear or read a new trick or a new result, test it against each of your twelve problems to see whether it helps. Every once in a while there will be a hit, and people will say: ‘How did he do it? He must be a genius!’↩︎

IQ is sometimes used as a proxy for health, like height, because it sometimes seems like any health problem will damage IQ. Didn’t get much protein as a kid? Congratulations, your nerves will lack myelination and you will literally think slower. Missing some iodine? Say goodbye to <10 points! If you’re anemic or iron-deficient, that might increase to <15 points. Have tapeworms? There go some more points, and maybe centimeters off your adult height, thanks to the worms stealing nutrients from you. Have a rough birth and suffer a spot of hypoxia before you began breathing on your own? Tough luck, old bean. It is very easy to lower IQ; you can do it with a baseball bat. It’s the other way around that’s nearly impossible.↩︎

For details on the many valuable correlates of the Conscientiousness personality factor, see Conscientiousness and online education.↩︎

25 episodes, 6 movies, >11 manga volumes—just to stick to the core works.↩︎

Mibu no Tadamine, KKS XII: 609:

More than my life

What I most regret

Is

A dream unfinished

And awakening.↩︎

As with Cloud Nine; I accidentally erased everything on a routine basis while messing around with Windows.↩︎

For example, I notice I am no longer deeply interested in the occult. Hopefully this is because I have grown mentally and recognize it as rubbish; I would be embarrassed if when I died it turned out my youthful self had a better grasp on the real world.↩︎

Some pages don’t have any connection to predictions. It’s possible to make predictions for some border cases like the terrorism essays (death tolls, achievements of particular groups’ policy goals), but what about the short stories or poems? My imagination fails there.↩︎

Thinking of predictions is good mental discipline; we should always be able to cash out our beliefs in terms of the real world, or know why we cannot. Unfortunately, humans being humans, we need to actually track our predictions—all of them—lest our predicting degenerate into entertainment like political punditry.↩︎

Dozens of theories have been put forth. I have been collecting & making predictions; and am up to 219. It will be interesting to see how the movies turn out.↩︎

I have 2 predictions registered about the thesis on PB.com: 1 reviewer will accept my theory by 2016 and the light novels will finish by 2015.↩︎

See Robin Hanson, “If Uploads Come First”↩︎

I avoid writing these down. Dialogue is a deceptively difficult genre outside of pedagogy like inquiry-based learning/“discovery fiction”, and most dialogues would be better off as “classic style” essays.↩︎

Sometimes, when quasi-lucid-dreaming, I can ‘read’ a website, especially Twitter, and the text is genuinely there (because I can transcribe some of them upon waking) and written in all variety of styles just like the real thing. An example:

Still the best poem not about God 🦋🥅

Fire is the only path between ice.

This is original, but I didn’t write it, so who did? Eerily, the dream feels completely effortless and devoid of any control or intent—the rest of my brain must be busy babbling away predicting arbitrary Internet text as if it were GPT-3, yet like a human confabulating, there is no conscious awareness of that effort.

Reading websites/Twitter is not the only place this shows up—I can also ‘listen’ to music while daydreaming, napping, or dreaming, but only if I start from music in my collection that I’ve listened to many times before. It’s much harder to visualize arbitrary images or sounds. So I wonder if this is a sort of Tetris effect, where specific domains become so well-modeled that the self-supervised predictive parts of your brain can ‘leak’ or become ‘autonomous’ when the usual conscious processes idle enough?↩︎

Critics sometimes mock me & Scott Alexander & Eliezer Yudkowsky for verbosity unto graphomania/hypergraphia/logorrhea.

While this is wrong as a psychiatric diagnosis—we can all easily just not write, experience no difficulties in ordinary life due to the desire to write, and are not compelled to do so, much less to the extreme of scribbling nonsense on paper (eg. Charles Crumb)—there is probably something to the weaker claim that there is a spectrum of writing-motivation (of which hypergraphia is the pathological extreme), that we are far above average on it, and that this is the ur-fascination which critically keeps us in the “writing pipeline” (from which other people leak out).

Since we don’t know what it’s like to be other people, it is easy to misunderstand how writing feels to other people—I am reminded of Donald Knuth & Chuck Moore’s difficulty understanding how the rest of us struggle to read or write computer programs.↩︎

I originally used last file modification time but this turned out to be confusing to readers, because I so regularly add or update links or add new formatting features that the file modification time was usually quite recent, and so it was meaningless.↩︎

Reactive archiving is inadequate because such links may die before my crawler gets to them, may not be archivable, or will just expose readers to dead links for an unacceptably long time before I’d normally get around to them.↩︎

I like the static site approach to things; it tends to be harder to use and more restrictive, but in exchange it yields better performance & leads to fewer hassles or runtime issues. The static model of compiling a single monolithic site directory also lends itself to testing: any shell script or CLI tool can be easily run over the compiled site to find potential bugs (which has become increasingly important as site complexity & size have grown to the point that eyeballing the occasional page is inadequate).↩︎
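To make the static-site testing idea concrete, here is a minimal sketch, not the checks this site actually runs. The function name `check_site`, the compiled-site directory passed to it, and both checks (zero-byte HTML pages, and Markdown link syntax like `](https://` surviving into the generated HTML) are hypothetical choices for illustration. It assumes a `find` supporting `-empty` and a `grep` supporting `-r`/`--include`, as in GNU or BSD userlands.

```shell
#!/bin/sh
# Hypothetical lint pass over a compiled static site (a sketch, not this site's real test suite).
# Prints one line per suspect file; returns 0 if nothing was found, 1 otherwise.

# ERE for Markdown link syntax such as "[text](https://...": "[]]" matches a literal "]"
# and "[(]" a literal "(". Finding this in generated HTML suggests unconverted source text.
md_link_pattern='[]][(]https?://'

check_site() {
    site_dir=$1
    # Check 1: zero-byte HTML pages (often a compile step that silently failed).
    # Check 2: HTML pages still containing raw Markdown link syntax.
    report=$(
        find "$site_dir" -type f -name '*.html' -empty -exec printf 'EMPTY: %s\n' {} +
        grep -rlE --include='*.html' "$md_link_pattern" "$site_dir" | sed 's/^/RAW MARKDOWN LINK: /'
    )
    if [ -z "$report" ]; then
        return 0
    fi
    printf '%s\n' "$report"
    return 1
}
```

Usage: `check_site _site` prints one line per suspect file and returns nonzero if anything turned up, so it could gate a deploy script; `_site` is a placeholder for wherever the site generator writes its output. Because the site is just a directory of files, more checks are simply more `find`/`grep` lines inside the same command substitution.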

PD increases economic efficiency through—if nothing else—making works easier to find. Tim O’Reilly says that “Obscurity is a far greater threat to authors and creative artists than piracy.” If that is so, then that means that difficulty of finding works reduces the welfare of artists and consumers, because both forgo a beneficial trade (the artist loses any revenue and the consumer loses any enjoyment). Even small increases in inconvenience make big differences.↩︎

Not that I could sell anything on this wiki; and if I could, I would polish it as much as possible, giving me fresh copyright.↩︎

We write a short Haskell program as part of a pipeline:

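```shell
cat > ~/number.hs <<'EOF'
import qualified Data.Text as T

-- Split the input on every character that cannot be part of a number
-- (anything other than a digit, comma, or period), drop the empty pieces,
-- and print each remaining numeric token on its own line.
main :: IO ()
main = interact (T.unpack . T.unlines . filter (not . T.null)
                 . T.split (not . (`elem` "0123456789,.")) . T.pack)
EOF

# Extract every number from the Markdown sources, strip the separators, keep only the
# first digit, and discard tokens that start with 0 (or have nothing left at all).
find ~/wiki/ -type f -name "*.md" -exec cat "{}" \; | runghc ~/number.hs | sort | tr -d ',' | tr -d '.' | cut -c 1 | sed -e 's/0$//' -e '/^$/d' > ~/number.txt
```

Graph, then test: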

```r
numbers <- read.table("number.txt")
ta <- table(numbers$V1); ta
#     1     2     3     4     5     6     7     8     9
# 20550 20356  7087  5655  3900  2508  2075  2349  2068
## cribbing exact R code from http://www.math.utah.edu/~treiberg/M3074BenfordEg.pdf
sta <- sum(ta)
pb <- sapply(1:9, function(x) log10(1+1/x)); pb
m <- cbind(ta/sta, pb)
colnames(m) <- c("Observed Prop.", "Theoretical Prop.")
barplot(rbind(ta/sta, pb/sum(pb)), beside = TRUE, col = rainbow(7)[c(2,5)], xlab = "First Digit")
title("Benford's Law Compared to Writing Data")
legend(16, .28, legend = c("From Page Data", "Theoretical"), fill = rainbow(7)[c(2,5)], bg = "white")
chisq.test(ta, p = pb)
#
#   Chi-squared test for given probabilities
#
# data:  ta
# X-squared = 9331, df = 8, p-value < 2.2e-16
```
↩︎