Which books on GoodReads are most difficult to finish? Estimating proportions in December 2019 gives an entirely different result than absolute counts.

What books are hardest for a reader who starts them to finish, and most likely to be abandoned? I scrape a crowdsourced tag,

abandoned, from the GoodReads book social network on 2019-12-09 to estimate conditional probability of being abandoned.The default GoodReads tag interface presents only raw counts of tags, not counts divided by total ratings (=reads). This conflates popularity with probability of being abandoned: a popular but rarely-abandoned book may have more

abandonedtags than a less popular but often-abandoned book. There is also residual error from the winner’s curse where books with fewer ratings are more mis-estimated than popular books. I fix that to see what more correct rankings look like.Correcting for both changes the top-5 ranking completely, from (raw counts):

to (shrunken posterior proportions):

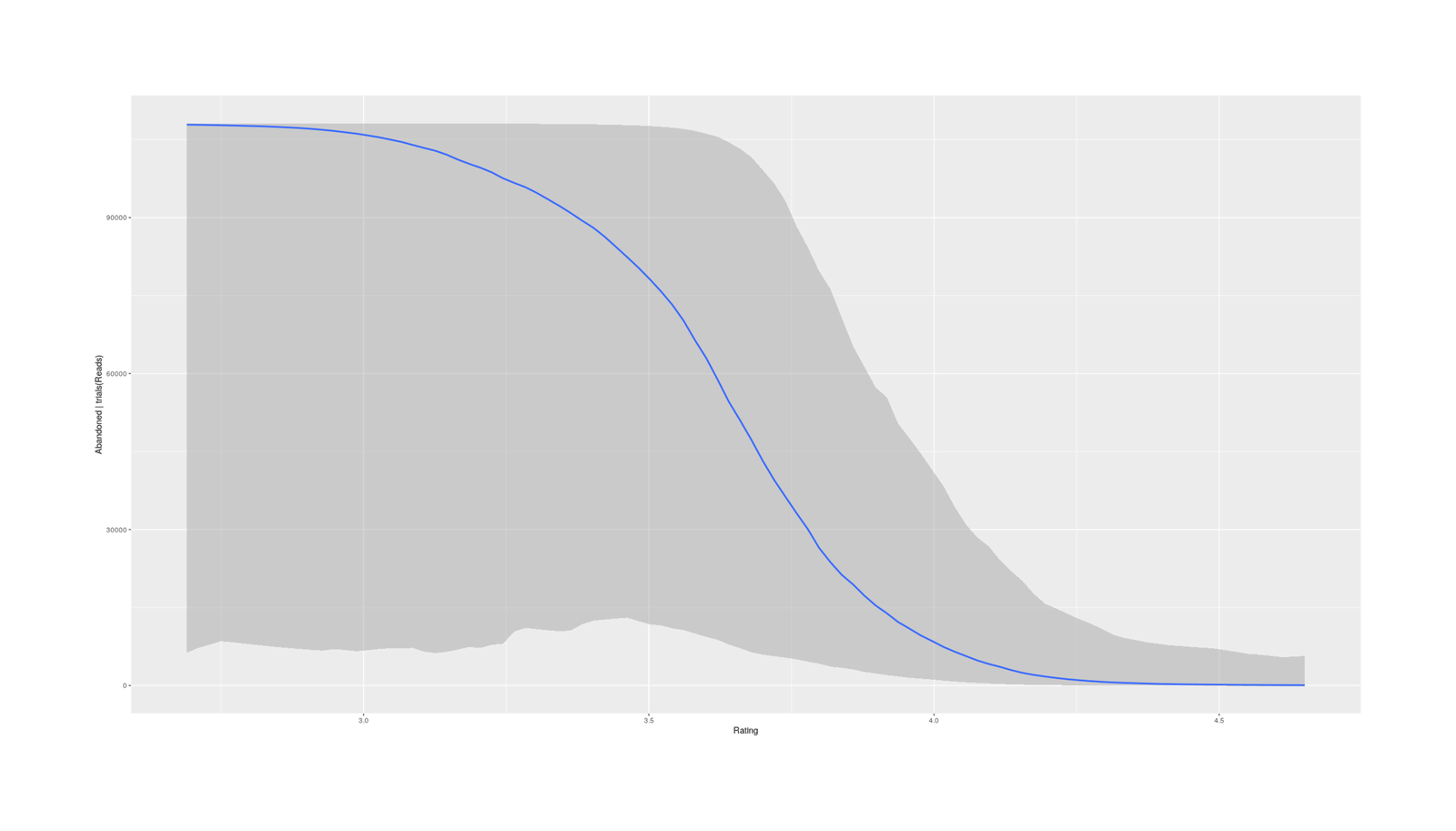

I also consider a model adjusting for covariates (author/average-rating/year), to see what books are most surprisingly often-abandoned given their pedigrees & rating etc. Abandon rates increase the newer a book is, and the lower the average rating.

Adjusting for those, the top-5 are:

The Casual Vacancy, J. K. Rowling

Books at the top of the adjusted list appear to reflect a mix of highly-popular authors changing genres, and ‘prestige’ books which are highly-rated but a slog to read.

These results are interesting for how they highlight how people read books for many reasons (such as marketing campaigns, literary prestige, or following a popular author), and this is reflected in their decision whether to continue reading or to abandon a book.

The book review website/social network GoodReads (2006–) is probably the single largest book-focused website in the Anglosphere—it is almost as widely used by authors, who as much as they may despise it, can’t live without it. (I was surprised when, reading writer interviews to learn about preferred writing times, how many have given interviews to GoodReads over the years.) I do not recommend GoodReads as it is extremely slow, largely unmaintained post-acquisition, and user-hostile (eg. eliminating the API access which allowed users to escape to better book websites like LibraryThing); nevertheless, it remains the largest book website and some interesting data can be extracted from it. Its functionality is limited, but GoodReads does support user-set tags on books in the form of ‘bookshelves’; one such tag is the GR “abandoned” bookshelf1, which lets people record which books they couldn’t finish reading.2 A (former) GoodReads user myself, I came across that tag and thought to myself that the results were amusing—take that, both highschool English class & J. K. Rowling!—but also totally misleading, since the bookshelf page only shows books sorted by total tags per book.

Little recent data. There aren’t many similar sources for people dropping books. Amazon’s Kindle has allowed for similar inferences by indirectly gauging reader attrition through the distribution of ‘highlights’ (user-made excerpts/notes), under the assumption that highlight-worthy material is uniformly distributed & thus absence of evidence (of highlighting) is evidence of absence (of reading), which Jordan Ellenberg in 2014 to facetiously estimate that Thomas Piketty’s Capital in the Twenty-First Century was the most abandoned book of 2014. That hasn’t seemed to be updated recently, even though Amazon surely has detailed analytics and knows exactly what books have highest abandonment rates (to address Kindle Unlimited fraud & cabals if nothing else), so GoodReads offers a useful alternative despite all the flaws of haphazardly-collected social media data.

Problems in Ranking by Raw Count

Popularity ≠ Unreadability. It’s no surprise that J. K. Rowling’s The Casual Vacancy or Catch-22 are at the top of the list, because they are read so often. A really abandonable-book probably would never become popular enough to get enough abandoned-tags to crack the list. (There are only two kinds of books: those people complain about reading, and those people don’t read.)

So as it is, a simple sort-by-count tells us nothing more than a lot of people have read these books. It’d be more interesting to look at something like what books have the highest percentage of abandoned tags versus total reads. GoodReads, as it happens, provides the number of ‘reads’ as recorded by GR users, so that’s good.

Low counts mean sampling error. However, the abandoned counts are also low in an absolute sense: GR displays only the top 25 pages (so we can’t look at least abandoned books), and to make the cutoff requires just 40 tags. In comparison, the most-read book on the list has 6,238,667 reads. The average percentage abandoned of reads is ~0.13%, which is extremely rare (1 in 769); I am certain that books are abandoned at a much higher rate than that, because I know I abandon books more frequently than 1 in every 769 books despite finishing books being a point of pride for me3, implying that the tags are not necessarily unbiased either.

Bayesian correction for flukes. So we have potentially unstable estimates of percentages: we have a lot (n = 1250) of entries, which we know ought to be similar, but with highly noisy estimates as we try to estimate what is a tiny & rare event (read → abandoned), which will exaggerate differences & mislead us due to winner’s curse/regression to the mean, particularly when we look at the extremes like top/bottom. This makes it a good fit for Bayesian multilevel modeling: we can treat each book as a random effect, and get shrinkage back to a common mean, reducing the damage done by the sampling error.

From a Bayesian multi-level model, we can then extract reasonably stable proportions, rank, and get out a more meaningful list of books by ‘abandon’ rates. Sounds good to me, and the result should be amusing.

Data

Scraping

No API or CLI, so manual extraction. Despite the GoodReads API (which has since been removed), scraping the data turned out to be a nuisance. The GoodReads API appears to only support exporting your particular data, and not scraping arbitrary tags or lists: it doesn’t even hint at the necessary functionality, and searching didn’t turn up any hints. (Perhaps unsurprisingly given GR’s neglect by Amazon since its acquisition.) The data appears on the web interface in HTML form, so I settled for scraping that. (If I wanted the reviews themselves, I would encounter even more horrifying limitations: GoodReads restricts every reader to seeing a maximum of 300 reviews for a book, no matter how many there actually are—one can see additional sets of 300 by choosing different sorting options, but one can never browse anything close to all of them. This means that a review which falls below the top 300 becomes invisible, and the top reviews become a self-reinforcing echo chamber.)

GR lies to bots.4

At first, I thought I could simply use my usual elinks -dump trick and iterate through the 25 pages GR allows.5 This failed because GR only shows you the other pages when you are logged in; if you are logged out, every page request returns the first page (!) and it’s no good. If there were more pages than 25, I’d look further into exporting cookies from Firefox to elinks or using a TagSoup-like library to parse it, but it’s not worth it for so little.

Instead, I copy-pasted each page’s text by hand, and did Emacs macro & regexp search-and-replaces to turn it into a usable CSV. I kept the provided metadata of author/average-GR-rating (1–5 Likert scale)/publication-year.

Preprocessing

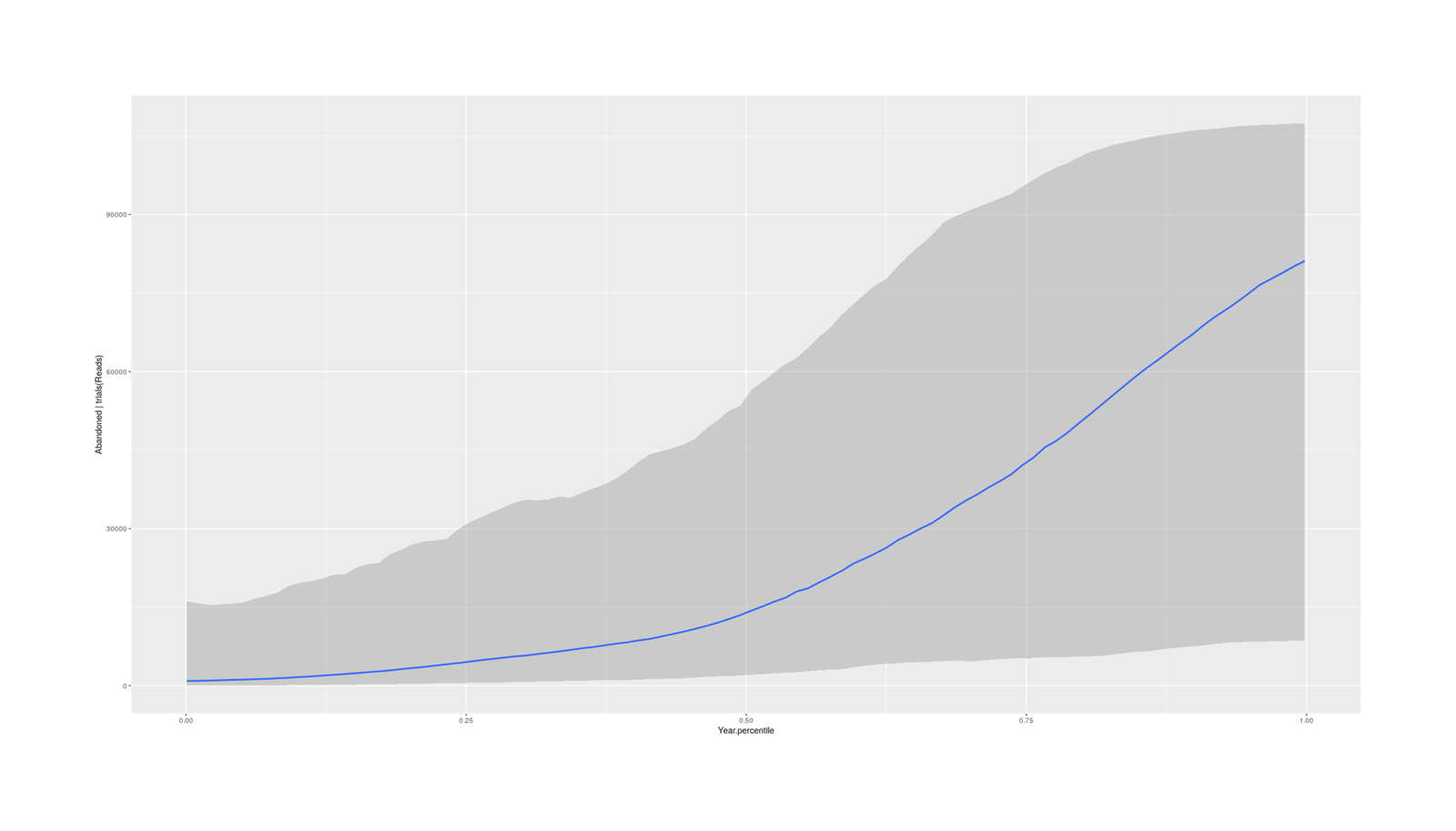

Transforming to normality. Reads/Abandoned are highly right-skewed, as one would expect, and a log-transform helps. Year is tricky, because it ranges from 750BC to 2019, but is so left-skewed to books published in the 20th & especially 21st century that the usual transforms like square/cube-rooting do nothing (which will be a problem if we want to use it as a covariate to look at temporal trends in abandon-rates); I settled for transforming years into percentiles. And we’ll want an Abandon rate in addition to the raw Abandoned count.

Reading the CSV of tag data in & transforming:

gr <- read.csv("https://gwern.net/doc/culture/2019-12-09-goodreads-bookshelf-abandoned.csv",

colClasses=c("factor", "factor", "integer", "numeric", "integer", "integer"))

## Raw data:

head(gr)

# Title Author Abandoned Rating Reads Year

# 1 The Casual Vacancy J. K. Rowling 763 3.30 288511 2012

# 2 Catch-22 (Catch-22, #1) Joseph Heller 685 3.98 679887 1961

# 3 American Gods (American Gods, #1) Neil Gaiman 663 4.11 693316 2001

# 4 A Game of Thrones (A Song of Ice and Fire, #1) George R. R. Martin 655 4.45 1833563 1996

# 5 The Book Thief Markus Zusak 608 4.37 1644846 2005

# 6 Outlander (Outlander, #1) Diana Gabaldon 572 4.22 744288 1991

## Transforms:

gr$Reads.log <- log(gr$Reads)

gr$Abandoned.log <- log(gr$Abandoned)

perc.rank <- function(x) trunc(rank(x))/length(x)

gr$Year.percentile <- perc.rank(gr$Year)

gr$Abandoned.rate <- gr$Abandoned / gr$Reads

gr$Abandoned.rate.log <- log(gr$Abandoned.rate)Description

Describing basic statistics:

library(skimr)

skim(gr) ## n = 50 books per page * 25 pages = 1250

# Skim summary statistics

# n obs: 1250

# n variables: 11

#

# ── Variable type:factor ──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

# variable missing complete n n_unique top_counts ordered

# Author 0 1250 1250 835 Ste: 12, Nei: 9, Nea: 8, Chu: 6 FALSE

# Title 0 1250 1250 1248 Lie: 2, The: 2, 'Su: 1, 10%: 1 FALSE

#

# ── Variable type:integer ─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

# variable missing complete n mean sd p0 p25 p50 p75 p100 hist

# Abandoned 0 1250 1250 104.95 84.51 43 53 74 124.75 763 ▇▂▁▁▁▁▁▁

# Reads 0 1250 1250 264410.29 491210.21 4837 48771.25 108093.5 263805.75 6238667 ▇▁▁▁▁▁▁▁

# Year 0 1250 1250 1982.6 162.45 -750 1995 2009 2013 2019 ▁▁▁▁▁▁▁▇

#

# ── Variable type:numeric ─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

# variable missing complete n mean sd p0 p25 p50 p75 p100 hist

# Abandoned.log 0 1250 1250 4.45 0.58 3.76 3.97 4.3 4.83 6.64 ▇▅▃▂▂▁▁▁

# Abandoned.rate 0 1250 1250 0.0013 0.0015 1.7e-05 0.00035 0.00079 0.0016 0.02 ▇▁▁▁▁▁▁▁

# Abandoned.rate.log 0 1250 1250 -7.21 1.09 -10.97 -7.95 -7.14 -6.43 -3.89 ▁▁▃▆▇▅▂▁

# Rating 0 1250 1250 3.92 0.28 2.69 3.76 3.94 4.11 4.65 ▁▁▁▃▆▇▃▁

# Reads.log 0 1250 1250 11.66 1.25 8.48 10.79 11.59 12.48 15.65 ▁▃▆▇▆▃▁▁

# Year.percentile 0 1250 1250 0.5 0.29 8e-04 0.25 0.51 0.72 1 ▇▇▆▇▇▇▆▇

round(digits=2, cor(subset(gr, select=c("Abandoned.log", "Reads.log", "Rating", "Year.percentile", "Abandoned.rate.log"))))

# Abandoned.log Reads.log Rating Year.percentile Abandoned.rate.log

# Abandoned.log 1.00

# Reads.log 0.49 1.00

# Rating 0.08 0.42 1.00

# Year.percentile -0.09 -0.36 -0.18 1.00

# Abandoned.rate.log -0.03 -0.89 -0.44 0.36 1.00Newer/lower-rated = worse. As expected, read count correlates strongly with abandon count, and also as makes sense, being a more recent book correlates with fewer reads (less time for people to have read it). Abandoned rates mechanically correlate with abandoned/read counts, of course, since it’s derived from them; more interesting is that higher ratings predict much lower abandon rates, and being older also predicts lower abandon rates. The further correlation of average rating with year percentile is interesting, as it suggests more recent books both have worse ratings and are otherwise more likely to be abandoned—you’d think people would avoid new books with lower ratings, but I guess novelty & marketing are enough to convince people to waste their time.

Analysis

Simple Proportions

So the data looks sensible. What do the raw abandon rates look like when we look at the worst (largest) abandon rates?

gr$Rank.raw <- 1:nrow(gr)

gr <- gr[order(gr$Abandoned.rate, decreasing=TRUE),]

gr$Rank.proportion <- 1:nrow(gr)

cor(gr$Rank.raw, gr$Rank.proportion, method = "spearman")

# [1] -0.0406028435

gr[1:30,c(13, 12, 1, 2, 10)]

# Rank.proportion Rank.raw Title Author Abandoned.rate

# 1 117 Black Leopard, Red Wolf (The Dark Star Trilogy, #1) Marlon James 0.0204

# 2 482 Space Opera Catherynne M. Valente 0.0114

# 3 411 Little, Big John Crowley 0.0106

# 4 256 The Witches: Salem, 1692 Stacy Schiff 0.0099

# 5 982 Tender Morsels Margo Lanagan 0.0096

# 6 663 Too Like the Lightning (Terra Ignota, #1) Ada Palmer 0.0093

# 7 1212 The Flame Alphabet Ben Marcus 0.0091

# 8 592 New York 2140 Kim Stanley Robinson 0.0090

# 9 690 Hild Nicola Griffith 0.0085

# 10 1039 Gingerbread Helen Oyeyemi 0.0084

# 11 701 Dhalgren Samuel R. Delany 0.0083

# 12 114 Winter's Tale Mark Helprin 0.0078

# 13 134 A Brief History of Seven Killings Marlon James 0.0078

# 14 10 Infinite Jest David Foster Wallace 0.0075

# 15 184 The Ministry of Utmost Happiness Arundhati Roy 0.0072

# 16 962 Gold Fame Citrus Claire Vaye Watkins 0.0071

# 17 1007 I Am a Cat Natsume Sōseki 0.0070

# 18 159 Milkman Anna Burns 0.0066

# 19 689 Avenue of Mysteries John Irving 0.0064

# 20 718 The Years of Rice and Salt Kim Stanley Robinson 0.0064

# 21 371 Zone One Colson Whitehead 0.0063

# 22 522 To Rise Again at a Decent Hour Joshua Ferris 0.0063

# 23 565 City on Fire Garth Risk Hallberg 0.0062

# 24 330 Telegraph Avenue Michael Chabon 0.0061

# 25 576 It Can’t Happen Here Sinclair Lewis 0.0061

# 26 568 The Finkler Question Howard Jacobson 0.0059

# 27 1128 Spinster: Making a Life of One's Own Kate Bolick 0.0058

# 28 771 The Grace of Kings (The Dandelion Dynasty, #1) Ken Liu 0.0058

# 29 645 Barkskins Annie Proulx 0.0057

# 30 1134 A Girl Is a Half-formed Thing Eimear McBride 0.0057The ranking by rate looks completely different from ranking by count: The Casual Vacancy, Catch-22, American Gods, A Game of Thrones6 etc have all vanished and been replaced by an almost disjoint set. (In fact, the two rankings are uncorrelated: ρ = −0.04.)

Proportion rankings look right. These rankings look much more sensible to me as estimates of most-likely-to-be-abandoned: I did in fact abandon Dhalgren in disgust, and while I enjoyed Natsume Sōseki’s satires of post-Meiji Japan & academia in I Am a Cat, I understand why readers might drop it. I’m unfamiliar with Black Leopard, Red Wolf which tops the ranking, but on looking into it further, that makes sense: it is set in Africa & attempts to Africanize American superhero pop culture, has been hailed by the literary press & given awards, somewhat politicized, is over 600 pages long, and even its fans concede that it is difficult to read7. So Black Leopard, Red Wolf topping the ranking may not be flattering, but may be fair.

Bayesian Modeling

Implementing Bayesian correction… However—that brings us back to sampling error. Black Leopard, Red Wolf just came out in 2019 and hardly anyone has read it. In several cases, a member of the top 50 approaches the cut-off for not being in the list at all by having <40 abandon count (eg. Spinster or The Flame Alphabet). Is it really plausible that they have the highest abandon rates? This smacks of the winner’s curse, where measurement error/small noisy datapoints dominate the extremes of an analysis without actually being extreme (just numerous & poorly estimated).

So the next step is Bayesian modeling to account for the uncertainty caused by small n for these most-abandonable books. Using brms, we estimate a simple Bayesian binomial model, with a random effect for a given book’s abandon rate, and add a semi-informative prior expressing our opinion that all books are somewhat similar in abandon rate & there are not wacky huge differences in log odds.

Results:

library(brms)

gbSimple <- brm(Abandoned|trials(Reads) ~ (1|Title), family=binomial,

prior=c(prior(student_t(3,0,0.5), class="sd", group="Title")), data=gr, chains=30)

gbSimple

# Formula: Abandoned | trials(Reads) ~ (1 | Title)

# Data: gr (Number of observations: 1250)

# Samples: 30 chains, each with iter = 2000; warmup = 1000; thin = 1;

# total post-warmup samples = 30000

#

# Group-Level Effects:

# ~Title (Number of levels: 1248)

# Estimate Est.Error l-95% CI u-95% CI Eff.Sample Rhat

# sd(Intercept) 1.08 0.02 1.04 1.13 751 1.04

#

# Population-Level Effects:

# Estimate Est.Error l-95% CI u-95% CI Eff.Sample Rhat

# Intercept -7.21 0.03 -7.27 -7.15 363 1.09

rgbs <- as.data.frame(ranef(gbSimple)$Title)

rgbs <- rgbs[order(rgbs$Estimate.Intercept, decreasing=TRUE),]

## Combine with original GR dataset to examine full rankings at leisure:

rgbs$Title <- rownames(rgbs)

grShrunk <- merge(rgbs, gr, all=TRUE)

grShrunk <- grShrunk[order(grShrunk$Estimate.Intercept, decreasing=TRUE),]

write.csv(grShrunk, row.names=FALSE,

file="2020-01-05-goodreads-bookshelf-abandoned-posteriorproportions.csv")

## https://gwern.net/doc/culture/2020-01-05-goodreads-bookshelf-abandoned-posteriorproportions.csvhead(rgbs, n=30)

Estimate.Intercept Est.Error.Intercept Q2.5.Intercept Q97.5.Intercept

# Black Leopard, Red Wolf (The Dark Star Trilogy, #1) 3.32379864 0.0790607148 3.16589591 3.47735486

# Space Opera 2.71627444 0.1112760421 2.49346320 2.92865256

# Little, Big 2.64442569 0.1059941782 2.43214091 2.84849322

# The Witches: Salem, 1692 2.58375600 0.0899676079 2.40497914 2.75670092

# Tender Morsels 2.52059267 0.1430103435 2.23171890 2.79277487

# Too Like the Lightning (Terra Ignota, #1) 2.51037552 0.1248071384 2.26078544 2.74843195

# New York 2140 2.47210718 0.1191486878 2.23275007 2.69988279

# The Flame Alphabet 2.46184114 0.1554327209 2.15012868 2.75649392

# Hild 2.41661420 0.1280633539 2.15894924 2.66063531

# Dhalgren 2.39653706 0.1279221591 2.14009601 2.64025458

# Gingerbread 2.39249509 0.1477781116 2.09432888 2.67215550

# Winter's Tale 2.35943852 0.0792310629 2.20259365 2.51172500

# A Brief History of Seven Killings 2.34778502 0.0787129413 2.19104704 2.49958076

# Infinite Jest 2.31906968 0.0539429921 2.21314673 2.42519239

# The Ministry of Utmost Happiness 2.27664588 0.0827673701 2.11245754 2.43835817

# Gold Fame Citrus 2.23087746 0.1436343235 1.94186387 2.50264489

# I Am a Cat 2.21057738 0.1455388602 1.91550434 2.48521722

# Milkman 2.18260216 0.0814159601 2.01950559 2.33876916

# Avenue of Mysteries 2.13978963 0.1272281207 1.88351811 2.38285098

# Zone One 2.13680900 0.1028742023 1.93089374 2.33295611

# The Years of Rice and Salt 2.12679705 0.1265444209 1.87263437 2.36955658

# To Rise Again at a Decent Hour 2.11845577 0.1129727195 1.89144903 2.33461408

# City on Fire 2.11101764 0.1169914835 1.87395603 2.33317581

# Telegraph Avenue 2.10574358 0.0969163720 1.91411544 2.29259053

# It Can’t Happen Here 2.08954687 0.1167404830 1.85544344 2.31284728

# The Finkler Question 2.06326071 0.1174026491 1.82910415 2.28826845

# Barkskins 2.02841842 0.1217175555 1.78412138 2.26053102

# The Grace of Kings (The Dandelion Dynasty, #1) 2.02389635 0.1315723831 1.75779024 2.27784518

# Spinster: Making a Life of One's Own 2.01611353 0.1538652622 1.70673329 2.30803729

# A Girl Is a Half-formed Thing 2.00585364 0.1538617892 1.69349463 2.29740124

titles <- sapply(gsub(" ", ".", row.names(rgbs)[1:30]),

function(t) { paste0("r_Title[", t, ",Intercept]") } )

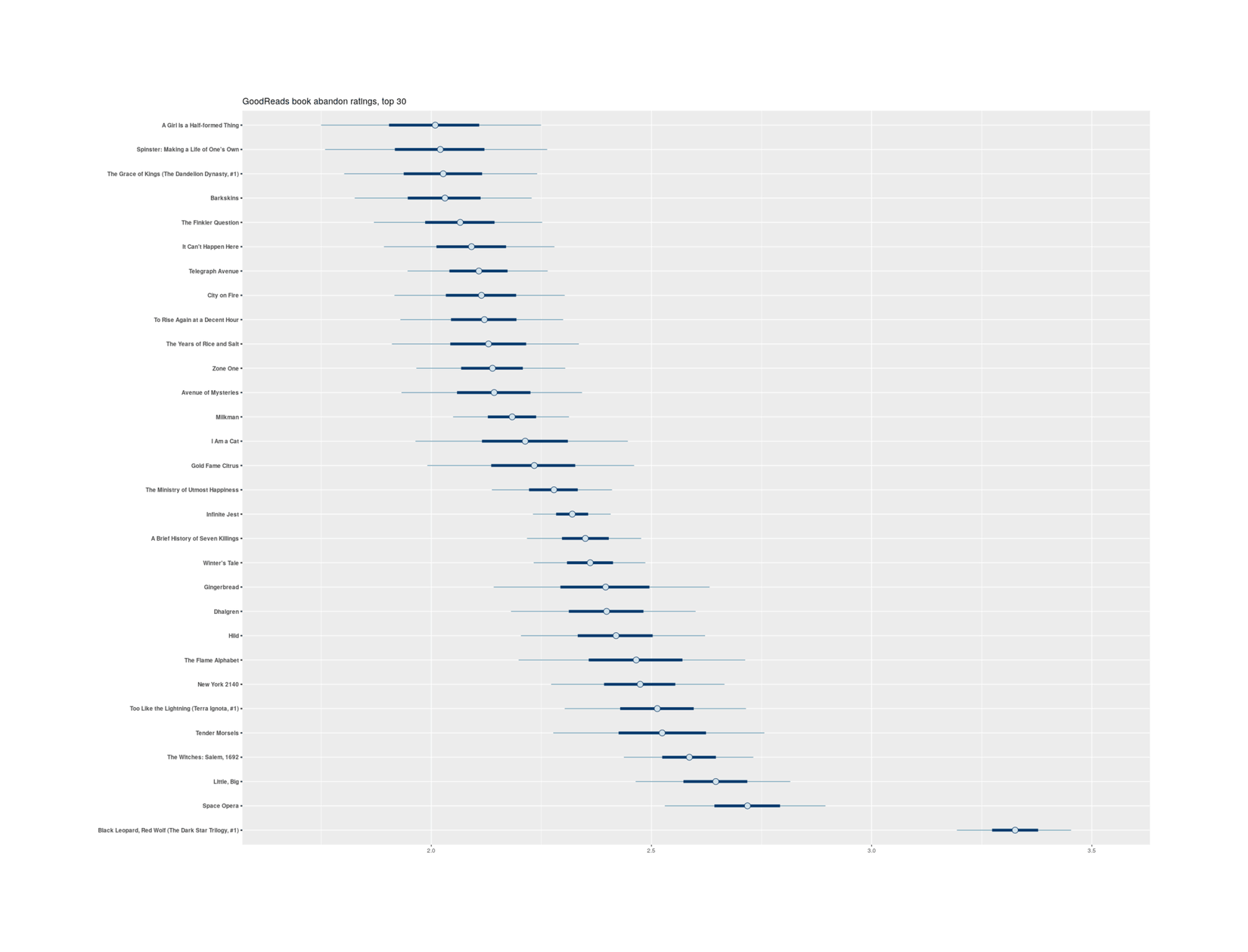

stanplot(gbSimple, pars=titles, exact_match=TRUE) + scale_y_discrete(labels =row.names(rgbs)[1:30]) +

labs(title="GoodReads book abandon ratings, top 30")

Black Leopard, Red Wolf does not enjoy any reprieve here, and to my annoyance, the top ranking changes little from raw proportion to shrunken proportion:

raw <- as.character(gr[order(gr$Abandoned.rate, decreasing=TRUE),]$Title)[1:30]

adjusted <- rownames(rgbs)[1:30]

rawDf <- data.frame(Title=raw, Ranking.raw=1:30)

adjustedDf <- data.frame(Title=adjusted, Ranking.adjusted=1:30)

comparisonDf <- merge(adjustedDf, rawDf, all=TRUE)

comparisonDf$Difference <- comparisonDf$Ranking.raw - comparisonDf$Ranking.adjusted

comparisonDf <- comparisonDf[order(comparisonDf$Ranking.raw),]; comparisonDf

# Title Ranking.adjusted Ranking.raw Difference

# 5 Black Leopard, Red Wolf (The Dark Star Trilogy, #1) 1 1 0

# 17 Space Opera 2 2 0

# 14 Little, Big 3 3 0

# 25 The Witches: Salem, 1692 4 4 0

# 20 Tender Morsels 5 5 0

# 28 Too Like the Lightning (Terra Ignota, #1) 6 6 0

# 22 The Flame Alphabet 8 7 -1

# 16 New York 2140 7 8 1

# 10 Hild 9 9 0

# 8 Gingerbread 11 10 -1

# 7 Dhalgren 10 11 1

# 29 Winter's Tale 12 12 0

# 1 A Brief History of Seven Killings 13 13 0

# 12 Infinite Jest 14 14 0

# 24 The Ministry of Utmost Happiness 15 15 0

# 9 Gold Fame Citrus 16 16 0

# 11 I Am a Cat 17 17 0

# 15 Milkman 18 18 0

# 3 Avenue of Mysteries 19 19 0

# 26 The Years of Rice and Salt 21 20 -1

# 30 Zone One 20 21 1

# 27 To Rise Again at a Decent Hour 22 22 0

# 6 City on Fire 23 23 0

# 19 Telegraph Avenue 24 24 0

# 13 It Can’t Happen Here 25 25 0

# 21 The Finkler Question 26 26 0

# 18 Spinster: Making a Life of One's Own 29 27 -2

# 23 The Grace of Kings (The Dandelion Dynasty, #1) 28 28 0

# 4 Barkskins 27 29 2

# 2 A Girl Is a Half-formed Thing 30 30 0…turns out to be unnecessary. Ah well! Apparently the restriction to >40 “abandoned” counts, while unfortunate for many reasons, actually does take care of much of the winner’s-curse bias in looking at raw proportions: the Bayesian model can’t adjust it much based on the available data.

Least Abandoned

Top-30 addictive books. On a more positive note, of the books which are included in the dataset, which 30 have the lowest abandon rates? I find the results entirely plausible8:

grShrunk[1220:1250,c(1, 6)]

# Title Author

# The Kite Runner Khaled Hosseini

# The Return of the King (The Lord of the Rings, #3) J. R. R. Tolkien

# The Old Man and the Sea Ernest Hemingway

# The Stranger Albert Camus

# The Da Vinci Code (Robert Langdon, #2) Dan Brown

# The Help Kathryn Stockett

# Mockingjay (The Hunger Games, #3) Suzanne Collins

# The Lightning Thief (Percy Jackson and the Olympians, #1) Rick Riordan

# Tuesdays with Morrie Mitch Albom

# A Thousand Splendid Suns Khaled Hosseini

# Twilight (Twilight, #1) Stephenie Meyer

# Bridget Jones's Diary (Bridget Jones, #1) Helen Fielding

# The Great Gatsby F. Scott Fitzgerald

# Memoirs of a Geisha Arthur Golden

# The Secret Garden Frances Hodgson Burnett

# The Secret Life of Bees Sue Monk Kidd

# My Sister's Keeper Jodi Picoult

# The Fault in Our Stars John Green

# The Diary of a Young Girl Anne Frank

# The Notebook (The Notebook, #1) Nicholas Sparks

# Catching Fire (The Hunger Games, #2) Suzanne Collins

# New Moon (Twilight, #2) Stephenie Meyer

# Eclipse (Twilight, #3) Stephenie Meyer

# To Kill a Mockingbird (To Kill a Mockingbird, #1) Harper Lee

# Angels & Demons (Robert Langdon, #1) Dan Brown

# The Giver (The Giver, #1) Lois Lowry

# Harry Potter and the Order of the Phoenix (Harry Potter, #5) J. K. Rowling

# The Hunger Games (The Hunger Games, #1) Suzanne Collins

# Animal Farm George Orwell

# Harry Potter and the Chamber of Secrets (Harry Potter, #2) J. K. Rowling

# Harry Potter and the Sorcerer's Stone (Harry Potter, #1) J. K. RowlingAfter all, whatever else you might think of books like The Da Vinci Code or Twilight, they did hook their readers; and before Harry Potter was a movie franchise juggernaut, it was the delight & despair of school/public librarians attempting to cater to suddenly-ravenous children.

Full Rankings

The full n = 1250 posterior proportion rankings in descending order as a table (click to uncollapse):

|

Rank |

Title |

Author |

Year |

Rating |

Abandonment |

|---|---|---|---|---|---|

|

1 |

Black Leopard, Red Wolf (The Dark Star Trilogy, #1) |

Marlon James |

2019 |

3.49 |

3.323 |

|

2 |

Space Opera |

Catherynne M. Valente |

2018 |

3.50 |

2.714 |

|

3 |

Little, Big |

John Crowley |

1981 |

3.83 |

2.643 |

|

4 |

The Witches: Salem, 1692 |

Stacy Schiff |

2015 |

3.17 |

2.582 |

|

5 |

Tender Morsels |

Margo Lanagan |

2007 |

3.56 |

2.520 |

|

6 |

Too Like the Lightning (Terra Ignota, #1) |

Ada Palmer |

2016 |

3.82 |

2.508 |

|

7 |

New York 2140 |

Kim Stanley Robinson |

2017 |

3.55 |

2.472 |

|

8 |

The Flame Alphabet |

Ben Marcus |

2012 |

2.88 |

2.459 |

|

9 |

Hild |

Nicola Griffith |

2013 |

3.78 |

2.415 |

|

10 |

Dhalgren |

Samuel R. Delany |

1975 |

3.78 |

2.394 |

|

11 |

Gingerbread |

Helen Oyeyemi |

2019 |

3.07 |

2.391 |

|

12 |

Winter’s Tale |

Mark Helprin |

1983 |

3.50 |

2.359 |

|

13 |

A Brief History of Seven Killings |

Marlon James |

2014 |

3.87 |

2.347 |

|

14 |

Infinite Jest |

David Foster Wallace |

1996 |

4.29 |

2.317 |

|

15 |

The Ministry of Utmost Happiness |

Arundhati Roy |

2017 |

3.47 |

2.275 |

|

16 |

Gold Fame Citrus |

Claire Vaye Watkins |

2015 |

3.30 |

2.228 |

|

17 |

I Am a Cat |

Natsume Sōseki |

1905 |

3.71 |

2.208 |

|

18 |

Milkman |

Anna Burns |

2018 |

3.60 |

2.181 |

|

19 |

Avenue of Mysteries |

John Irving |

2015 |

3.23 |

2.139 |

|

20 |

Zone One |

Colson Whitehead |

2011 |

3.26 |

2.135 |

|

21 |

The Years of Rice and Salt |

Kim Stanley Robinson |

2002 |

3.73 |

2.125 |

|

22 |

To Rise Again at a Decent Hour |

Joshua Ferris |

2014 |

3.08 |

2.118 |

|

23 |

City on Fire |

Garth Risk Hallberg |

2015 |

3.41 |

2.109 |

|

24 |

Telegraph Avenue |

Michael Chabon |

2012 |

3.38 |

2.104 |

|

25 |

It Can’t Happen Here |

Sinclair Lewis |

1935 |

3.76 |

2.089 |

|

26 |

The Finkler Question |

Howard Jacobson |

2010 |

2.78 |

2.062 |

|

27 |

Barkskins |

Annie Proulx |

2016 |

3.77 |

2.026 |

|

28 |

The Grace of Kings (The Dandelion Dynasty, #1) |

Ken Liu |

2015 |

3.73 |

2.022 |

|

29 |

Spinster: Making a Life of One’s Own |

Kate Bolick |

2015 |

3.45 |

2.014 |

|

30 |

A Girl Is a Half-formed Thing |

Eimear McBride |

2013 |

3.47 |

2.003 |

|

31 |

Jane, Unlimited |

Kristin Cashore |

2017 |

3.36 |

1.990 |

|

32 |

Do Not Say We Have Nothing |

Madeleine Thien |

2016 |

3.90 |

1.942 |

|

33 |

The Map of Time |

Félix J. Palma |

2008 |

3.38 |

1.936 |

|

34 |

The Quick |

Lauren Owen |

2014 |

3.24 |

1.922 |

|

35 |

The Queen of the Night |

Alexander Chee |

2016 |

3.43 |

1.912 |

|

36 |

What is Not Yours is Not Yours |

Helen Oyeyemi |

2016 |

3.64 |

1.902 |

|

37 |

Frog Music |

Emma Donoghue |

2014 |

3.15 |

1.897 |

|

38 |

Bridge of Clay |

Markus Zusak |

2018 |

3.80 |

1.890 |

|

39 |

The Good Neighbor: The Life and Work of Fred Rogers |

Maxwell King |

2018 |

4.01 |

1.875 |

|

40 |

The Prague Cemetery |

Umberto Eco |

2010 |

3.43 |

1.874 |

|

41 |

4 3 2 1 |

Paul Auster |

2017 |

3.84 |

1.869 |

|

42 |

The Luminaries |

Eleanor Catton |

2013 |

3.72 |

1.864 |

|

43 |

Two Years Eight Months and Twenty-Eight Nights |

Salman Rushdie |

2015 |

3.38 |

1.853 |

|

44 |

The Children’s Book |

A. S. Byatt |

2009 |

3.67 |

1.848 |

|

45 |

The Unconsoled |

Kazuo Ishiguro |

1995 |

3.53 |

1.827 |

|

46 |

How to Live Safely in a Science Fictional Universe |

Charles Yu |

2010 |

3.38 |

1.819 |

|

47 |

The Golden Notebook |

Doris Lessing |

1962 |

3.76 |

1.816 |

|

48 |

The Gone-Away World |

Nick Harkaway |

2008 |

4.12 |

1.812 |

|

49 |

The Wangs vs. the World |

Jade Chang |

2016 |

3.31 |

1.811 |

|

50 |

The Mermaid and Mrs. Hancock |

Imogen Hermes Gowar |

2018 |

3.61 |

1.811 |

|

51 |

Reservoir 13 |

Jon McGregor |

2017 |

3.59 |

1.810 |

|

52 |

Bleeding Edge |

Thomas Pynchon |

2013 |

3.57 |

1.800 |

|

53 |

After Alice |

Gregory Maguire |

2015 |

2.79 |

1.797 |

|

54 |

Deathless (Leningrad Diptych, #1) |

Catherynne M. Valente |

2011 |

4.01 |

1.785 |

|

55 |

White Trash: The 400-Year Untold History of Class in America |

Nancy Isenberg |

2016 |

3.74 |

1.775 |

|

56 |

2312 |

Kim Stanley Robinson |

2012 |

3.45 |

1.771 |

|

57 |

On Such a Full Sea |

Chang-rae Lee |

2014 |

3.48 |

1.766 |

|

58 |

Open City |

Teju Cole |

2011 |

3.50 |

1.754 |

|

59 |

Harry Potter and the Methods of Rationality |

Eliezer Yudkowsky |

2015 |

4.39 |

1.754 |

|

60 |

Titus Groan (Gormenghast, #1) |

Mervyn Peake |

1946 |

3.91 |

1.736 |

|

61 |

Kraken |

China Miéville |

2010 |

3.60 |

1.728 |

|

62 |

Moonglow |

Michael Chabon |

2016 |

3.81 |

1.727 |

|

63 |

Wildwood (Wildwood Chronicles, #1) |

Colin Meloy |

2011 |

3.65 |

1.706 |

|

64 |

Gravity’s Rainbow |

Thomas Pynchon |

1973 |

4.01 |

1.698 |

|

65 |

Flat Broke with Two Goats: A Memoir of Appalachia |

Jennifer McGaha |

2018 |

3.24 |

1.695 |

|

66 |

Skippy Dies |

Paul Murray |

2010 |

3.72 |

1.695 |

|

67 |

Quicksilver (The Baroque Cycle, #1) |

Neal Stephenson |

2003 |

3.93 |

1.693 |

|

68 |

The Sellout |

Paul Beatty |

2015 |

3.77 |

1.683 |

|

69 |

Super Sad True Love Story |

Gary Shteyngart |

2010 |

3.45 |

1.681 |

|

70 |

2666 |

Roberto Bolaño |

2004 |

4.21 |

1.680 |

|

71 |

The Secret Chord |

Geraldine Brooks |

2015 |

3.58 |

1.679 |

|

72 |

Swamplandia! |

Karen Russell |

2011 |

3.23 |

1.673 |

|

73 |

Lincoln in the Bardo |

George Saunders |

2017 |

3.76 |

1.670 |

|

74 |

A Manual for Cleaning Women: Selected Stories |

Lucia Berlin |

2015 |

4.18 |

1.658 |

|

75 |

S. |

J. J. Abrams |

2013 |

3.84 |

1.646 |

|

76 |

Liar, Temptress, Soldier, Spy: Four Women Undercover in the Civil War |

Karen Abbott |

2014 |

3.75 |

1.641 |

|

77 |

The Empathy Exams |

Leslie Jamison |

2014 |

3.64 |

1.640 |

|

78 |

The Red House |

Mark Haddon |

2012 |

2.94 |

1.636 |

|

79 |

The Pox Party (The Astonishing Life of Octavian Nothing, Traitor to the Nation, #1) |

M. T. Anderson |

2006 |

3.50 |

1.635 |

|

80 |

Uncommon Type |

Tom Hanks |

2017 |

3.44 |

1.631 |

|

81 |

Welcome to Night Vale (Welcome to Night Vale, #1) |

Joseph Fink |

2015 |

3.84 |

1.622 |

|

82 |

The Girl Who Circumnavigated Fairyland in a Ship of Her Own Making (Fairyland, #1) |

Catherynne M. Valente |

2011 |

3.96 |

1.584 |

|

83 |

How Not to Be Wrong: The Power of Mathematical Thinking |

Jordan Ellenberg |

2014 |

3.96 |

1.573 |

|

84 |

The Favorite Sister |

Jessica Knoll |

2018 |

3.09 |

1.569 |

|

85 |

The Rise and Fall of D.O.D.O. |

Neal Stephenson |

2017 |

3.87 |

1.551 |

|

86 |

Theft by Finding: Diaries 1977–2002 |

David Sedaris |

2015 |

3.93 |

1.549 |

|

87 |

Wolf Hall (Thomas Cromwell #1) |

Hilary Mantel |

2009 |

3.86 |

1.545 |

|

88 |

The Good Lord Bird |

James McBride |

2013 |

3.77 |

1.542 |

|

89 |

The Savage Detectives |

Roberto Bolaño |

1998 |

4.12 |

1.534 |

|

90 |

Unsheltered |

Barbara Kingsolver (Author, Narrator) |

2018 |

3.61 |

1.527 |

|

91 |

How to Be Both |

Ali Smith |

2014 |

3.65 |

1.518 |

|

92 |

Capital in the Twenty-First Century |

Thomas Piketty |

2013 |

4.04 |

1.516 |

|

93 |

NW |

Zadie Smith |

2012 |

3.44 |

1.501 |

|

94 |

The Post-Birthday World |

Lionel Shriver |

2007 |

3.54 |

1.500 |

|

95 |

My Absolute Darling |

Gabriel Tallent |

2017 |

3.63 |

1.496 |

|

96 |

How to Read a Book: The Classic Guide to Intelligent Reading |

Mortimer J. Adler |

1940 |

4.01 |

1.492 |

|

97 |

A Murder in Time (Kendra Donovan, #1) |

Julie McElwain |

2016 |

3.75 |

1.490 |

|

98 |

The Windup Girl |

Paolo Bacigalupi |

2009 |

3.75 |

1.488 |

|

99 |

The Lonely Polygamist |

Brady Udall |

2010 |

3.51 |

1.484 |

|

100 |

We Are Never Meeting In Real Life |

Samantha Irby |

2017 |

3.92 |

1.479 |

|

101 |

The High Mountains of Portugal |

Yann Martel |

2016 |

3.38 |

1.472 |

|

102 |

The Bees |

Laline Paull |

2014 |

3.70 |

1.469 |

|

103 |

The Better Angels of Our Nature: Why Violence Has Declined |

Steven Pinker |

2010 |

4.18 |

1.463 |

|

104 |

The People in the Trees |

Hanya Yanagihara |

2013 |

3.69 |

1.458 |

|

105 |

H is for Hawk |

Helen Macdonald |

2014 |

3.73 |

1.458 |

|

106 |

The Flamethrowers |

Rachel Kushner |

2013 |

3.48 |

1.457 |

|

107 |

Then We Came to the End |

Joshua Ferris |

2007 |

3.46 |

1.442 |

|

108 |

The Pale King |

David Foster Wallace |

2011 |

3.95 |

1.438 |

|

109 |

The Buried Giant |

Kazuo Ishiguro |

2015 |

3.49 |

1.434 |

|

110 |

Gödel, Escher, Bach: An Eternal Golden Braid |

Douglas R. Hofstadter |

1979 |

4.29 |

1.432 |

|

111 |

The Peripheral |

William Gibson |

2014 |

3.94 |

1.432 |

|

112 |

The Essex Serpent |

Sarah Perry |

2016 |

3.60 |

1.428 |

|

113 |

The Monstrumologist (The Monstrumologist, #1) |

Rick Yancey |

2009 |

3.88 |

1.421 |

|

114 |

Imagine Me Gone |

Adam Haslett |

2016 |

3.69 |

1.410 |

|

115 |

The Little Paris Bookshop |

Nina George |

2013 |

3.50 |

1.410 |

|

116 |

Idaho |

Emily Ruskovich |

2017 |

3.52 |

1.402 |

|

117 |

Descent |

Tim Johnston |

2015 |

3.60 |

1.397 |

|

118 |

James Potter and the Hall of Elders’ Crossing (James Potter, #1) |

G. Norman Lippert |

2007 |

3.77 |

1.396 |

|

119 |

American War |

Omar El Akkad |

2017 |

3.79 |

1.385 |

|

120 |

The Little Friend |

Donna Tartt |

2002 |

3.46 |

1.381 |

|

121 |

Some Luck (Last Hundred Years: A Family Saga, #1) |

Jane Smiley |

2014 |

3.61 |

1.369 |

|

122 |

Underworld |

Don DeLillo |

1997 |

3.92 |

1.359 |

|

123 |

All the Birds in the Sky |

Charlie Jane Anders |

2016 |

3.58 |

1.354 |

|

124 |

Fire and Fury: Inside the Trump White House |

Michael Wolff |

2018 |

3.44 |

1.351 |

|

125 |

The Water Knife |

Paolo Bacigalupi |

2015 |

3.83 |

1.350 |

|

126 |

Accelerando |

Charles Stross |

2005 |

3.88 |

1.346 |

|

127 |

Jonathan Strange & Mr Norrell |

Susanna Clarke |

2004 |

3.82 |

1.341 |

|

128 |

Perdido Street Station (New Crobuzon, #1) |

China Miéville |

2000 |

3.97 |

1.333 |

|

129 |

The Road to Character |

David Brooks |

2015 |

3.66 |

1.327 |

|

130 |

The Zookeeper’s Wife |

Diane Ackerman |

2007 |

3.46 |

1.320 |

|

131 |

Meddling Kids |

Edgar Cantero |

2017 |

3.56 |

1.319 |

|

132 |

The Sympathizer |

Viet Thanh Nguyen |

2015 |

4.00 |

1.319 |

|

133 |

Dark Money: The Hidden History of the Billionaires Behind the Rise of the Radical Right |

Jane Mayer |

2016 |

4.34 |

1.317 |

|

134 |

Night Train to Lisbon |

Pascal Mercier |

2004 |

3.72 |

1.307 |

|

135 |

Fates and Furies |

Lauren Groff |

2015 |

3.57 |

1.304 |

|

136 |

Ulysses |

James Joyce |

1922 |

3.73 |

1.304 |

|

137 |

Nexus (Nexus, #1) |

Ramez Naam |

2012 |

4.05 |

1.293 |

|

138 |

John Dies at the End (John Dies at the End, #1) |

David Wong |

2007 |

3.90 |

1.290 |

|

139 |

Warlight |

Michael Ondaatje |

2018 |

3.64 |

1.286 |

|

140 |

Once Upon a River |

Diane Setterfield |

2018 |

3.99 |

1.284 |

|

141 |

The Yiddish Policemen’s Union |

Michael Chabon |

2007 |

3.71 |

1.279 |

|

142 |

The Library at Mount Char |

Scott Hawkins |

2015 |

4.09 |

1.279 |

|

143 |

The Casual Vacancy |

J. K. Rowling |

2012 |

3.30 |

1.276 |

|

144 |

Vampires in the Lemon Grove |

Karen Russell |

2013 |

3.68 |

1.275 |

|

145 |

The Satanic Verses |

Salman Rushdie |

1988 |

3.71 |

1.273 |

|

146 |

California |

Edan Lepucki |

2014 |

3.23 |

1.264 |

|

147 |

Snow |

Orhan Pamuk |

2002 |

3.58 |

1.258 |

|

148 |

The Hidden Life of Trees: What They Feel, How They Communicate – Discoveries from a Secret World |

Peter Wohlleben |

2015 |

4.06 |

1.256 |

|

149 |

We Are Not Ourselves |

Matthew Thomas |

2014 |

3.70 |

1.253 |

|

150 |

Here I Am |

Jonathan Safran Foer |

2016 |

3.64 |

1.253 |

|

151 |

The Paying Guests |

Sarah Waters |

2014 |

3.41 |

1.241 |

|

152 |

Gilead (Gilead, #1) |

Marilynne Robinson |

2004 |

3.85 |

1.239 |

|

153 |

The Bone Clocks |

David Mitchell |

2014 |

3.83 |

1.233 |

|

154 |

Lab Girl |

Hope Jahren |

2016 |

4.02 |

1.230 |

|

155 |

Sex at Dawn: The Prehistoric Origins of Modern Sexuality |

Christopher Ryan |

2010 |

4.02 |

1.226 |

|

156 |

Antifragile: Things That Gain from Disorder |

Nassim Nicholas Taleb |

2012 |

4.09 |

1.225 |

|

157 |

The Overstory |

Richard Powers |

2018 |

4.19 |

1.225 |

|

158 |

The Readers of Broken Wheel Recommend |

Katarina Bivald |

2013 |

3.55 |

1.224 |

|

159 |

Ancillary Justice (Imperial Radch #1) |

Ann Leckie |

2013 |

3.98 |

1.219 |

|

160 |

LaRose |

Louise Erdrich |

2016 |

3.87 |

1.217 |

|

161 |

Time and Again (Time, #1) |

Jack Finney |

1970 |

3.96 |

1.211 |

|

162 |

The Almost Moon |

Alice Sebold |

2007 |

2.69 |

1.209 |

|

163 |

The Book of Disquiet: The Complete Edition |

Fernando Pessoa |

1982 |

4.46 |

1.207 |

|

164 |

Hidden Figures |

Margot Lee Shetterly |

2016 |

3.97 |

1.206 |

|

165 |

Seating Arrangements |

Maggie Shipstead |

2012 |

3.03 |

1.205 |

|

166 |

A Constellation of Vital Phenomena |

Anthony Marra |

2013 |

4.13 |

1.201 |

|

167 |

The City of Brass (The Daevabad Trilogy, #1) |

S. A. Chakraborty |

2017 |

4.15 |

1.198 |

|

168 |

Outline |

Rachel Cusk |

2014 |

3.62 |

1.197 |

|

169 |

In the Unlikely Event |

Judy Blume |

2015 |

3.52 |

1.192 |

|

170 |

Min kamp 1 (Min kamp #1) |

Karl Ove Knausgård |

2009 |

4.08 |

1.189 |

|

171 |

Boneshaker (The Clockwork Century, #1) |

Cherie Priest |

2009 |

3.50 |

1.184 |

|

172 |

My Name Is Red |

Orhan Pamuk |

1998 |

3.85 |

1.184 |

|

173 |

The Gathering |

Anne Enright |

2007 |

3.07 |

1.178 |

|

174 |

The Lacuna |

Barbara Kingsolver |

2009 |

3.79 |

1.178 |

|

175 |

The First Fifteen Lives of Harry August |

Claire North |

2014 |

4.04 |

1.171 |

|

176 |

Going Bovine |

Libba Bray |

2009 |

3.65 |

1.170 |

|

177 |

In One Person |

John Irving |

2012 |

3.67 |

1.170 |

|

178 |

The Elegance of the Hedgehog |

Muriel Barbery |

2006 |

3.76 |

1.168 |

|

179 |

Dear Life |

Alice Munro |

2012 |

3.75 |

1.167 |

|

180 |

Challenger Deep |

Neal Shusterman |

2015 |

4.14 |

1.157 |

|

181 |

Swing Time |

Zadie Smith |

2016 |

3.56 |

1.157 |

|

182 |

Less |

Andrew Sean Greer |

2017 |

3.71 |

1.157 |

|

183 |

Paddle Your Own Canoe: One Man’s Fundamentals for Delicious Living |

Nick Offerman |

2013 |

3.68 |

1.152 |

|

184 |

Er ist wieder da |

Timur Vermes |

2012 |

3.44 |

1.147 |

|

185 |

Seveneves |

Neal Stephenson |

2015 |

3.99 |

1.144 |

|

186 |

Fleishman Is in Trouble |

Taffy Brodesser-Akner |

2019 |

3.77 |

1.143 |

|

187 |

Special Topics in Calamity Physics |

Marisha Pessl |

2006 |

3.71 |

1.140 |

|

188 |

Blackout (All Clear, #1) |

Connie Willis |

2010 |

3.83 |

1.132 |

|

189 |

The Emperor’s Children |

Claire Messud |

2006 |

2.95 |

1.126 |

|

190 |

Arcadia |

Lauren Groff |

2012 |

3.67 |

1.124 |

|

191 |

Tinkers |

Paul Harding |

2008 |

3.38 |

1.122 |

|

192 |

Afterworlds (Afterworlds #1) |

Scott Westerfeld |

2014 |

3.73 |

1.120 |

|

193 |

Tangerine |

Christine Mangan |

2018 |

3.21 |

1.118 |

|

194 |

Pygmy |

Chuck Palahniuk |

2009 |

2.97 |

1.117 |

|

195 |

The Invisible Library (The Invisible Library, #1) |

Genevieve Cogman |

2015 |

3.73 |

1.114 |

|

196 |

Swann’s Way (In Search of Lost Time, #1) |

Marcel Proust |

1913 |

4.14 |

1.108 |

|

197 |

The Road to Little Dribbling: Adventures of an American in Britain |

Bill Bryson |

2015 |

3.71 |

1.106 |

|

198 |

The Summer Before the War |

Helen Simonson |

2016 |

3.78 |

1.099 |

|

199 |

Sweetbitter |

Stephanie Danler |

2016 |

3.28 |

1.091 |

|

200 |

Women Who Run With the Wolves: Myths and Stories of the Wild Woman Archetype |

Clarissa Pinkola Estés |

1992 |

4.15 |

1.087 |

|

201 |

House of Leaves |

Mark Z. Danielewski |

2000 |

4.10 |

1.086 |

|

202 |

Possession |

A. S. Byatt |

1990 |

3.89 |

1.079 |

|

203 |

Fear: Trump in the White House |

Bob Woodward |

2018 |

3.91 |

1.078 |

|

204 |

The Girls of Atomic City: The Untold Story of the Women Who Helped Win World War II |

Denise Kiernan |

2013 |

3.68 |

1.068 |

|

205 |

The Narrow Road to the Deep North |

Richard Flanagan |

2013 |

4.01 |

1.066 |

|

206 |

Red Mars (Mars Trilogy, #1) |

Kim Stanley Robinson |

1992 |

3.85 |

1.054 |

|

207 |

Five Days at Memorial: Life and Death in a Storm-Ravaged Hospital |

Sheri Fink |

2013 |

3.90 |

1.053 |

|

208 |

An Instance of the Fingerpost |

Iain Pears |

1997 |

3.94 |

1.053 |

|

209 |

My Brilliant Friend (The Neapolitan Novels, #1) |

2011 |

3.94 |

1.048 |

|

|

210 |

12 Rules for Life: An Antidote to Chaos |

Jordan B. Peterson |

2018 |

3.96 |

1.040 |

|

211 |

Transcription |

Kate Atkinson |

2018 |

3.50 |

1.034 |

|

212 |

One More Thing: Stories and Other Stories |

B. J. Novak |

2014 |

3.67 |

1.027 |

|

213 |

The Library Book |

Susan Orlean |

2018 |

3.95 |

1.018 |

|

214 |

The 7½ Deaths of Evelyn Hardcastle |

Stuart Turton |

2018 |

3.92 |

1.017 |

|

215 |

1Q84 |

Haruki Murakami |

2009 |

3.92 |

1.016 |

|

216 |

The Glass Bead Game |

Hermann Hesse |

1943 |

4.11 |

1.011 |

|

217 |

The Book of Strange New Things |

Michel Faber |

2014 |

3.66 |

1.008 |

|

218 |

The Terror |

Dan Simmons |

2007 |

4.06 |

1.008 |

|

219 |

Sea of Poppies (Ibis Trilogy, #1) |

Amitav Ghosh |

2008 |

3.95 |

1.003 |

|

220 |

Blindsight (Firefall, #1) |

Peter Watts |

2006 |

4.01 |

1.002 |

|

221 |

The Valley of Amazement |

Amy Tan |

2013 |

3.63 |

0.997 |

|

222 |

Code Name Verity |

Elizabeth E. Wein |

2012 |

4.05 |

0.997 |

|

223 |

The Slap |

Christos Tsiolkas |

2008 |

3.19 |

0.995 |

|

224 |

The Goblin Emperor (The Goblin Emperor, #1) |

Katherine Addison |

2014 |

4.04 |

0.995 |

|

225 |

Purity |

Jonathan Franzen |

2015 |

3.61 |

0.994 |

|

226 |

Midnight’s Children |

Salman Rushdie |

1981 |

3.98 |

0.990 |

|

227 |

The Mars Room |

Rachel Kushner |

2018 |

3.49 |

0.986 |

|

228 |

Inherent Vice |

Thomas Pynchon |

2009 |

3.74 |

0.983 |

|

229 |

A God in Ruins (Todd Family, #2) |

Kate Atkinson |

2015 |

3.94 |

0.981 |

|

230 |

Mrs. Lincoln’s Dressmaker |

Jennifer Chiaverini |

2013 |

3.46 |

0.975 |

|

231 |

Boy, Snow, Bird |

Helen Oyeyemi |

2013 |

3.35 |

0.972 |

|

232 |

Cryptonomicon |

Neal Stephenson |

1999 |

4.25 |

0.964 |

|

233 |

The Witch Elm |

Tana French |

2018 |

3.54 |

0.963 |

|

234 |

Beauty Queens |

Libba Bray |

2011 |

3.62 |

0.959 |

|

235 |

To Say Nothing of the Dog (Oxford Time Travel, #2) |

Connie Willis |

1998 |

4.13 |

0.957 |

|

236 |

The Design of Everyday Things |

Donald A. Norman |

1988 |

4.18 |

0.956 |

|

237 |

Life After Life (Todd Family, #1) |

Kate Atkinson |

2013 |

3.76 |

0.954 |

|

238 |

The Highly Sensitive Person: How to Thrive When the World Overwhelms You |

Elaine N. Aron |

1996 |

3.87 |

0.949 |

|

239 |

Blood Meridian, or the Evening Redness in the West |

Cormac McCarthy |

1985 |

4.17 |

0.944 |

|

240 |

Fire & Blood (A Targaryen History, #1) |

George R. R. Martin |

2018 |

3.89 |

0.940 |

|

241 |

The Cat’s Table |

Michael Ondaatje |

2011 |

3.60 |

0.936 |

|

242 |

Embassytown |

China Miéville |

2011 |

3.88 |

0.935 |

|

243 |

Mein Kampf |

Adolf Hitler |

1925 |

3.16 |

0.926 |

|

244 |

Cloud Atlas |

David Mitchell |

2004 |

4.01 |

0.924 |

|

245 |

The Known World |

Edward P. Jones |

2003 |

3.83 |

0.917 |

|

246 |

The Tiger’s Wife |

Téa Obreht |

2011 |

3.39 |

0.911 |

|

247 |

Furiously Happy: A Funny Book About Horrible Things |

Jenny Lawson |

2015 |

3.92 |

0.911 |

|

248 |

A Place for Us |

Fatima Farheen Mirza |

2018 |

4.15 |

0.910 |

|

249 |

Pure (Pure, #1) |

Julianna Baggott |

2012 |

3.74 |

0.907 |

|

250 |

The Hero With a Thousand Faces |

Joseph Campbell |

1949 |

4.20 |

0.907 |

|

251 |

SPQR: A History of Ancient Rome |

Mary Beard |

2015 |

4.07 |

0.905 |

|

252 |

The Magic Mountain |

Thomas Mann |

1924 |

4.14 |

0.903 |

|

253 |

A Tale for the Time Being |

Ruth Ozeki |

2013 |

4.00 |

0.900 |

|

254 |

The Rook (The Checquy Files, #1) |

Daniel O’Malley |

2012 |

4.12 |

0.896 |

|

255 |

Anathem |

Neal Stephenson |

2008 |

4.18 |

0.893 |

|

256 |

Girl Waits with Gun (Kopp Sisters, #1) |

Amy Stewart |

2015 |

3.76 |

0.892 |

|

257 |

The Gene: An Intimate History |

Siddhartha Mukherjee |

2016 |

4.37 |

0.892 |

|

258 |

Today Will Be Different |

Maria Semple |

2016 |

3.18 |

0.892 |

|

259 |

The Righteous Mind: Why Good People Are Divided by Politics and Religion |

Jonathan Haidt |

2012 |

4.22 |

0.888 |

|

260 |

Aftermath (Star Wars: Aftermath, #1) |

Chuck Wendig |

2015 |

3.21 |

0.887 |

|

261 |

Zen and the Art of Motorcycle Maintenance: An Inquiry Into Values |

Robert M. Pirsig |

1974 |

3.77 |

0.884 |

|

262 |

Lily and the Octopus |

Steven Rowley |

2016 |

3.71 |

0.883 |

|

263 |

Better Than Before: Mastering the Habits of Our Everyday Lives |

Gretchen Rubin |

2015 |

3.84 |

0.881 |

|

264 |

The Nix |

Nathan Hill |

2016 |

4.09 |

0.879 |

|

265 |

The Magicians (The Magicians, #1) |

Lev Grossman |

2009 |

3.51 |

0.879 |

|

266 |

Hopscotch |

Julio Cortázar |

1963 |

4.24 |

0.876 |

|

267 |

The Waves |

Virginia Woolf |

1931 |

4.14 |

0.874 |

|

268 |

Gardens of the Moon (Malazan Book of the Fallen, #1) |

Steven Erikson |

1999 |

3.88 |

0.872 |

|

269 |

Children of Blood and Bone (Legacy of Orïsha, #1) |

Tomi Adeyemi |

2018 |

4.16 |

0.870 |

|

270 |

The Orphan Master’s Son |

Adam Johnson |

2012 |

4.07 |

0.867 |

|

271 |

Salt: A World History |

Mark Kurlansky |

2002 |

3.74 |

0.867 |

|

272 |

Kushiel’s Dart (Phèdre’s Trilogy, #1) |

Jacqueline Carey |

2001 |

4.03 |

0.863 |

|

273 |

Everyone Brave is Forgiven |

Chris Cleave |

2016 |

3.78 |

0.861 |

|

274 |

The Wolf of Wall Street (The Wolf of Wall Street, #1) |

Jordan Belfort |

2007 |

3.71 |

0.857 |

|

275 |

If on a Winter’s Night a Traveler |

Italo Calvino |

1979 |

4.05 |

0.856 |

|

276 |

Feed (Newsflesh Trilogy, #1) |

Mira Grant |

2010 |

3.85 |

0.855 |

|

277 |

Going Clear: Scientology, Hollywood, and the Prison of Belief |

Lawrence Wright |

2013 |

4.04 |

0.854 |

|

278 |

Why We Broke Up |

Daniel Handler |

2011 |

3.48 |

0.852 |

|

279 |

The Other Einstein |

Marie Benedict |

2016 |

3.74 |

0.852 |

|

280 |

Foucault’s Pendulum |

Umberto Eco |

1988 |

3.89 |

0.851 |

|

281 |

Sweet Tooth |

Ian McEwan |

2012 |

3.41 |

0.848 |

|

282 |

Longbourn |

Jo Baker |

2013 |

3.63 |

0.847 |

|

283 |

A Little Life |

Hanya Yanagihara |

2015 |

4.30 |

0.846 |

|

284 |

Dorothy Must Die (Dorothy Must Die, #1) |

Danielle Paige |

2014 |

3.83 |

0.844 |

|

285 |

The Interestings |

Meg Wolitzer |

2013 |

3.57 |

0.844 |

|

286 |

The Dante Club (The Dante Club #1) |

Matthew Pearl |

2003 |

3.38 |

0.831 |

|

287 |

The Clockmaker’s Daughter |

Kate Morton |

2018 |

3.73 |

0.831 |

|

288 |

The Happiness Project |

Gretchen Rubin |

2009 |

3.61 |

0.827 |

|

289 |

The Female Persuasion |

Meg Wolitzer |

2018 |

3.60 |

0.827 |

|

290 |

The Reality Dysfunction (Night’s Dawn, #1) |

Peter F. Hamilton |

1996 |

4.14 |

0.824 |

|

291 |

How to Change Your Mind: What the New Science of Psychedelics Teaches Us About Consciousness, Dying, Addiction, Depression, and Transcendence |

Michael Pollan |

2018 |

4.28 |

0.821 |

|

292 |

The Hare With Amber Eyes: A Family’s Century of Art and Loss |

Edmund de Waal |

2009 |

3.87 |

0.820 |

|

293 |

Eileen |

Ottessa Moshfegh |

2015 |

3.49 |

0.819 |

|

294 |

Pride and Prejudice and Zombies (Pride and Prejudice and Zombies, #1) |

Seth Grahame-Smith |

2009 |

3.29 |

0.817 |

|

295 |

The Marriage Plot |

Jeffrey Eugenides |

2011 |

3.44 |

0.814 |

|

296 |

The World Without Us |

Alan Weisman |

2007 |

3.80 |

0.814 |

|

297 |

The Fifth Season (The Broken Earth, #1) |

N. K. Jemisin |

2015 |

4.31 |

0.813 |

|

298 |

The Shining Girls |

Lauren Beukes |

2013 |

3.50 |

0.812 |

|

299 |

The Passage (The Passage, #1) |

Justin Cronin |

2010 |

4.04 |

0.809 |

|

300 |

Shades of Grey (Shades of Grey, #1) |

Jasper Fforde |

2009 |

4.14 |

0.801 |

|

301 |

The Fireman |

Joe Hill |

2016 |

3.89 |

0.799 |

|

302 |

Flight Behavior |

Barbara Kingsolver |

2012 |

3.78 |

0.798 |

|

303 |

Salvage the Bones |

Jesmyn Ward |

2011 |

3.92 |

0.797 |

|

304 |

Her Body and Other Parties |

Carmen Maria Machado |

2017 |

3.91 |

0.794 |

|

305 |

The Dog Stars |

Peter Heller |

2012 |

3.92 |

0.793 |

|

306 |

Bad Feminist |

Roxane Gay |

2014 |

3.94 |

0.784 |

|

307 |

Mythos: The Greek Myths Retold |

Stephen Fry |

2017 |

4.24 |

0.783 |

|

308 |

And I Darken (The Conqueror’s Saga, #1) |

Kiersten White |

2016 |

3.87 |

0.782 |

|

309 |

The Inheritance of Loss |

Kiran Desai |

2005 |

3.42 |

0.781 |

|

310 |

Reading Lolita in Tehran: A Memoir in Books |

Azar Nafisi |

2003 |

3.60 |

0.781 |

|

311 |

Doomsday Book (Oxford Time Travel, #1) |

Connie Willis |

1992 |

4.03 |

0.768 |

|

312 |

Master and Commander (Aubrey & Maturin, #1) |

Patrick O’Brian |

1970 |

4.10 |

0.766 |

|

313 |

The Thousand Autumns of Jacob de Zoet |

David Mitchell |

2010 |

4.03 |

0.759 |

|

314 |

A Suitable Boy (A Suitable Boy, #1) |

Vikram Seth |

1993 |

4.11 |

0.749 |

|

315 |

The City & the City |

China Miéville |

2009 |

3.91 |

0.747 |

|

316 |

Death Comes to Pemberley |

P. D. James |

2011 |

3.25 |

0.746 |

|

317 |

Labyrinth (Languedoc, #1) |

Kate Mosse |

2005 |

3.57 |

0.743 |

|

318 |

The Disappearing Spoon: And Other True Tales of Madness, Love, and the History of the World from the Periodic Table of the Elements |

Sam Kean |

2010 |

3.91 |

0.737 |

|

319 |

The Bone Season (The Bone Season, #1) |

Samantha Shannon |

2013 |

3.76 |

0.737 |

|

320 |

The Dovekeepers |

Alice Hoffman |

2011 |

4.04 |

0.735 |

|

321 |

The Signal and the Noise: Why So Many Predictions Fail—But Some Don’t |

Nate Silver |

2012 |

3.98 |

0.734 |

|

322 |

The Book of Speculation |

Erika Swyler |

2015 |

3.60 |

0.732 |

|

323 |

Housekeeping |

Marilynne Robinson |

1980 |

3.82 |

0.727 |

|

324 |

The Long Earth (The Long Earth, #1) |

Terry Pratchett |

2012 |

3.76 |

0.725 |

|

325 |

Consider Phlebas |

Iain M. Banks |

1987 |

3.86 |

0.724 |

|

326 |

Trigger Warning: Short Fictions and Disturbances |

Neil Gaiman |

2015 |

3.93 |

0.724 |

|

327 |

The Leavers |

Lisa Ko |

2017 |

3.87 |

0.722 |

|

328 |

How to Build a Girl |

Caitlin Moran |

2014 |

3.71 |

0.719 |

|

329 |

Sacré Bleu |

Christopher Moore |

2012 |

3.78 |

0.715 |

|

330 |

Altered Carbon (Takeshi Kovacs, #1) |

Richard K. Morgan |

2002 |

4.05 |

0.709 |

|

331 |

The Three-Body Problem (Remembrance of Earth’s Past #1) |

Liu Cixin |

2008 |

4.06 |

0.704 |

|

332 |

White Teeth |

Zadie Smith |

2000 |

3.76 |

0.700 |

|

333 |

Manhattan Beach |

Jennifer Egan |

2017 |

3.62 |

0.699 |

|

334 |

Naked Lunch |

William S. Burroughs |

1959 |

3.46 |

0.699 |

|

335 |

The Hangman’s Daughter (The Hangman’s Daughter, #1) |

Oliver Pötzsch |

2008 |

3.73 |

0.693 |

|

336 |

Reamde |

Neal Stephenson |

2011 |

3.97 |

0.691 |

|

337 |

On the Jellicoe Road |

Melina Marchetta |

2006 |

4.13 |

0.690 |

|

338 |

Serena |

Ron Rash |

2008 |

3.53 |

0.687 |

|

339 |

A Gentleman in Moscow |

Amor Towles |

2016 |

4.36 |

0.682 |

|

340 |

The Power |

Naomi Alderman |

2016 |

3.84 |

0.682 |

|

341 |

My Grandmother Asked Me to Tell You She’s Sorry |

Fredrik Backman |

2013 |

4.05 |

0.678 |

|

342 |

The Monuments Men: Allied Heroes, Nazi Thieves, and the Greatest Treasure Hunt in History |

Robert M. Edsel |

2009 |

3.76 |

0.673 |

|

343 |

Finnikin of the Rock (Lumatere Chronicles, #1) |

Melina Marchetta |

2008 |

3.91 |

0.669 |

|

344 |

Authority (Southern Reach, #2) |

Jeff VanderMeer |

2014 |

3.51 |

0.667 |

|

345 |

The End of Your Life Book Club |

Will Schwalbe |

2012 |

3.81 |

0.664 |

|

346 |

The Golem and the Jinni (The Golem and the Jinni, #1) |

Helene Wecker |

2013 |

4.11 |

0.664 |

|

347 |

Rabbit, Run |

John Updike |

1960 |

3.58 |

0.661 |

|

348 |

Present Over Perfect: Leaving Behind Frantic for a Simpler, More Soulful Way of Living |

Shauna Niequist |

2016 |

3.85 |

0.660 |

|

349 |

The Crimson Petal and the White |

Michel Faber |

2002 |

3.88 |

0.646 |

|

350 |

The Bear and the Nightingale (Winternight Trilogy, #1) |

Katherine Arden |

2017 |

4.12 |

0.643 |

|

351 |

Lord Foul’s Bane (The Chronicles of Thomas Covenant the Unbeliever, #1) |

Stephen R. Donaldson |

1977 |

3.72 |

0.640 |

|

352 |

Flatland: A Romance of Many Dimensions |

Edwin A. Abbott |

1884 |

3.82 |

0.639 |

|

353 |

The Hundred Thousand Kingdoms (Inheritance, #1) |

N. K. Jemisin |

2010 |

3.85 |

0.637 |

|

354 |

A Spool of Blue Thread |

Anne Tyler |

2015 |

3.41 |

0.636 |

|

355 |

Eligible (The Austen Project, #4) |

Curtis Sittenfeld |

2016 |

3.61 |

0.635 |

|

356 |

The Chemist |

Stephenie Meyer |

2016 |

3.71 |

0.634 |

|

357 |

Thinking, Fast and Slow |

Daniel Kahneman |

2011 |

4.14 |

0.632 |

|

358 |

Tenth of December |

George Saunders |

2013 |

3.97 |

0.632 |

|

359 |

The Sword of Shannara (The Original Shannara Trilogy, #1) |

Terry Brooks |

1977 |

3.76 |

0.632 |

|

360 |

Incarceron (Incarceron, #1) |

Catherine Fisher |

2007 |

3.64 |

0.630 |

|

361 |

Metro 2033 (Metro, #1) |

Dmitry Glukhovsky |

2005 |

3.99 |

0.629 |

|

362 |

Tropic of Cancer |

Henry Miller |

1934 |

3.68 |

0.627 |

|

363 |

Consider the Lobster and Other Essays |

David Foster Wallace |

2005 |

4.24 |

0.624 |

|

364 |

Gulp: Adventures on the Alimentary Canal |

Mary Roach |

2013 |

3.93 |

0.624 |

|

365 |

The Little Stranger |

Sarah Waters |

2009 |

3.54 |

0.621 |

|

366 |

A Confederacy of Dunces |

John Kennedy Toole |

1980 |

3.88 |

0.614 |

|

367 |

The Hundred-Year-Old Man Who Climbed Out of the Window and Disappeared (The Hundred-Year-Old Man, #1) |

Jonas Jonasson |

2009 |

3.83 |

0.613 |

|

368 |

The Left Hand of Darkness (Hainish Cycle #4) |

Ursula K. Le Guin |

1969 |

4.06 |

0.613 |

|

369 |

Shantaram |

Gregory David Roberts |

2003 |

4.27 |

0.613 |

|

370 |

The Idiot |

Elif Batuman |

2017 |

3.63 |

0.606 |

|

371 |

The Idiot |

Fyodor Dostoyevsky |

1869 |

4.18 |

0.606 |

|

372 |

At Home: A Short History of Private Life |

Bill Bryson |

2010 |

3.97 |

0.606 |

|

373 |

Let’s Pretend This Never Happened: A Mostly True Memoir |

Jenny Lawson |

2012 |

3.90 |

0.601 |

|

374 |

The Heart Goes Last |

Margaret Atwood |

2015 |

3.37 |

0.601 |

|

375 |

The Postmistress |

Sarah Blake |

2009 |

3.33 |

0.595 |

|

376 |

Cleopatra: A Life |

Stacy Schiff |

2010 |

3.66 |

0.594 |

|

377 |

Red Sparrow (Red Sparrow Trilogy, #1) |

Jason Matthews |

2013 |

3.95 |

0.594 |

|

378 |

Etiquette & Espionage (Finishing School, #1) |

Gail Carriger |

2013 |

3.81 |

0.592 |

|

379 |

How to Be a Woman |

Caitlin Moran |

2011 |

3.73 |

0.589 |

|

380 |

The Royal We |

Heather Cocks |

2015 |

3.80 |

0.589 |

|

381 |

The Diviners (The Diviners, #1) |

Libba Bray |

2012 |

3.97 |

0.588 |

|

382 |

The Museum of Extraordinary Things |

Alice Hoffman |

2014 |

3.74 |

0.586 |

|

383 |

The Black Company (The Chronicles of the Black Company, #1) |

Glen Cook |

1984 |

3.94 |

0.583 |

|

384 |

Astrophysics for People in a Hurry |

Neil deGrasse Tyson |

2017 |

4.10 |

0.583 |

|

385 |

The Long Way to a Small, Angry Planet (Wayfarers, #1) |

Becky Chambers |

2014 |

4.16 |

0.582 |

|

386 |

The Wedding Date (The Wedding Date, #1) |

Jasmine Guillory |

2018 |

3.54 |

0.581 |

|

387 |

Alexander Hamilton |

Ron Chernow |

2004 |

4.23 |

0.570 |

|

388 |

The Atlantis Gene (The Origin Mystery, #1) |

A. G. Riddle |

2013 |

3.72 |

0.566 |

|

389 |

American Pastoral (The American Trilogy, #1) |

Philip Roth |

1997 |

3.92 |

0.566 |

|

390 |

Truthwitch (The Witchlands, #1) |

Susan Dennard |

2016 |

3.89 |

0.565 |

|

391 |

Behind the Beautiful Forevers: Life, Death, and Hope in a Mumbai Undercity |

Katherine Boo |

2012 |

3.98 |

0.564 |

|

392 |

Night Film |

Marisha Pessl |

2013 |

3.78 |

0.564 |

|

393 |

The Lies of Locke Lamora (Gentleman Bastard, #1) |

Scott Lynch |

2006 |

4.30 |

0.563 |

|

394 |

A Visit from the Goon Squad |

Jennifer Egan |

2010 |

3.66 |

0.557 |

|

395 |

Suite Française |

Irène Némirovsky |

2004 |

3.84 |

0.555 |

|

396 |

The Twelve Tribes of Hattie |

Ayana Mathis |

2012 |

3.48 |

0.554 |

|

397 |

The Warmth of Other Suns: The Epic Story of America’s Great Migration |

Isabel Wilkerson |

2010 |

4.36 |

0.554 |

|

398 |

The Weird Sisters |

Eleanor Brown |

2011 |

3.36 |

0.553 |

|

399 |

The Aeronaut’s Windlass (The Cinder Spires, #1) |

Jim Butcher |

2015 |

4.18 |

0.549 |

|

400 |

The Eyre Affair (Thursday Next, #1) |

Jasper Fforde |

2001 |

3.91 |

0.542 |

|

401 |

Tigana |

Guy Gavriel Kay |

1990 |

4.10 |

0.542 |

|

402 |

Armada |

Ernest Cline |

2015 |

3.52 |

0.534 |

|

403 |

Red Rising (Red Rising, #1) |

Pierce Brown |

2014 |

4.27 |

0.526 |

|

404 |

The Burgess Boys |

Elizabeth Strout |

2013 |

3.57 |

0.523 |

|

405 |

The Coldest Girl in Coldtown |

Holly Black |

2013 |

3.85 |

0.522 |

|

406 |

The Knife of Never Letting Go (Chaos Walking, #1) |

Patrick Ness |

2008 |

3.98 |

0.520 |

|

407 |

The Story of Edgar Sawtelle |

David Wroblewski |

2008 |

3.62 |

0.520 |

|

408 |

The Emperor of All Maladies: A Biography of Cancer |

Siddhartha Mukherjee |

2010 |

4.31 |

0.517 |

|

409 |

Geek Love |

Katherine Dunn |

1989 |

3.97 |

0.517 |

|

410 |

The Particular Sadness of Lemon Cake |

Aimee Bender |

2010 |

3.21 |

0.515 |

|

411 |

The Leftovers |

Tom Perrotta |

2011 |

3.40 |

0.511 |

|

412 |

The Black Swan: The Impact of the Highly Improbable |

Nassim Nicholas Taleb |

2007 |

3.92 |

0.511 |

|

413 |

Don Quixote |

Miguel de Cervantes Saavedra |

1615 |

3.87 |

0.508 |

|

414 |

A Heartbreaking Work of Staggering Genius |

Dave Eggers |

2000 |

3.68 |

0.505 |

|

415 |

Damned (Damned, #1) |

Chuck Palahniuk |

2011 |

3.38 |

0.503 |

|

416 |

My Year of Rest and Relaxation |

Ottessa Moshfegh |

2018 |

3.67 |

0.496 |

|

417 |

Sleeping Beauties |

Stephen King |

2017 |

3.73 |

0.492 |

|

418 |

The Corrections |

Jonathan Franzen |

2001 |

3.79 |

0.491 |

|

419 |

The Tin Drum |

Günter Grass |

1959 |

3.96 |

0.490 |

|

420 |

The Girls |

Emma Cline |

2016 |

3.47 |

0.489 |

|

421 |

The Wonder |

Emma Donoghue |

2016 |

3.62 |

0.486 |

|

422 |

To the Lighthouse |

Virginia Woolf |

1927 |

3.77 |

0.482 |

|

423 |

Let the Great World Spin |

Colum McCann |

2009 |

3.95 |

0.475 |

|

424 |

The Heart’s Invisible Furies |

John Boyne |

2017 |

4.47 |

0.472 |

|

425 |

The Orchardist |

Amanda Coplin |

2012 |

3.77 |

0.471 |

|

426 |

Acceptance (Southern Reach, #3) |

Jeff VanderMeer |

2014 |

3.57 |

0.470 |

|

427 |

A Fire Upon the Deep (Zones of Thought, #1) |

Vernor Vinge |

1992 |

4.14 |

0.468 |

|

428 |

The Untethered Soul: The Journey Beyond Yourself |

Michael A. Singer |

2007 |

4.25 |

0.467 |

|

429 |

The Radium Girls: The Dark Story of America’s Shining Women |

Kate Moore |

2017 |

4.16 |

0.466 |

|

430 |

Three Men in a Boat (Three Men, #1) |

Jerome K. Jerome |

1889 |

3.89 |

0.464 |

|

431 |

Angle of Repose |

Wallace Stegner |

1971 |

4.27 |

0.463 |

|

432 |

The New Jim Crow: Mass Incarceration in the Age of Colorblindness |

Michelle Alexander |

2010 |

4.50 |

0.459 |

|

433 |

The Imperfectionists |

Tom Rachman |

2010 |

3.54 |

0.457 |

|

434 |

What Happened |

Hillary Rodham Clinton |

2017 |

3.97 |

0.456 |

|

435 |

The Undoing Project: A Friendship That Changed Our Minds |

Michael Lewis |

2016 |

3.99 |

0.447 |

|

436 |

A Discovery of Witches (All Souls Trilogy, #1) |

Deborah Harkness |

2011 |

4.00 |

0.445 |

|

437 |

The Gargoyle |

Andrew Davidson |

2008 |

3.96 |

0.440 |

|

438 |

Mad About the Boy (Bridget Jones, #3) |

Helen Fielding |

2013 |

3.35 |

0.440 |

|

439 |

Girl, Wash Your Face: Stop Believing the Lies about Who You Are So You Can Become Who You Were Meant to Be |

Rachel Hollis |

2018 |

3.76 |

0.439 |

|

440 |

Pale Fire |

Vladimir Nabokov |

1962 |

4.15 |

0.433 |

|

441 |