One Man’s Modus Ponens

“One man’s modus ponens is another man’s modus tollens” is a saying in Western philosophy encapsulating a common response to a logical proof, which generalizes the reductio ad absurdum and consists of rejecting a premise because the conclusion it implies is unacceptable. I explain it in more detail and provide examples and a Bayesian gloss.

A logically-valid argument which takes the form of a modus ponens may be interpreted in several ways; a major one is to interpret it as a kind of reductio ad absurdum, where by ‘proving’ a conclusion believed to be false, one might instead take it as a modus tollens which proves that one of the premises is false. This “Moorean shift” is aphorized as the snowclone, “One man’s modus ponens is another man’s modus tollens”.

The Moorean shift is a powerful counter-argument which has been deployed against many skeptical & metaphysical claims in philosophy, where often the conclusion is extremely unlikely and little evidence can be provided for the premises used in the proofs; and it is relevant to many other debates, particularly methodological ones.

When beginning syllogistic logic, 2 of the simplest ‘valid argument’ patterns we learn are the modus ponens and the modus tollens. Both are ‘logically valid’, as they use universally accepted rules of inference to proceed from given premises to a conclusion, and they are equivalent via the contrapositive.

Diagrammed respectively, modus ponens:

A

A → B

∴ B

and modus tollens:

A → B

¬B

∴ ¬A

These arguments are logically correct, but whether any given argument using a modus is true is an entirely different question: an argument could be wrong because it misapplies the rules of inference (and the conclusion does not actually follow), or because the premises themselves are wrong. (Logic is like pipes: a good set of pipes moves water around without letting the water within out, or letting things without in—but it only moves water around, and cannot create water out of nothing.) Certain contentions countenance neither contradiction nor conviction.1
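The validity of both patterns, and their equivalence via the contrapositive, can be checked mechanically with a brute-force truth table. The following is a minimal Python sketch; the helper names (`implies`, `is_valid`) are illustrative and not taken from any logic library, and the invalid form ‘affirming the consequent’ is included only as a contrast case.

```python
from itertools import product

def implies(a: bool, b: bool) -> bool:
    """Material conditional: false only when a is true and b is false."""
    return (not a) or b

def is_valid(premises, conclusion) -> bool:
    """An argument form is valid if no truth assignment makes
    every premise true while the conclusion is false."""
    for a, b in product([True, False], repeat=2):
        if all(p(a, b) for p in premises) and not conclusion(a, b):
            return False
    return True

# Modus ponens: A, A -> B, therefore B.
modus_ponens = is_valid(
    [lambda a, b: a, lambda a, b: implies(a, b)],
    lambda a, b: b,
)

# Modus tollens: A -> B, not B, therefore not A.
modus_tollens = is_valid(
    [lambda a, b: implies(a, b), lambda a, b: not b],
    lambda a, b: not a,
)

# Contrast case (invalid): A -> B, B, therefore A.
affirming_consequent = is_valid(
    [lambda a, b: implies(a, b), lambda a, b: b],
    lambda a, b: a,
)

# A -> B and (not B) -> (not A) agree on every assignment.
contrapositive_equivalent = all(
    implies(a, b) == implies(not b, not a)
    for a, b in product([True, False], repeat=2)
)

print(modus_ponens, modus_tollens, affirming_consequent, contrapositive_equivalent)
```

The check only establishes validity; it says nothing about whether the premises fed into either pattern are true.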

Given a modus ponens proof of something like the skeptical claim that there is no external world (solipsism), one has several options. One can simply reject it out of hand while refusing to discuss it further; one can attempt to find a flaw in the application of inference rules which renders the argument a non sequitur (which usually isn’t there2); or one can struggle to find specific strong evidence against any of the premises (which can be extremely difficult for abstract points). Alternatively, one can flip the argument on its head: given that one knows there is an external world (solipsism is not true), by modus tollens, at least one of the skeptical argument’s premises about knowledge must then be false.3 As a proof is merely truth-preserving machinery, it cannot create outputs which are more true than its inputs (GIGO); if the output is clearly false, then at least one input must be false. This response is closely related to the Duhem-Quine thesis, in that it puts attention on the whole argument, and it can be considered a flaw in uses of proofs by contradiction or the reductio ad absurdum: how does one know that the conclusion really is absurd, and that one should reject one of the premises instead of perhaps “biting the bullet”? People may disagree greatly about something being ‘absurd’, and presenting an argument might ‘backfire’.4
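The ‘truth-preserving machinery’ point has a simple probabilistic reading. As a sketch, assume degrees of belief obey the ordinary probability axioms, and write $P_1, \dots, P_n$ for the premises and $C$ for the conclusion of a valid argument (these symbols are introduced here purely for illustration). Validity means the premises jointly entail the conclusion, so:

```latex
\Pr(P_1 \wedge \dots \wedge P_n) \le \Pr(C)
\quad\Longrightarrow\quad
\Pr(\neg C) \le \Pr(\neg P_1 \vee \dots \vee \neg P_n) \le \sum_{i=1}^{n} \Pr(\neg P_i)
```

The premises taken together can never be more probable than the conclusion, and if one is nearly certain that $C$ is false, then at least one premise must be false with probability at least $\Pr(\neg C)/n$. The inequality does not say which premise to give up.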

Probably the most famous philosopher to use this specific argument, and the reason it is called a “Moorean shift”, is George Edward Moore’s “Here is one hand” argument against various skeptical arguments (“Proof of an External World”/“A Defence of Common Sense”): as WP summarizes it,

Moore argues against idealism and skepticism toward the external world on the grounds that skeptics could not give reasons to accept their metaphysical premises that were more plausible to him than the reasons he had to accept the common sense claims about our knowledge of the world that skeptics and idealists must deny. In other words, he is more willing to believe that he has a hand than to believe the premises of what he deems “a strange argument in a university classroom.”

An Islamic version goes (Imam al-Haddad, The Sublime Treasures):

Nothing can be soundly understood

If daylight itself needs proof.

Lucretius, considering the skeptic denying truth with his arguments, asks what would make us believe any arguments more than our own senses (William Ellery Leonard translation)?

Thou’lt find

That from the senses first hath been create

Concept of truth, nor can the senses be

Rebutted. For criterion must be found

Worthy of greater trust, which shall defeat

Through own authority the false by true;

What, then, than these our senses must there be

Worthy a greater trust? Shall reason, sprung

From some false sense, prevail to contradict

Those senses, sprung as reason wholly is

From out of the senses?—For lest these be true,

All reason also then is falsified.5

R. Scott Bakker describes a similar crisis of faith in his early philosophizing, ascribing the argument to a ‘nihilist’, who criticizes Bakker’s Heideggerian defense of mental meaning (rather than the eliminativism Bakker had been struggling to resist), arguing that trying to rebut scientific determinism using Continental philosophy was “kind of like using Ted Bundy’s testimony to convict Mother Theresa?”

Bryan Caplan described the conflict of skeptical arguments with “common sense” & burdens of proof this way:

Hasn’t common sense been wrong before? Of course. But how do people show that a common sense view is wrong? By demonstrating a conflict with other views even more firmly grounded in common sense. The strongest scientific evidence can always be rejected if you’re willing to say, “Our senses deceive us” or “Memory is never reliable” or “All the scientists have conspired to trick us.” The only problem with these foolproof intellectual defenses is… that… they’re… absurd.

!['Will You Fight? Or Will You Perish Like a Dog?' Mickey Mouse meme, philosophy of mind/epistemology version (by rulesofthirds http://rulesofthirds.tumblr.com/post/114417295600 ). Transcript: DONALD DUCK: 'Everything that we know and love is reducible to the absurd acts of chemicals, and there is therefore no intrinsic value in this material universe.' MICKEY MOUSE [apparently grabbing DONALD DUCK by the shirt]: '*Hypocrite that you are*, for you trust the chemicals in your brain to tell you they are chemicals. All knowledge is ultimately based on that which we cannot prove. Will you fight? Or will you perish like a dog?' On materialism.](/doc/philosophy/mind/2015-03-23-rulesofthirds-mickeymousememe-perishlikeadog.jpg)

On materialism.

Since everything we learn about ‘logic’ or ‘metaphysics’ or ‘necessity’ itself derives from experience, it is difficult to see how one could ever be more confident in the abstract claims and arguments which make up a disproof of the external world (or similar claims like the Eleatic disproofs of time/motion/change) than in the premise that the external world exists. If someone argues that logic proves that time doesn’t exist and nothing moves or changes, what can one really say to such logic except to shrug and walk away? (This has an easy interpretation, in what one might call Bayesian informal logic, as reflecting different prior probabilities in a Bayesian network.)

“Moorean shift” is not the most memorable phrase6, and at some point someone coined the maxim “One man’s modus ponens is another man’s modus tollens”, which is more self-explanatory and has entered wider circulation. The maxim version is useful because it reminds us of the ambiguity of any given argument, and that for productive discussion, it is not enough to simply produce a logically valid argument: we must also consider to what extent other people would agree with the premises.

This point isn’t always appreciated: when you have 2 contradicting claims or arguments, at most 1 can be correct, but the contradiction doesn’t tell you which one. You need to step outside the argument and find additional data or perspectives. From Gary Drescher’s Good and Real: Demystifying Paradoxes from Physics to Ethics:

A paradox arises when two seemingly airtight arguments lead to contradictory conclusions—conclusions that cannot possibly both be true. It’s similar to adding a set of numbers in a two-dimensional array and getting different answers depending on whether you sum up the rows first or the columns. Since the correct total must be the same either way, the difference shows that an error must have been made in at least one of the two sets of calculations. But it remains to discover at which step (or steps) an erroneous calculation occurred in either or both of the running sums. There are two ways to rebut an argument. We might call them ‘countering’ and ‘invalidating’.

To counter an argument is to provide another argument that establishes the opposite conclusion.

To invalidate an argument, we show that there is some step in that argument that simply does not follow from what precedes it (or we show that the argument’s premises—the initial steps—are themselves false).

If an argument starts with true premises, and if every step in the argument does follow, then the argument’s conclusion must be true. However, invalidating an argument—identifying an incorrect step somewhere-does not show that the argument’s conclusion must be false. Rather, the invalidation merely removes that argument itself as a reason to think the conclusion true; the conclusion might still be true for other reasons. Therefore, to firmly rebut an argument whose conclusion is false, we must both invalidate the argument and also present a counterargument for the opposite conclusion.

In the case of a paradox, invalidating is especially important. Whichever of the contradictory conclusions is incorrect, we’ve already got an argument to counter it—that’s what makes the matter a paradox in the first place! Piling on additional counterarguments may (or may not) lead to helpful insights, but the counterarguments themselves cannot suffice to resolve the paradox. What we must also do is invalidate the argument for the false conclusion-that is, we must show how that argument contains one or more steps that do not follow.

Failing to recognize the need for invalidation can lead to frustratingly circular exchanges between proponents of the conflicting positions. One side responds to the other’s argument with a counterargument, thinking it a sufficient rebuttal. The other side responds with a counter-counterargument—perhaps even a repetition of the original argument—thinking it an adequate rebuttal of the rebuttal. This cycle may persist indefinitely. With due attention to the need to invalidate as well as counter, we can interrupt the cycle and achieve a more productive discussion.

The danger of the Moorean shift is that it becomes a license for fanaticism; as Thomas Nagel (“A Philosopher Defends Religion”) describes Alvin Plantinga’s attempt to save religion from falsification:

An atheist familiar with biology and medicine has no reason to believe the biblical story of the resurrection. But a Christian who believes it by faith should not, according to Plantinga, be dissuaded by general biological evidence. Plantinga compares the difference in justified beliefs to a case where you are accused of a crime on the basis of very convincing evidence, but you know that you didn’t do it. For you, the immediate evidence of your memory is not defeated by the public evidence against you, even though your memory is not available to others. Likewise, the Christian’s faith in the truth of the gospels, though unavailable to the atheist, is not defeated by the secular evidence against the possibility of resurrection. Of course sometimes contrary evidence may be strong enough to persuade you that your memory is deceiving you. Something analogous can occasionally happen with beliefs based on faith, but it will typically take the form, according to Plantinga, of a change in interpretation of what the Bible means. This tradition of interpreting scripture in light of scientific knowledge goes back to Augustine, who applied it to the ‘days’ of creation. But Plantinga even suggests in a footnote that those whose faith includes, as his does not, the conviction that the biblical chronology of creation is to be taken literally can for that reason regard the evidence to the contrary as systematically misleading. One would think that this is a consequence of his epistemological views that he would hope to avoid.

Nevertheless, this is an important argument to be familiar with, as it is widely used, correct in many cases, and is at the core of many methodological discussions.

Examples

Philosophy/Ethics

a Creationist argument against evolution runs:

If a rational God is not responsible for human minds, and instead they were cobbled together by unguided evolutionary processes, we should not expect them to be trustworthy. Since our minds are generally trustworthy, though, the evolutionary worldview must not be correct.

on Jerry Fodor’s What Darwin Got Wrong: “the mad dog naturalist: Alex Rosenberg interviewed by Richard Marshall”

3AM: In the rather heated response to Jerry Fodor’s provocations about natural selection your response was one of the few that recognized that he was onto something. I want to quote you: “His modus tollens is a biologist’s and cognitive scientist’s modus ponens. Assuming his argument is valid and the right conclusion is not that Darwin’s theory is mistaken but that Jerry’s and any other non-Darwinian approach to the mind is wrong. That puts Jerry in good company, of course: Einstein’s.” I don’t know if you’d agree, but it struck me that many of the responses to Fodor’s argument got it wrong about why he was wrong (if he was). Why do you think Fodor wrong but in an interesting way?

Alex Rosenberg: When Einstein developed the “spooky action at a distance” objection to quantum mechanics in the ’30s (the EPR thought experiment), he had no idea he was actually formulating the idea of entanglement and that his objection when tested would vindicate quantum mechanics 40 or 50 years later. When Fodor argued that natural selection can’t see properties, and can’t produce organic systems, for example brains, that respond to, represent, register properties, he thought he was providing a reductio ad absurdum of Darwinian theory (the way Einstein thought he was providing a reductio ad absurdum of quantum theory). The confirmation of Bell’s inequalities theorem by D’espagnat’s experiments turned Einstein’s reductio into a modus tollens. I believe that Fodor’s attempted reductio of Darwinian theory is a modus tollens of representationalist theories of the mind, theories that accord to the wet stuff, to neural states what Searle calls original intentionality. It’s an argument for eliminativism about intentional content. So Fodor is totally wrong about Darwinian theory, but his argument shows that we Darwinians (and all the physicists if I am right that Darwin’s theory is just the 2d law in action among the macromolecules) have to go eliminativist about the brain.

Derek Parfit, discussing the question of why the universe exists rather than nothing, mentions of the fine-tuning argument that:

Of the range of possible initial conditions, fewer than one in a billion billion would have produced a Universe with the complexity that allows for life. If this claim is true, as I shall here assume, there is something that cries out to be explained. Why was one of this tiny set also the one that actually obtained? On one view, this was a mere coincidence. That is conceivable, since coincidences happen. But this view is hard to believe, since, if it were true, the chance of this coincidence occurring would be below one in a billion billion.

Others say: “The Big Bang was fine-tuned. In creating the Universe, God chose to make life possible.” Atheists may reject this answer, thinking it improbable that God exists. But this probability cannot be as low as one in a billion billion. So even atheists should admit that, of these two answers to our question, the one that invokes God is more likely to be true. This reasoning revives one of the traditional arguments for belief in God.

Leaving aside the issue of to what extent likelihoods of one in a billion billion ever arise, and how many bits of evidence it would take to justify one, William Flesch simply notes that

Parfit seems to think that the probability that God exists is greater than one in a billion billion, so that the existence of God is more likely to be true than the accidental existence of a life-supporting universe. But his stipulation that he’s assuming that the claims of current cosmology are true gives the game away. For even if you think that the odds that God exists are greater than one in a billion billion, it’s dizzyingly more probable that cosmology has it wrong. (After all, similar sorts of error are not unprecedented in the history of physics.) In fact, Parfit’s argument ought to embarrass cosmologists, not atheists. To paraphrase Parfit: cosmologists may reject this answer, thinking it improbable that their theory is wrong. But this probability cannot be as low as one in a billion billion. So even cosmologists should admit that, of these two answers to our question, the one that invokes scientific error is more likely to be true.
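Flesch’s objection amounts to adding a third hypothesis to Parfit’s two and comparing magnitudes. The following Python sketch makes the comparison explicit. Only the one-in-a-billion-billion figure comes from the quoted passages; the other two weights are made-up placeholders chosen solely to illustrate the ordering, and treating the three options as mutually exclusive with directly comparable weights is itself a simplifying assumption.

```python
def relative_plausibility(weights):
    """Normalize unnormalized weights into shares that sum to 1."""
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}

weights = {
    # From the quoted passage: "below one in a billion billion".
    "coincidence": 1e-18,
    # HYPOTHETICAL prior for a deliberately sceptical atheist; any value
    # above 1e-18 reproduces Parfit's ordering of the first two options.
    "god_fine_tuned": 1e-9,
    # HYPOTHETICAL chance that the cosmological fine-tuning claim is mistaken;
    # Flesch only asserts it is far larger than 1e-18.
    "cosmology_mistaken": 1e-3,
}

shares = relative_plausibility(weights)
ranking = sorted(shares, key=shares.get, reverse=True)

for name in ranking:
    print(f"{name}: {shares[name]:.3g}")
```

Under these placeholder numbers the ranking puts scientific error first, the theistic explanation second, and coincidence last. The ordering of the last two is Parfit’s point, and the ordering of the first two is Flesch’s.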

An example from mathematics by Timothy Gowers (“Vividness in Mathematics and Narrative”, in Circles Disturbed: The Interplay of Mathematics and Narrative), focusing on the differing cases of rational numbers vs imaginary numbers:

…a suggestion was made that proofs by contradiction are the mathematician’s version of irony. I’m not sure I agree with that: when we give a proof by contradiction, we make it very clear that we are discussing a counterfactual, so our words are intended to be taken at face value. But perhaps this is not necessary. Consider the following passage.

There are those who would believe that every polynomial equation with integer coefficients has a rational solution, a view that leads to some intriguing new ideas. For example, take the equation $x^2 - 2 = 0$. Let $p/q$ be a rational solution. Then $(p/q)^2 - 2 = 0$, from which it follows that $p^2 = 2q^2$. The highest power of 2 that divides $p^2$ is obviously an even power, since if $2^k$ is the highest power of 2 that divides $p$, then $2^{2k}$ is the highest power of 2 that divides $p^2$. Similarly, the highest power of 2 that divides $2q^2$ is an odd power, since it is greater by 1 than the highest power that divides $q^2$. Since $p^2$ and $2q^2$ are equal, there must exist a positive integer that is both even and odd. Integers with this remarkable property are quite unlike the integers we are familiar with: as such, they are surely worthy of further study.

I find that it conveys the irrationality of √2 rather forcefully. But could mathematicians afford to use this literary device? How would a reader be able to tell the difference in intent between what I have just written and the following superficially similar passage?

There are those who would believe that every polynomial equation has a solution, a view that leads to some intriguing new ideas. For example, take the equation $x^2 + 1 = 0$. Let $i$ be a solution of this equation. Then $i^2 + 1 = 0$, from which it follows that $i^2 = -1$. We know that $i$ cannot be positive, since then $i^2$ would be positive. Similarly, $i$ cannot be negative, since $i^2$ would again be positive (because the product of two negative numbers is always positive). And $i$ cannot be 0, since $0^2 = 0$. It follows that we have found a number that is not positive, not negative, and not zero. Numbers with this remarkable property are quite unlike the numbers we are familiar with: as such, they are surely worthy of further study.

Indeed, how would a reader show the difference—why do we apply modus tollens when we accept √2 must be irrational but then apply modus ponens and accept i as being real in some sense? Do we simply appeal to the utility of using i, and say with Wittgenstein, “If a contradiction were now actually found in arithmetic—that would only prove that an arithmetic with such a contradiction in it could render very good service; and it would be better for us to modify our concept of the certainty required, than to say it would really not yet have been a proper arithmetic.”7
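The parity argument in the first Gowers passage can be spot-checked numerically. This Python sketch (the function name `two_adic_valuation` and the search bound of 200 are arbitrary choices made here) computes the exponent of the highest power of 2 dividing $p^2$ and $2q^2$ and confirms that the first is always even and the second always odd, so the two numbers can never be equal. A finite search is of course only an illustration, not a substitute for the proof.

```python
def two_adic_valuation(n: int) -> int:
    """Exponent of the highest power of 2 that divides the nonzero integer n."""
    if n == 0:
        raise ValueError("valuation is undefined for 0")
    n = abs(n)
    exponent = 0
    while n % 2 == 0:
        n //= 2
        exponent += 1
    return exponent

LIMIT = 200  # arbitrary search bound for the illustration

# Pairs (p, q) violating "p^2 has an even exponent, 2q^2 has an odd exponent".
parity_violations = [
    (p, q)
    for p in range(1, LIMIT)
    for q in range(1, LIMIT)
    if two_adic_valuation(p * p) % 2 != 0
    or two_adic_valuation(2 * q * q) % 2 != 1
]

# Pairs (p, q) that would make p/q a rational square root of 2.
rational_roots = [
    (p, q)
    for p in range(1, LIMIT)
    for q in range(1, LIMIT)
    if p * p == 2 * q * q
]

print(parity_violations, rational_roots)
```

No analogous check distinguishes the second passage: nothing in the arithmetic itself says whether to reject the premise or to accept the strange new number, which is the question the passages raise.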

if Singerian or Effective Altruism arguments suggest that it is moral to give away all one’s money to save many lives, does that refute altruism in general? What about a rejection of empathy?

For example, in response to the classic demonstration of scope neglect using a dilemma of saving birds from an oil slick, a commentator wrote:

Scope insensitivity exists, and to avoid the mistakes caused by it, LWers say to shut up and multiply—to take how much you’d do for one instance of what you care about, then multiply the expected utility even when the conclusion may be counterintuitive. For example, if you’d be willing to pay $3 to have the oil scrubbed off one nearby salient bird suffering from an oil spill, you should be willing to pay more to have the oil scrubbed off >1 birds. But why not reason in the other direction? Instead of shutting up and multiplying, why not shut up and divide? Suppose you’re not willing to donate any amount of money to save thousands of faraway birds. Then it would be irrational for you to pay $3 to have the oil scrubbed off one salient bird. It’s true that it’s irrational to both be willing to pay $3 to save one bird and not be willing to pay the same or more to save more birds. But from that alone, it doesn’t follow that you should donate >$3 to save more birds.

Similarly, ‘torture vs dust specks’.

Peter Van Inwagen (“Is It Wrong Everywhere, Always, and for Anyone to Believe Anything on Insufficient Evidence?”) argues that it is morally fine to believe in things (like gods) on insufficient evidence, because if it weren’t, that would mean that many non-religious beliefs (with inadequate evidence) would be morally wrong as well.

Spencer Case, “Bearing Witness: My Journey Out of Mormonism”:

I continued to discuss my doubts with my dad and with my new bishop, Bishop Olson, both of whom admonished me to go on a mission. At that point my departure would have been imminent. I recall one phone conversation with Bishop Olson in which he inadvertently nudged me to part ways with the church. He said the fact that I was still in the church having these conversations with him, seeking the truth, was proof that I really did know that it was true. Otherwise, what was the sense in my still going to church? Why would I continue seeking? He had a point. He ended the phone call with “See you in church this Sunday.” I never went back.

if it is bad for Koreans to kill & eat dogs because dogs can suffer and are intelligent, does that mean it’s also bad to kill & eat pigs and many other animals and young human infants of dog-level or less intelligence?

Or, does that just mean we should eat dogs too?

if the existence of God/souls means artificial intelligence is impossible, then since artificial intelligence increasingly looks possible, does AI disprove gods/souls?

does the possibility of precognition refute retrocognition?

if it is impermissible to attempt to make healthier babies by genetic selection or engineering (‘eugenics’), is it then impermissible to use vaccination? What about PGD for genetic disorders, or abortion of fetuses with Down’s?

“If we consider inclusion and diversity to be a measure of societal progress, then IQ screening proposals are unethical,” says Lynn Murray of Don’t Screen Us Out, a group that campaigns against prenatal testing for Down’s syndrome. “There must be wide consultation.”

If it is impermissible to select between embryos based on their genetics, does that mean it is also impermissible to change embryo makeups by selecting between possible donors? (And what about choice of spouses & assortative mating?) “Discounts, guarantees and the search for ‘good’ genes: The booming fertility business”:

“It’s a little unsettling to be marketing characteristics as potentially positive in a future child”, said Rebecca Dresser, a bioethicist at Washington University in St. Louis and a member of the President’s Council on Bioethics under George W. Bush. “But it’s hard to think on what basis to prohibit that.” And so, Dresser said, “what we have now is prospective parents making judgments about what they think ‘good’ genes are”—decisions that are literally changing the face of the next generation.

Deep ecology/Anarcho-primitivism: Ted Kaczynski made many observations about technology & civilization that most would agree with, such as the eventual takeover of Nature (“The Unabomber Was Right”), and concluded that as Nature is more valuable than anything else, including human well-being, technology/civilization must be destroyed, rather than improved or accelerated

if, as Scott Aaronson argues (Quantum Computing since Democritus, “Fun with the Anthropic Principle”), some highly controversial versions of weird anthropic principles go poorly with Bayesian statistics, does that disprove Bayesian statistics?

Isaac Newton, along with Lucretius, argued (in an anthropic vein) that humanity must be of recent origin, given all the recent innovations and the lack of recorded history, considering the absurd alternative

slavery:

Some arguments given by Harper et al 1853 in The Pro-Slavery Argument are striking when quoted/paraphrased:

“Females are human and rational beings. They may be found of better faculties, and better qualified to exercise political privileges, and to attain the distinctions of society, than many men; yet who complains of the order of society by which they are excluded from them?” He says we have to function with general rules that are good for society even if they violate the rights of individuals (ie. one 18 year old might be capable to hold political office but we still don’t allow 18 year olds).

He goes on to list a bunch of ways in which society already restricts rights and liberty (including animals) and why we think that’s fine and why it’s super necessary, and why are you suddenly getting mad about slavery?

…he weirdly argues against utilitarianism by saying how can you compare the pleasure and suffering of a man with cultivated and nuanced taste to a man with dull and simple taste?

…“Who but a driveling fanatic has thought of the necessity of protecting domestic animals from the cruelty of their owners? And yet are not great and wanton cruelties practised on these animals?”

…“[whipping] would be degrading to a freeman, who had the thoughts and aspirations of a freeman. In general, it is not degrading to a slave, nor is it felt to be so. The evil is the bodily pain. Is it degrading to a child?”

phrenology was usually employed to justify American slavery, pointing to the supposed docility of slaves; but phrenologist & abolitionist George Combe argued that this very docility showed that slavery could be abolished without a repeat of problems like the 1804 Haiti massacre. (As no “war of extermination” took place after the Civil War when the slaves were freed, Combe appears to have been correct, if perhaps for the wrong reasons…)

Pet cloning is criticized on the grounds that it is a highly expensive way of creating another pet cat or dog, while there are many animals that could be adopted; one dog cloner, Amy Vangemert, offers an interesting defense of her choice by analogy to human (non) adoption:

She said: “I have had some serious backlash from people. A couple of acquaintances said I was wrong and it was inhumane and there were so many dogs out there that need to be adopted. But that’s like telling a mother that she shouldn’t have her own child when there are children out there who need parents.”

Antonio García Martínez criticizes the use of statistics in Facebook advertising, noting that models will inevitably pick up correlates of various statuses like SES or race, and unethically ‘discriminate’ based on this; he follows the logic and notes that, since everything is correlated, the same facts which make Facebook advertising unethical will make all other forms of advertising discriminatory, such as advertising in only one magazine and not all possible magazines, and hence advertising must be even further banned or regulated:

It’s worth noting that if this regulatory trend becomes well established and more generalized, it could have implications way beyond Facebook. Consider a magazine advertiser who chooses to publicize senior executive positions in male-oriented Esquire but not in female-oriented Marie Claire. Since magazine publishers commonly flaunt their specific demos in sales pitch decks, it’s easy for advertisers to segment audiences. Is that advertiser violating the spirit of the law? I would say so. Should the government enforce the law as they do with Facebook? Again, I would say so.

Is it immoral to train self-driving cars on public roads when (as of 2019) they appear to be only as safe as teenage or geriatric drivers?

Brad Templeton, who lives in testing hotspot Sunnyvale, frequently sees the cars on the road. He worked on them, too, as part of Google’s self-driving car project roughly a decade ago. Most experts in the field say real-world testing is needed, he says, something he agrees with. Templeton says a small number of crashes are acceptable when considering the eventual overall improved safety when human drivers are off the roads. He compares it to teenagers learning to drive. “We accept them driving, with very high risk, because it is the only way to turn them into safer middle-aged drivers. And all we get out of that is one safer driver,” he said. As autonomous vehicles are trained, “we get a million safer cars from a prototype fleet of hundreds.”

But John Joss, 85, doesn’t think the robot drivers are that mature. “They drive like either geriatrics or 17-year-olds who have very limited experience of driving,” said Joss, a magazine writer.

Recessions, surprisingly, decrease total mortality; does that mean recessions are good, or that decreases in total mortality are bad (and the increase in deaths during boom times is good)?

The Johnson & Johnson Covid-19 vaccine was linked to a rare blood-clotting side-effect in women; it was widely noted (including by at least 3 of my relatives when discussing it) that this was rarer than, say, the clotting risk from hormonal birth-control pills; naturally, of course, “A Vaccine Side Effect Leaves Women Wondering: Why Isn’t the Pill Safer?”!

Garry Kasparov has argued that Western history is essentially false (loosely allying with Fomenko’s New Chronology) because if one extrapolates the stable Western population growth rates of the past few centuries back to the Roman Empire, that produces Roman Empire population numbers orders of magnitude too low; therefore, the Roman Empire never existed and was somehow falsified.
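The shape of this extrapolation is easy to reproduce. The following Python sketch runs constant compound growth backwards; every number in it (the starting population, the growth rate, the time span, and the reference figure for the Roman Empire) is a hypothetical placeholder chosen only to show how a modest constant rate compounds into an implausibly small ancient population, and none is taken from Kasparov or Fomenko.

```python
import math

def back_extrapolate(population_now: float, annual_growth_rate: float, years: int) -> float:
    """Population implied `years` ago if growth had been constant at the given rate."""
    return population_now / (1.0 + annual_growth_rate) ** years

# HYPOTHETICAL inputs, for illustration only.
population_now = 1e9          # placeholder modern population
annual_growth_rate = 0.005    # placeholder 0.5% per year
years_back = 1800             # placeholder span back to the Roman Empire

implied_ancient = back_extrapolate(population_now, annual_growth_rate, years_back)

# HYPOTHETICAL reference value standing in for a conventional estimate;
# an assumption, not a sourced figure.
reference_estimate = 5e7
orders_of_magnitude_short = math.log10(reference_estimate / implied_ancient)

print(round(implied_ancient), round(orders_of_magnitude_short, 1))
```

Read as a modus ponens, the tiny implied figure impeaches the historical record; read as a modus tollens, it impeaches the premise that recent growth rates held constant for two millennia.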

Christ’s Prophecies: the references by Jesus Christ in the Gospels to an apparently-imminent apocalypse, such as the Olivet Discourse, have long vexed Christians as Judgment Day has not yet come. For example:

Truly I tell you, this generation [Greek: genea] will certainly not pass away until all these things have happened. Heaven and earth will pass away, but my words will not pass away.

or

For this we declare to you by a word from the Lord, that we who are alive, who are left until the coming of the Lord, will not precede those who have fallen asleep.

To explain them while maintaining the truth of the statements, there are a few logical possibilities. A logically valid (but unserious) answer would be to say that some of the generation or listeners have not yet died, and still wander the earth as Lazarus or the Wandering Jew.

A more defensible approach is to argue that the generation or listeners hadn’t all died before the events described and the events did happen, because they refer to events in the first century AD: Preterism.

One might question this because some promises like the resurrection of the dead do not seem to have happened at all, but full preterism maintains that all that indeed happened in 70 AD etc., but simply in a way we cannot observe!

From Diogenes Laërtius, Lives of the Eminent Philosophers, Book VI:

Some authors affirm that the following also belongs to him [Diogenes the Cynic]: that Plato saw him washing lettuces, came up to him and quietly said to him, ‘Had you paid court to Dionysius, you wouldn’t now be washing lettuces’, and that he with equal calmness made answer, ‘If you had washed lettuces, you wouldn’t have paid court to Dionysius.’

Science

Noriko: “Wow, you must have a real knack for it!”

Kazumi: “That’s not it, Miss Takaya! It takes hard work in order to achieve that.”

Noriko: “Hard work? You must have a knack for hard work, then!”

Gunbuster, episode 1

Probably the most famous current example in science is the still-controversial Bell’s theorem, where several premises lead to an experimentally-falsified conclusion, therefore, by modus tollens, one of the premises is wrong—but which? There is no general agreement on which to reject, leading to:

Superdeterminism: rejection of the assumption of statistical independence between choice of measurement & measurement (ie. the universe conspires so the experimenter always just happens to pick the ‘right’ thing to measure and gets the right measurement)

De Broglie–Bohm theory: rejection of the assumption of local variables, in favor of universe-wide variables (ie. the universe conspires to link particles, no matter how distant, to make the measurement come out right)

Transactional interpretation: rejection of the speed of light as a limit, allowing FTL/superluminal communication (ie. the universe conspires to let two linked particles communicate instantaneously to make the measurement come out right)

Many-Worlds interpretation: rejection of there being a single measurement in favor of every possible measurement (ie. the universe takes every possible path, ensuring it comes out right)

[Stephen Hawking] tested the existence of time travelers on 2009-06-28 by throwing a party & announcing it later; he reported no one else attended.8 Hawking concluded that no time travelers attended, that this was evidence for time travelers not existing, and time travel being impossible (consistent with his conjecture).

However, one could also conclude that time travelers do not exist because humans go extinct before inventing time travel, or that time travelers did attend the party invisibly, or that they did because Stephen Hawking was a time traveler!

randomized controlled experiments (RCTs), particularly with blinding or preregistration, especially larger replications of small famous correlational results, typically turn up much smaller or zero effects in medicine, psychology, and sociology; the more rigorous the experiment, the smaller the effect. The response to this is often to not explain how merely flipping a coin can make genuine effects disappear, but to attack the entire idea of RCTs/replication:

Rossi 1987 notes that a common reaction in sociology to the failure of many welfare or education programs, which ‘succeeded’ when studied at small scale or using correlational data and then failed when tested with large randomized experiments, is to deny that randomization or quantitative measurement are valid9

the Replication/Reproducibility crisis in psychology: Jason Mitchell argues that the inability of replicators to confirm the ‘stereotype threat’ effect means that replication doesn’t work (and shouldn’t be published):

The recent special issue of Social Psychology, for example, features one paper that successfully reproduced observations that Asian women perform better on mathematics tests when primed to think about their race than when primed to think about their gender. A second paper, following the same methodology, failed to find this effect (Moon & Roeder, 2014); in fact, the 95% confidence interval does not include the original effect size. These oscillations should give serious pause to fans of replicana. Evidently, not all replicators can generate an effect, even when that effect is known to be reliable. On what basis should we assume that other failed replications do not suffer the same unspecified problems that beguiled Moon and Reoder? The replication effort plainly suffers from a problem of false negatives.

Mina Bissell, likewise criticizing replication initiatives because biology research is so fragile that they will not get the same results (Gelman commentary):

Many scientists use epithelial cell lines that are exquisitely sensitive. The slightest shift in their microenvironment can alter the results—something a newcomer might not spot. It is common for even a seasoned scientist to struggle with cell lines and culture conditions, and unknowingly introduce changes that will make it seem that a study cannot be reproduced. Cells in culture are often immortal because they rapidly acquire epigenetic and genetic changes. As such cells divide, any alteration in the media or microenvironment—even if minuscule—can trigger further changes that skew results. Here are three examples from my own experience…

Trish Greenhalgh endorses a ban on RCTs because of the null effects they keep finding:

Here are some intellectual fallacies based on the more-research-is-needed assumption (I am sure readers will use the comments box to add more examples).

Despite dozens of randomized controlled trials of self-efficacy training (the ‘expert patient’ intervention) in chronic illness, most people (especially those with low socio-economic status and/or low health literacy) still do not self-manage their condition effectively. Therefore we need more randomized trials of self-efficacy training.

Despite conflicting interpretations (based largely on the value attached to benefits versus those attached to harms) of the numerous large, population-wide breast cancer screening studies undertaken to date, we need more large, population-wide breast cancer screening studies.

Despite the almost complete absence of ‘complex interventions’ for which a clinically as well as statistically-significant effect size has been demonstrated and which have proved both transferable and affordable in the real world, the randomized controlled trial of the ‘complex intervention’ (as defined, for example, by the UK Medical Research Council [3]) should remain the gold standard when researching complex psychological, social and organizational influences on health outcomes.

Despite consistent and repeated evidence that electronic patient record systems can be expensive, resource-hungry, failure-prone and unfit for purpose, we need more studies to ‘prove’ what we know to be the case: that replacing paper with technology will inevitably save money, improve health outcomes, assure safety and empower staff and patients.

Last year, Rodger Kessler and Russ Glasgow published a paper arguing for a ten-year moratorium on randomized controlled trials on the grounds that it was time to think smarter about the kind of research we need and the kind of study designs that are appropriate for different kinds of question.[4]

“Building an evidence base for IVF ‘add-ons’”, Macklon et al 2019, likewise echoes it:

Despite these challenges, major and laudable RCTs addressing clinical questions in our field reach publication in top journals. However, in addition to sharing the necessary major financial and manpower investment to perform, their clear tendency to produce negative findings means that they are primarily serving to remove treatment options from the clinician and their patient. This can of course represent an important contribution. However, when such trials test empirical treatments (which many IVF ‘add-ons’ are), they risk increasing confusion rather than clarity.

Scandinavian population registry studies, which are able to link lifelong government data on medical/tax/school/employment/military/IQ on the entire population of a country to perform retroactive longitudinal studies, are sometimes criticized as not being applicable to other countries; the irony is that the true Scandinavian/American difference on any research question is likely smaller than the total systematic biases + sampling error in the non-population-registry American studies one would have to use to try to estimate what that difference is.

dual n-back: meta-analyses of dual n-back effects on IQ and other ‘far transfer’ consistently find that studies with weak methodology like ‘passive’ control groups10 get stronger effects than ‘active’ control groups; does this mean that DNB works?

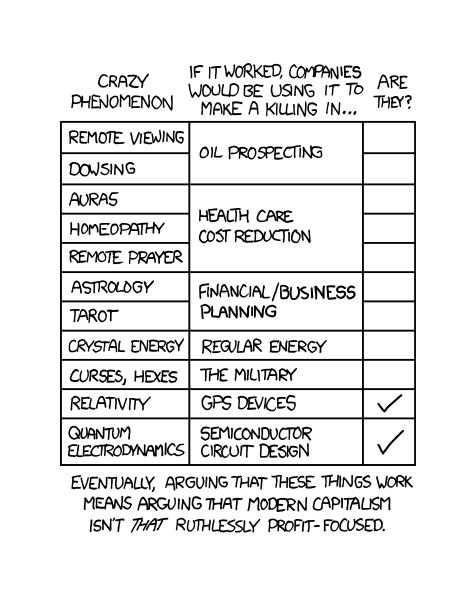

Daryl Bem published an analysis of multiple experiments following standard psychology procedures & statistics demonstrating the existence of ESP/psi; Bem believes this proves psi, but does this prove psi or instead disprove standard psychology methodology? (See also Jaynes on ESP.)

incidentally, a psi enthusiast states:

Given parapsychology’s chronic underfunding (it has been calculated that ALL the funding that has ever been received by parapsychologists would fund academic psychology for ONE month) it is surprising the amount that has been learnt so far.

…Stuart said: ‘the experimenter’s skeptical attitude meant his psi interfered with the psi of the participants’—it doesn’t take experimenter psi to interfere with the subjects performance. Simple experimenter attitudes and other subtle cues are picked up by the subjects and this affects their performance. Skeptic Wiseman ran a study with Marilyn Schlitz investigating distant intention. He got nothing, she found significant evidence. Exactly the same set-up. Attitudes make a difference, and no special pleading to experimenter psi is required.

This has long been a standard psi response to the observation that psi experiments get much smaller or nil effects when conducted by non-believers: it doesn’t indicate problems with the psi experiments, but rather the skeptics are unable to measure psi because their very skepticism emits an anti-psi field destroying psi.

heliocentrism vs geocentrism: Greek heliocentrism famously predicted stellar parallax, which was not observable at the time; heliocentrism’s requisite implied distance to stars was then used as a modus tollens.

Before the discovery of the timing error, the 2011 FTL neutrino anomaly was an excellent place to apply this ‘I defy (that particular) data’ reasoning, as are such errors in general; Steven Kaas puts it nicely:

According to [the 2009 blog post] “A New Challenge to Einstein”, General Relativity has been refuted at 98% confidence. I wonder if it wouldn’t be more accurate to say that, actually, 98% confidence has been refuted at General Relativity.

given the failure of personality GWASes and GCTAs indicating near-zero SNP heritability, does a genetics paper claiming to identify genes predicting almost all of personality heritability using only n~4k merely demonstrate that their method grossly overfits?11

does the Flynn effect mean that much of the population was retarded a century ago (or does that merely prove that the effect is ‘hollow’)?

more specifically: does the Flynn effect mean that the death penalty has been unjust in executing those we would now consider mentally retarded?12

on a possible correlation between hormones and Obama/Romney vote-shares (highly likely to be spurious):

“There is absolutely no reason to expect that women’s hormones affect how they vote any more than there is a reason to suggest that variations in testosterone levels are responsible for variations in the debate performances of Obama and Romney,” said Susan Carroll, professor of political science and women’s and gender studies.

-

James H. Borland, a professor of education at Teachers College, said that looking at the gifted landscape in New York City suggests that one of two things must be true: either black and Hispanic children are less likely to be gifted, or there is something wrong with the way the city selects children for those programs. “It is well known in the education community that standardized tests advantage children from wealthier families and disadvantage children from poorer families,” Dr. Borland said…That changed in September 2008, when the Bloomberg administration ushered in admission based only on a cutoff score on two high-stakes tests given in one sitting—the Otis-Lennon School Ability Test, or Olsat, and the Bracken School Readiness Assessment. The overhaul was meant to standardize the admissions process and make it fairer. But the new tests decreased diversity, with children from the poorest districts offered a smaller share of kindergarten gifted slots after those were introduced, while pupils in the wealthiest districts got more.

self-esteem-boosting interventions:

And indeed, they’ve gotten dramatic results. In one of the best-known studies, low-performing black middle school students who completed several 15-minute classroom writing exercises raised their GPAs by nearly half a point over two years, compared with a control group. Such astonishing results have struck some observers—particularly nonpsychologists—as nearly magical, and possibly unbelievable. But a growing body of evidence is showing that the interventions can work, not only among black middle school students, but also for women, minority college students and other populations.

“When this was first described to me, I was skeptical,” says physics professor Michael Dubson, PhD, of the University of Colorado-Boulder, who worked with psychologists there on a study with women physics students. “But now that I think about it, we all know that it’s possible to damage a student in 15 minutes. It’s easy to wreck someone’s self-esteem. So if that’s possible, then maybe it’s also possible to improve it.”

if a survey of Mensa self-reported diagnoses indicates that high-IQ individuals are at relative risks of physical & psychological disorders as high as RR = 223, contradicting almost all previous research, does that indicate that research was wrong or that Mensa surveys are not useful?

Richard Feynman supposedly was tested as a child by his school with an IQ score in the 130s; given his accomplishments, this is highly doubtful and a closer look at the source of the anecdote reveals many reasons why the score is either false or unreliable

if an analysis claims that there is only a 1 in ten trillion chance that William Shakespeare wrote Shakespeare’s plays…

if the FDA uses computational modeling of chemicals to argue that a plant has “high potential for abuse”, a plant which has been used by hundreds of thousands of Americans for decades without a known addiction epidemic, does that establish an impending threat to American public health, or refute their computational modeling?

And if a mouse model shows some dopaminergic effects of modafinil—which has been used by millions of Americans over the past 2–3 decades—does that indicate modafinil is at risk of serious drug addict abuse, or so much the worse for animal models?

if Freudian psychodynamics psychotherapy works as well as cognitive behavioral therapy (CBT), does CBT not work? More generally, if the Dodo bird verdict is true, do any of these psychological treatments work or are their theories true?

if nicotine has stimulating effects and a review can be written proposing using it for sports, does that debunk reviews or suggest that nicotine might be useful?

When Seth Roberts argues that one’s subjective memories about sleep conflict with the sleep data recorded by one’s Zeo EEG sleep-tracking device, does that constitute a disproof of the Zeo’s accuracy? No: establishing contradictions between one’s memories/subjective impressions and the Zeo merely tells us that one (or both) are wrong; it doesn’t tell us that the Zeo is wrong unless you have additional data or arguments which say that the Zeo is less reliable than the memories. One could take the Zeo contradicting memories as just proof of the fallibility of sleep-related memories (eg. Feige et al 2008)13! (The fundamental question of epistemology: “What do you believe, and why do you believe it?”)

For example, if someone is caught on camera sleep-walking, and denies strenuously that he was sleep-walking, do you take modus ponens and say his memories prove he was not sleep-walking and reject the camera footage; or modus tollens and say that the claim that his sleep memories are reliable implies he could not have been caught on camera, but he was, therefore we can reject the claim his sleep memories imply no walking? But extraordinary claims require extraordinary evidence, so you choose to take modus tollens—because you have priors which say that memories are malleable and untrustworthy, while camera footage is much harder to fake.

model checking & uncertainty: if a statistical analysis assumes that extremely high IQ is normally distributed (rather than following a truncated normal distribution) and concludes from a headcount of high IQ types at Harvard that >90% of high IQ people are failures & discriminated against by society, does that show that almost every smart person is doomed, or that the statistical model is incorrect?

if obesity and Internet use are addictive in similar ways, should we take Internet usage much more seriously?

-

What I’m saying is that to argue that our ancestors were sexual omnivores is no more a criticism of monogamy than to argue that our ancestors were dietary omnivores is a criticism of vegetarianism.

if the Civil War wiped out most of the wealth of many rich families, but their children gradually recovered in SES despite being raised poor with no inheritance, does that show the importance of genetics to human capital, or that actually, all intergenerational transmission is thanks to “the importance of social networks in facilitating employment opportunities and access to credit”?

the existence of white dwarf stars, when Subrahmanyan Chandrasekhar calculated his Chandrasekhar limit (earning a Nobel fully 48 years later); Lev Landau apparently also realized the implication, but “concluded that quantum laws might be invalid for stars heavier than 1.5 solar mass.”, while Arthur Eddington balked at the implications entirely:

Dr. Chandrasekhar had got this result before, but he has rubbed it in in his last paper; and, when discussing it with him, I felt driven to the conclusion that this was almost a reductio ad absurdum of the relativistic degeneracy formula. Various accidents may intervene to save the star, but I want more protection than that. I think there should be a law of Nature to prevent a star from behaving in this absurd way!

If one takes the mathematical derivation of the relativistic degeneracy formula as given in astronomical papers, no fault is to be found. One has to look deeper into its physical foundations, and these are not above suspicion. The formula is based on a combination of relativity mechanics and non-relativity quantum theory, and I do not regard the offspring of such a union as born in lawful wedlock…

is the life span of the oldest person to ever live, Jeanne Calment, too good to be true & prima facie proof of fraud?

which is more likely, that hydrocephalus can destroy 95% of a human brain without necessarily reducing intelligence & possibly even increasing intelligence in reported cases—or that this conflates brain volume with brain matter, and the hydrocephalus cases in question are unverifiable, mislabeled, & misdescribed?

in defending the existence of the Pygmalion effect, Rosenthal & Jacobson 1968 invoke an earlier animal experiment of theirs (done to criticize Tryon’s Rats): “If animals become ‘brighter’ when expected to by their experimenters, then it seemed reasonable to think that children might become brighter when expected to by their teachers.” However, the Pygmalion effect has been debunked; so, if children do not become brighter when expected to by their teachers, then surely that casts doubt on their claim that the animals became brighter too…?

Biological cells build up waste-products like lipofuscin as they age, which they cannot or do not dispose of. Chemist Johan Bjorksten noted of such cross-linked material, to quote Mike Darwin’s description14:

He had noticed that as organisms age, they tend to accumulate insoluble, often pigmented matter inside their non-dividing cells. Lipofuscin, which accumulates most prominently in brain and cardiac cells, is one such “age pigment.”…Bjorksten determined that this insoluble material, which could occupy as much as 30% to 40% of the volume of non-dividing cells in aged animals, consisted largely of cross linked molecules of lipids and proteins. So molecularly cross linked, compact and tough was this material that it was completely resistant to digestion by trypsin and other commonly available “digestive” biological enzymes.

This posed a puzzle for Bjorksten, because if no living systems could decompose this material, it was so stable that it would necessarily remain as indigestible debris after each organism died. Thus, the earth should be covered in such debris by now! Clearly, this is not case, and so this implied to Bjorksten that there must, in fact, be living organisms with specialized enzymes capable of breaking down this material…He set out to find enzymes in nature which could reverse these cross links and thus, he thought, reverse aging.

This observation has been broadened to the “microbial infallibility hypothesis” of bioremediation: “microorganisms will be found to degrade every chemical substance synthesized by any living organism.” Later gerontologist Aubrey de Grey applied the same reasoning in looking for enzymes to break down lipofuscin, specifically, in Ending Aging 2007 (“Upgrading the Biological Incinerators”, pg121):

…what was needed was a biomedical superfund project…There were actual land sites all over the planet that should be very badly contaminated by lipofuscin, because their soil has been seeded with the stuff for generations. I speak, of course, of graveyards…there was no accumulation of lipofuscin in cemeteries—and if there was, we certainly ought to be aware of it, because lipofuscin is fluorescent. Months later, when I was discussing the issue with fellow Cambridge scientist John Archer, he would put the disconnect succinctly: “Why don’t graveyards glow in the dark?”

…Scientists became interested in this phenomenon in the 1950s, when it was noted that the levels of many hard-to-degrade pollutants at contaminated sites were present at much lower levels than would have been expected. A big part of the explanation turned out to be the rapid evolution of quickly reproducing organisms like bacteria. Any highly energy-rich substance represents a potential feast—and thus, an ecological niche—for any organism possessing the enzymes needed to digest that material and liberate its stored energy.

And sporopollenin.

Peer review and scholarly awards vs falsified predictions—Noah Smith summarizes one instance where many people made the move:

Biologist Paul Ehrlich is one of the most discredited popular intellectuals in America. He’s so discredited that his Wikipedia page starts the second paragraph with “Ehrlich became well known for the discredited 1968 book The Population Bomb”. In that book he predicted that hundreds of millions of people would starve to death in the decade to come; when no such thing happened (in the 70s or ever so far), Ehrlich’s name became sort of a household joke among the news-reading set.

And yet despite all this, in the year 2022, 60 Minutes still had Ehrlich on to offer his thoughts on wildlife loss. When the news program was roundly ridiculed for giving Ehrlich air time, the 90-year-old scholar defended himself on Twitter by citing his academic credentials, and the fact that The Population Bomb had been peer-reviewed:

60 Minutes extinction story has brought the usual right-wing out in force. If I’m always wrong so is science, since my work is always peer-reviewed, including the POPULATION BOMB and I’ve gotten virtually every scientific honor. Sure I’ve made some mistakes, but no basic ones.

As many acidly pointed out, the fact that Ehrlich has impeccable credentials and was peer-reviewed is a reason to take a more skeptical eye toward academic credentials and peer review in general. Maybe we’ve gotten better at these things since the 60s, and maybe not. But being spectacularly wrong with the approval of a community of experts is much worse than being spectacularly wrong as a lone kook, because it means that the whole field of people we’ve entrusted to serve as experts on a topic somehow allowed itself to embrace total nonsense.

the Arago spot: Poisson deduced it as a theoretical reductio ad absurdum of Fresnel’s theory of light, which had been submitted to a prestigious contest; Fresnel’s ally François Arago instead decided to try to observe the supposed spot—and succeeded

Olbers’s paradox defines a trilemma: given that we do not see a bright night sky, the universe cannot be all of: infinite, homogeneous, and eternal.

Miscellaneous

if the head injury rates for cars and bicycles are similar (or various sports, or for showers, or walking, especially in the elderly), and one wouldn’t wear a helmet inside a car, should one not wear a bicycle helmet as well?

if a soda has the equivalent of two Cadbury chocolate eggs of sugar or 6 donuts, perhaps one should feel less guilty about eating candy

if terrorism is less of a mortality risk than bathtub accidents, and we spend much more on fighting terrorism than on household accidents, should we spend less on terrorism or more on accident reduction?

On Sweden, Tyler Cowen has said:

Q: “…And then they look at Canada, or Scandinavia, which are less capitalist than the US, and that looks pretty good to them. And so they blame capitalism. Do you think that they’re wrong? Which part of that story is wrong?”

Tyler Cowen: “People do look at Sweden, but they rarely point out that per capita income in West Virginia right now is about the same as that in Sweden. The state that’s supposed to be our biggest train wreck is about as rich as Sweden. I’m not saying the quality of public goods is always as high in West Virginia, but I think that fact is not nearly widely enough known.”

Littlewood’s Law: are mass bomb threats to Jews in America evidence of lurking white supremacists empowered by the election of Donald Trump, or merely hoaxes by a sociopathic Israeli Jew?

does European immigration into America and the Native Americans imply that America should have an Open Borders policy?

in the ‘socialist calculation debate’, it was noted that centralized planning would incur infeasible computational demands; now that both computers & planning algorithms have advanced by many orders of magnitude, does that mean socialism is possible?

On Portuguese investments: “Portuguese business leaders say that Angolan investments unfairly attract the kind of scrutiny that money from elsewhere, including China, does not.”

the visual novel Umineko relies on a “treachery of images” twist in order to deceive the reader; one reader praises the writing for being so bad that the reader should be able to deduce this twist from the beginning:

Looking at this scene now, it’s pretty amazing that I never figured out that Kinzo was dead from the beginning. Everything about not being able to meet him directly and Nanjo’s hedging is so ridiculously suspicious. Ryukishi was probably banking on people using that to figure out that not every scene could be taken literally.

the logical inversion can be used for comedic effect: many Oglaf (NSFW) and SMBC comics in particular draw on this

Sports doping can be seen with the naked eye in the history of world records: any period in which records are being regularly broken or where small countries oddly outperform15 may indicate abuse. For example, women’s sports, particularly weightlifting or track & field, set many records in the Cold War thanks to the massive state-sponsored doping programs (eg. East Germany & Russia), and many of those records still officially stand (even though they were obviously fraudulent).

In 2023, Paul Graham noted that “By a remarkable coincidence, the introduction of complete birth registration in the US had an effect on the number of supercentenarians similar to the effect that testing for steroids had on women’s world records.”; a reader objected that “Incorrect chart [about women’s records disappearing]. Pole vault alone has had women’s world record set 30 or so times since 2000.”

But this could also simply mean that the testing didn’t work. What were the circumstances?

If we look at the records in question, we immediately notice two things: they are almost all set by a single Russian woman, Yelena Isinbayeva, and the record-breaking stopped dead in 2009 (14 years and counting) when she retired from competition—in the wake of the revelation of the hugely successful Russian doping program and how the FSB had beaten the various tests, through measures like breaking into storage facilities to swap out tainted urine samples. (Ironically, one of her post-athletic jobs has been oversight of the Russian anti-doping agency.) Some of her records have stood since 2005, despite continued improvements in pole-vaulting technology and general athletic progress.

Obviously, Isinbayeva’s once-in-multiple-generations level of success was at least partially due to doping, and it ended once better testing came into effect. So the modus tollens objection backfires by instead being a modus ponens demonstrating how well Graham’s original observation works for detecting cheating.

External Links

Appendix

Jaynes on ESP

Bayesian E.T. Jaynes, in “Chapter 5: Queer uses for probability theory”, discusses the probabilistic generalization of the reasoning we are engaged in when we choose whether to modus ponens or modus tollens, with early ESP experiments as an example, pointing out that from a Bayesian perspective, all claims are being evaluated in a larger Bayesian-model-comparison context where issues like experimenter error or bias are always possibilities:

What probability would you assign to the hypothesis that Mr. Smith has perfect extrasensory perception (ESP)? He can guess right every time which number you have written down. To say zero is too dogmatic…We take this man who says he has extrasensory perception, and we will write down some numbers 1–10 on a piece of paper and ask him to guess which numbers we’ve written down. We’ll take the usual precautions to make sure against other ways of finding out. If he guesses the first number correctly, of course we will all say “you’re a very lucky person, but I don’t believe it.” And if he guesses two numbers correctly, we’ll still say “you’re a very lucky person, but I don’t believe it.” By the time he’s guessed four numbers correctly—well, I still wouldn’t believe it. So my state of belief is certainly lower than −40 db. How many numbers would he have to guess correctly before you would really seriously consider the hypothesis that he has extrasensory perception? In my own case, I think somewhere around 10. My personal state of belief is, therefore, about −100 db. You could talk me into a ±10 change, and perhaps as much as ±30, but not much more than that.

But on further thought we see that, although this result is correct, it is far from the whole story. In fact, if he guessed 1000 numbers correctly, I still would not believe that he has ESP, for an extension of the same reason that we noted in Chapter 4 when we first encountered the phenomenon of resurrection of dead hypotheses. An hypothesis A that starts out down at −100 db can hardly ever come to be believed whatever the data, because there are almost sure to be alternative hypotheses (B1, B2, . . .) above it, perhaps down at −60 db. Then when we get astonishing data that might have resurrected A, the alternatives will be resurrected instead. Let us illustrate this by two famous examples, involving telepathy and the discovery of Neptune.
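The decibel arithmetic in the passage above can be made concrete with a minimal sketch. It assumes Jaynes's convention that evidence is 10·log10 of the odds, takes the −100 db prior for ESP and the "perhaps down at −60 db" alternative from the quote, and adds two illustrative assumptions that are not in the source: the alternative is a single "deception" hypothesis, and both ESP and deception predict a correct guess with probability 1, while chance predicts 1 in 10. The function and variable names are hypothetical.

```python
import math

def db_to_prob(db):
    """Convert evidence in decibels, 10*log10(odds), into a probability."""
    odds = 10 ** (db / 10)
    return odds / (1 + odds)

def prob_to_db(p):
    """Convert a probability into evidence in decibels, 10*log10(odds)."""
    return 10 * math.log10(p / (1 - p))

def posterior(priors, per_guess_likelihood, n_correct):
    """Posterior over hypotheses after n_correct consecutive correct guesses."""
    weights = {
        h: priors[h] * per_guess_likelihood[h] ** n_correct for h in priors
    }
    total = sum(weights.values())
    return {h: w / total for h, w in weights.items()}

P_ESP = db_to_prob(-100)        # prior taken from the quoted passage
P_DECEPTION = db_to_prob(-60)   # "perhaps down at -60 db" (single alternative: assumption)

# Two hypotheses only: each correct 1-in-10 guess adds 10 db for ESP.
two_way = posterior(
    {"esp": P_ESP, "chance": 1 - P_ESP},
    {"esp": 1.0, "chance": 0.1},
    n_correct=10,
)

# Add a deception hypothesis that explains the data just as well (assumption).
three_way = posterior(
    {"esp": P_ESP, "deception": P_DECEPTION, "chance": 1 - P_ESP - P_DECEPTION},
    {"esp": 1.0, "deception": 1.0, "chance": 0.1},
    n_correct=1000,
)

print(f"two hypotheses, 10 correct:     ESP at {prob_to_db(two_way['esp']):.1f} db")
print(f"three hypotheses, 1000 correct: ESP at {prob_to_db(three_way['esp']):.1f} db")
```

Under these assumed numbers, ten correct guesses bring ESP to roughly even odds when chance is the only rival, but once a deception hypothesis is admitted, even 1000 correct guesses leave ESP near −40 db: the astonishing data resurrect the alternative instead.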

…on the basis of such a result [as Mrs. Stewart’s experimental results in Modern Experiments In Telepathy, Soal & Bateman 1954], ESP researchers would proclaim a virtual certainty that ESP is real. …it hardly matters what these prior probabilities are; in the view of an ESP researcher who does not consider the prior probability Pf = P(Hf | X) particularly small, P(Hf | D, X) is so close to unity that its decimal expression starts with over a hundred 9’s. He will then react with anger and dismay when, in spite of what he considers this overwhelming evidence, we persist in not believing in ESP. Why are we, as he sees it, so perversely illogical and unscientific? The trouble is that the above calculations (5-9) and (5-12) represent a very naive application of probability theory, in that they consider only Hp and Hf; and no other hypotheses. If we really knew that Hp and Hf were the only possible ways the data (or more precisely, the observable report of the experiment and data) could be generated, then the conclusions that follow from (5-9) and (5-12) would be perfectly all right. But in the real world, our intuition is taking into account some additional possibilities that they ignore.

…When we are dealing with some extremely implausible hypothesis, recognition of a seemingly trivial alternative possibility can make orders of magnitude difference in the conclusions. Taking note of this, let us show how a more sophisticated application of probability theory explains and justifies our intuitive doubts.

Let Hp, Hf, and Lp, Lf, Pp, Pf be as above; but now we introduce some new hypotheses about how this report of the experiment and data might have come about, which will surely be entertained by the readers of the report even if they are discounted by its writers. These new hypotheses (H1, H2 . . . Hk) range all the way from innocent possibilities such as unintentional error in the record keeping, through frivolous ones (perhaps Mrs. Stewart was having fun with those foolish people, with the aid of a little mirror that they did not notice), to less innocent possibilities such as selection of the data (not reporting the days when Mrs. Stewart was not at her best), to deliberate falsification of the whole experiment for wholly reprehensible motives. Let us call them all, simply, “deception”. For our purposes it does not matter whether it is we or the researchers who are being deceived, or whether the deception was accidental or deliberate. Let the deception hypotheses have likelihoods and prior probabilities Li, Pi, i = (1, 2, …, k). There are, perhaps, 100 different deception hypotheses that we could think of and are not too far-fetched to consider, although a single one would suffice to make our point. In this new logical environment, what is the posterior probability of the hypothesis Hf that was supported so overwhelmingly before? Probability theory now tells us: (5-13)

P(Hf | D, X) = Pf Lf / (Pf Lf + Pp Lp + Σi Pi Li)

Introduction of the deception hypotheses has changed the calculation greatly; in order for P(Hf | D, X) to come anywhere near unity it is now necessary that: (5-14)

Pp Lp + Σi Pi Li ≪ Pf Lf

From (5-7), Pp Lp is completely negligible so (5-14) is not greatly different from: (5-15)

Σ Pi ≪ Pf

But each of the deception hypotheses is, in my judgment, more likely than Hf, so there is not the remotest possibility that inequality (5-15) could ever be satisfied.

Therefore, this kind of experiment can never convince me of the reality of Mrs. Stewart’s ESP; not because I assert Pf = 0 dogmatically at the start, but because the verifiable facts can be accounted for by many alternative hypotheses.

…Indeed, the very evidence which the ESPers throw at us to convince us, has the opposite effect on our state of belief; issuing reports of sensational data defeats its own purpose. For if the prior probability of deception is greater than that of ESP, then the more improbable the alleged data are on the null hypothesis of no deception and no ESP, the more strongly we are led to believe, not in ESP, but in deception. For this reason, the advocates of ESP (or any other marvel) will never succeed in persuading scientists that their phenomenon is real, until they learn how to eliminate the possibility of deception in the mind of the reader.

It is interesting that Laplace perceived this phenomenon long ago. His Essai Philosophique sur les probabilités (1819) has a long chapter on the “Probabilities of Testimonies”, in which he calls attention to “the immense weight of testimonies necessary to admit a suspension of natural laws”. He notes that those who make recitals of miracles, “decrease rather than augment the belief which they wish to inspire; for then those recitals render very probable the error or the falsehood of their authors. But that which diminishes the belief of educated men often increases that of the uneducated, always avid for the marvelous.”

We observe the same phenomenon at work today, not only in the ESP enthusiast, but in the astrologer, reincarnationist, exorcist, fundamentalist preacher or cultist of any sort, who attracts a loyal following among the uneducated by claiming all kinds of miracles; but has zero success in converting educated people to his teachings. Educated people, taught to believe that a cause-effect relation requires a physical mechanism to bring it about, are scornful of arguments which invoke miracles; but the uneducated seem actually to prefer them. [see also David Hume’s “Of Miracles”]
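Jaynes’s point can be checked numerically. The following is a minimal sketch, not Jaynes’s own calculation: it borrows his round figures (ESP at −100 db, an alternative at −60 db) as illustrative priors, lumps all “deception” into a single hypothesis, and assumes that both ESP and deception predict a perfect run of guesses with likelihood 1, while chance predicts each guess with probability 1⁄10. The function names are my own.

```python
import math

def db_to_prob(db):
    """Convert evidence in decibels, 10*log10(odds), into a probability."""
    odds = 10 ** (db / 10)
    return odds / (1 + odds)

def prob_to_db(p):
    """Convert a probability back into evidence in decibels."""
    return 10 * math.log10(p / (1 - p))

def posterior_esp(prior_esp, prior_deceptions, n_hits, p_chance=0.1):
    """Posterior of the ESP hypothesis after n_hits consecutive correct guesses.

    Same shape as (5-13): Pf Lf / (Pf Lf + Pp Lp + sum_i Pi Li).
    Assumed likelihoods: ESP and every deception hypothesis predict the
    data perfectly (L = 1); pure chance gives p_chance ** n_hits.
    """
    prior_chance = 1 - prior_esp - sum(prior_deceptions)
    esp_term = prior_esp * 1.0
    chance_term = prior_chance * p_chance ** n_hits
    deception_term = sum(p * 1.0 for p in prior_deceptions)
    return esp_term / (esp_term + chance_term + deception_term)

# Illustrative priors only (assumptions, not measured quantities).
P_ESP = db_to_prob(-100)        # about 1e-10
P_DECEPTION = db_to_prob(-60)   # about 1e-6

# Naive analysis: only "chance" vs. "ESP" are on the table.
naive_10 = posterior_esp(P_ESP, [], n_hits=10)
naive_20 = posterior_esp(P_ESP, [], n_hits=20)

# With one deception hypothesis, even 1000 hits barely help ESP.
realistic_1000 = posterior_esp(P_ESP, [P_DECEPTION], n_hits=1000)

print(naive_10, naive_20, realistic_1000, prob_to_db(realistic_1000))
```

Under these assumptions, the naive two-hypothesis analysis reaches even odds after 10 correct guesses and near-certainty after 20, which matches Jaynes’s “somewhere around 10”. Once a single deception hypothesis is admitted, the posterior for ESP stalls near the ratio of the two priors, roughly 1 in 10,000 (about −40 db), however long the run of successes: the astonishing data resurrects deception, not ESP.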

Slavery and Phrenology

George Combe (1788–1858) appears to have made a phrenological argument (that African docility implies the morality of abolition rather than maintaining slavery) in 3 places: a marginal note on a letter from a pro-slavery advocate in 1839; his Notes on the United States of North America, 1841; and his System of Phrenology, 1843:

Caldwell’s long letter on the ‘animal organs’ reached Combe in September 1839; as reproduced & summarized by Poskett 2016:

It did not matter, as most whites agreed, that Africans possessed inferior brains. What mattered was whether they could be entrusted with freedom. Filling the space to the side of Caldwell’s first sheet, Combe compared the character of Africans and Native Americans. Drawing on Caldwell’s own animal comparison, he wrote that Native Americans were ‘indomitable, ferocious savages. They are not tameable. They are not slaves because they are not tameable’. With the Second Seminole War still raging in Florida, this argument appealed directly to southern fears of the Native American population. In contrast, Combe argued, ‘Africans are mild, docile & intelligent, compared with them. They are slaves because they are tameable’. Here Combe first expressed an idea in note-form which he would later return to in print. Stories of violent slave rebellions in Virginia and Jamaica fuelled white fears of immediate abolition throughout the 1830s. Caldwell himself argued that ‘strife and blood-shed would soon become the daily occupation’ of the free African. Combe responded to these concerns, arguing that phrenology in fact showed African character to be essentially placid, writing that ‘the qualities which make them submit to slavery are a guarantee that if emancipated & justly dealt with, they wd [sic] not shed blood’. Combe later repeated this argument in the 1840s, first in his Notes on the United States of North America and then in the fifth and expanded edition of his System of Phrenology. But he first worked it out in the margins of Caldwell’s letter.

Notes on the United States of North America:

Mr. Clay regards it as certain, that if slavery were abolished, a war of extermination would ensue between the races, which would lead to greater evils than those generated by slavery. This is the argument of the white man, of the master, in whose eyes his own losses or sufferings are ponderous as gold, and those of three millions of Negroes light as a feather. Ask the Negroes their opinion of the miseries of the existing system and weigh this against the evils anticipated by the Whites from emancipation, and then strike the balance. Before I had an opportunity of studying the Negro character and Negro brain, I entertained the same opinion with Mr. Clay, that a war of extermination would be the consequence of immediate freedom. More accurate and extensive information has induced me to change this view. I may here anticipate a statement which belongs, in chronological order, to a more advanced date, namely, that I have studied the crania of the North American Indians and of the Negroes in various parts of the United States, and also observed their living heads, and have arrived at the following conclusions. The North American Indians have given battle to the Whites, and perished before them, but have never been reduced either to national or to personal servitude. The development of the brains shows large organs of Destructiveness, Secretiveness, Cautiousness, Self-Esteem, and Firmness, with deficient organs of Benevolence, Conscientiousness, and Reflection. This indicates a natural character that is proud, cautious, cunning, cruel, obstinate, vindictive, and little capable of reflection or combination. The brain of the Negro, in general (for there are great varieties among the African race, and individual exceptions are pretty numerous), shows proportionately less Destructiveness, Cautiousness, Self-Esteem, and Firmness, and greater Benevolence, Conscientiousness, and Reflection, than the brain of the native American. 
In short, in the Negro brain the moral and Reflecting organs are of larger size, in proportion to the organs of the animal propensities now enumerated, than in that of the Indian. The Negro is, therefore, naturally more submissive, docile, intelligent, patient, trustworthy, and susceptible of kindly emotions, and less cruel, cunning, and vindictive, than the other race.

These differences in their natural dispositions throw some light on the differences of their fates. The American Indian has escaped the degradation of slavery, because he is a wild, vindictive, cunning, untameable savage, too dangerous to be trusted by the white men in social intercourse with themselves, and moreover, too obtuse and intractable to be worth coercing into servitude. The African has been deprived of freedom and rendered “property,” according to Mr. Clay’s view, because he is by nature a tame man, submissive, affectionate, intelligent and docile. He is so little cruel, cunning, fierce, and vindictive, that the white men can oppress him far beyond the limits of Indian endurance, and still trust their lives and property within his reach; while he is so intelligent that his labor is worth acquiring. The native American is free, because he is too dangerous and too worthless a being to be valuable as a slave; the Negro is in bondage, because his native dispositions are essentially amiable. The one is like the wolf or the fox, the other like the dog. In both, the brain is inferior in size, particularly in the moral and intellectual regions, to that of the Anglo-Saxon race, and hence the foundation of the natural superiority of the latter over both; but my conviction is, that the very qualities which render the Negro in slavery a safe companion to the White, would make him harmless when free. If he were by nature proud, irascible, cunning and vindictive, he would not be a slave; and as he is not so, freedom will not generate these qualities in his mind; the fears, therefore, generally entertained of his commencing, if emancipated, a war of extermination, or for supremacy over the Whites, appear to me to be unfounded; unless, after his emancipation, the Whites should commence a war of extermination against him. 
The results of emancipation in the British West India Islands have hitherto borne out these views, and I anticipate that the future will still farther confirm them.

A System of Phrenology, similarly:

I have studied the crania and living heads of North American Indians and of Negroes in various parts of the United States, and, after considering their history, I submit the following remarks. The North American Indians have given battle to the Whites, and perished before them, but have never been reduced either to national or to personal servitude. The development of their brains shews large organs of Destructiveness, Secretiveness, Cautiousness, Self-Esteem, and Firmness, with deficient organs of Benevolence, Conscientiousness, and Reflection. This indicates a natural character that is proud, cautious, cunning, cruel, obstinate, vindictive, and little capable of reflection or combination. The brain of the Negro, in general (for there are great varieties among the African race, and individual exceptions are pretty numerous), shews proportionately less Destructiveness, Cautiousness, Self-Esteem, and Firmness, and greater Benevolence, Conscientiousness, and Reflection, than the brain of the native American. In short, in the Negro brain the moral and reflecting organs are of larger size, in proportion to the organs of the animal propensities now enumerated, than in that of the Indian. The Negro is, therefore, naturally more submissive, docile, intelligent, patient, trustworthy, and susceptible of kindly emotions, and less cruel, cunning, and vindictive, than the other race.